Adtriba Accelerates and Advances Media Mix Modeling Using the Anyscale Fully-Managed Ray Platform

10,000 model iterations that would otherwise take a week now run in under 3 hours, with advanced insights on the impact of media investments.

Adtriba is a Marketing Measurement company focused on helping businesses holistically evaluate their marketing and optimize budget allocation. Adtriba enables modern media measurement centered around 3 major pillars that rely on AI:

Advanced Multi-Touch Attribution: Leverages detailed click-through data and user-level data in order to allocate credit along the customer journey and provide granular, campaign-level insights.

Media Mix Modeling: Provides a more high-level strategic view by using aggregated data and time-series regression models in order to establish a relationship between media (on- and offline) input and commercial output.

Experiments (incrementality tests): Used to validate the learnings from both models.

The Challenge

While each of these methods is useful in isolation, they work best when combined into a holistic measurement system. Multi-touch attribution is often solved using classification algorithms, and tests can be analyzed using various frameworks such as Meta's GeoLift. Media Mix Modeling, however, demands more sophisticated AI/ML models to overcome its typical challenges and adhere to certain requirements.

Media Mix Models are typically used to:

Quantify the impact of marketing over time

Use the fitted model to plug into a solver for optimized budget allocation

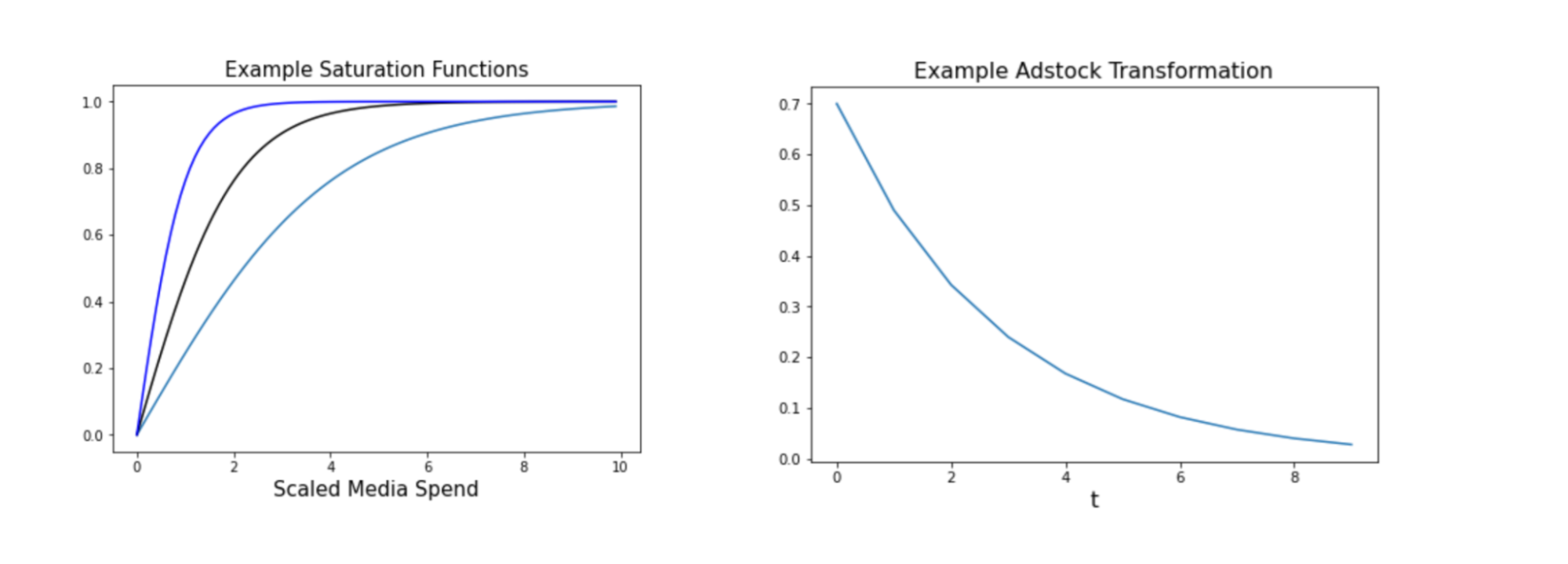

In order to fit a realistic and “useful” model, at Adtriba we cannot simply leverage any black-box ML algorithm, but rather need to conform to more explicit functional forms. Specifically, we need to build models that can assess and address the carry-over effects, shape effects and ongoing trends of the media response functions. Beyond that, we need to assign constraints: media should not have a negative effect, and price usually has a negative effect, though not always (hi Gucci, Apple…). Models also need to address changing media efficiency over time. So what's needed is a modeling solution that allows us to assign constraints, to model adstock (carry-over) effects that capture the delayed media impact over time, and to model the saturation, or shape, of the media effect.
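To make these functional forms concrete, here is a minimal sketch of one common way to express carry-over and saturation: a geometric adstock followed by a Hill curve, with a non-negativity constraint on the media coefficient. These particular forms, and all function and parameter names, are illustrative assumptions rather than Adtriba's actual implementation.

```python
def geometric_adstock(spend, decay):
    """Carry a fraction `decay` of each period's accumulated effect into the next."""
    if not 0.0 <= decay < 1.0:
        raise ValueError("decay must be in [0, 1)")
    carried = 0.0
    out = []
    for x in spend:
        carried = x + decay * carried
        out.append(carried)
    return out


def hill_saturation(x, half_saturation, shape):
    """Diminishing-returns curve in [0, 1); equals 0.5 at x == half_saturation."""
    if x <= 0:
        return 0.0
    return x**shape / (x**shape + half_saturation**shape)


def media_response(spend, decay, half_saturation, shape, beta):
    """Adstock, then saturate, then scale by a non-negative coefficient."""
    if beta < 0:
        raise ValueError("constraint: media must not have a negative effect")
    adstocked = geometric_adstock(spend, decay)
    return [beta * hill_saturation(a, half_saturation, shape) for a in adstocked]


# Hypothetical weekly spend for a single channel.
weekly_spend = [100.0, 0.0, 0.0, 50.0]
adstocked = geometric_adstock(weekly_spend, decay=0.5)
# adstocked == [100.0, 50.0, 25.0, 62.5]: spend in week 1 keeps working in weeks 2 and 3
response = media_response(weekly_spend, decay=0.5, half_saturation=50.0, shape=2.0, beta=10.0)
```

The `decay`, `half_saturation` and `shape` values are exactly the kind of hyperparameters that have to be searched over or given priors, which is what makes fitting these models expensive.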

While there are a few ways to achieve these AI modeling objectives, the dominant methods are:

Non-linear optimization - here, one writes a custom non-linear program in order to find the “optimal” points

Bayesian inference - as popularized by Google in its seminal “Bayesian Methods for Media Mix Modeling with Carryover and Shape Effects”

Hyperparameter optimization - as popularized by Meta's Robyn package

In addition to these, there are other models, which mainly relate to the various challenges and biases we need to address when trying to derive causal conclusions from observational data. For an in-depth discussion of these, refer to an excellent paper published by Google called “Challenges and Opportunities in Media Mix Modeling”.

Advanced AI-driven Media Mix Modeling can greatly increase the accuracy of MMMs by calibrating them with external results and benchmarks. Ideally, these come from incrementality tests. Other sources in the measurement stack, like MTA, can be useful too. Even platform-reported attributions might be useful as a reasonable upper bound.

The Anyscale Platform and Ray

At Adtriba we develop and maintain our own custom Media Mix Modeling package, which includes models based on different types of “base” models, including Uber's Orbit, NumPyro and even scikit-learn. While each framework has its advantages and disadvantages, we don't want to be limited to any one solution, but always leverage the model - or combination of models - that is best suited to the dataset / modeling task at hand. Additionally, we wanted to include intuitive calibration capabilities by minimizing the distance between the model's attributions and a “ground” or “external” source of truth. This is where the Anyscale Platform built on Ray and Ray's multi-objective hyperparameter search algorithms provide an advantage.

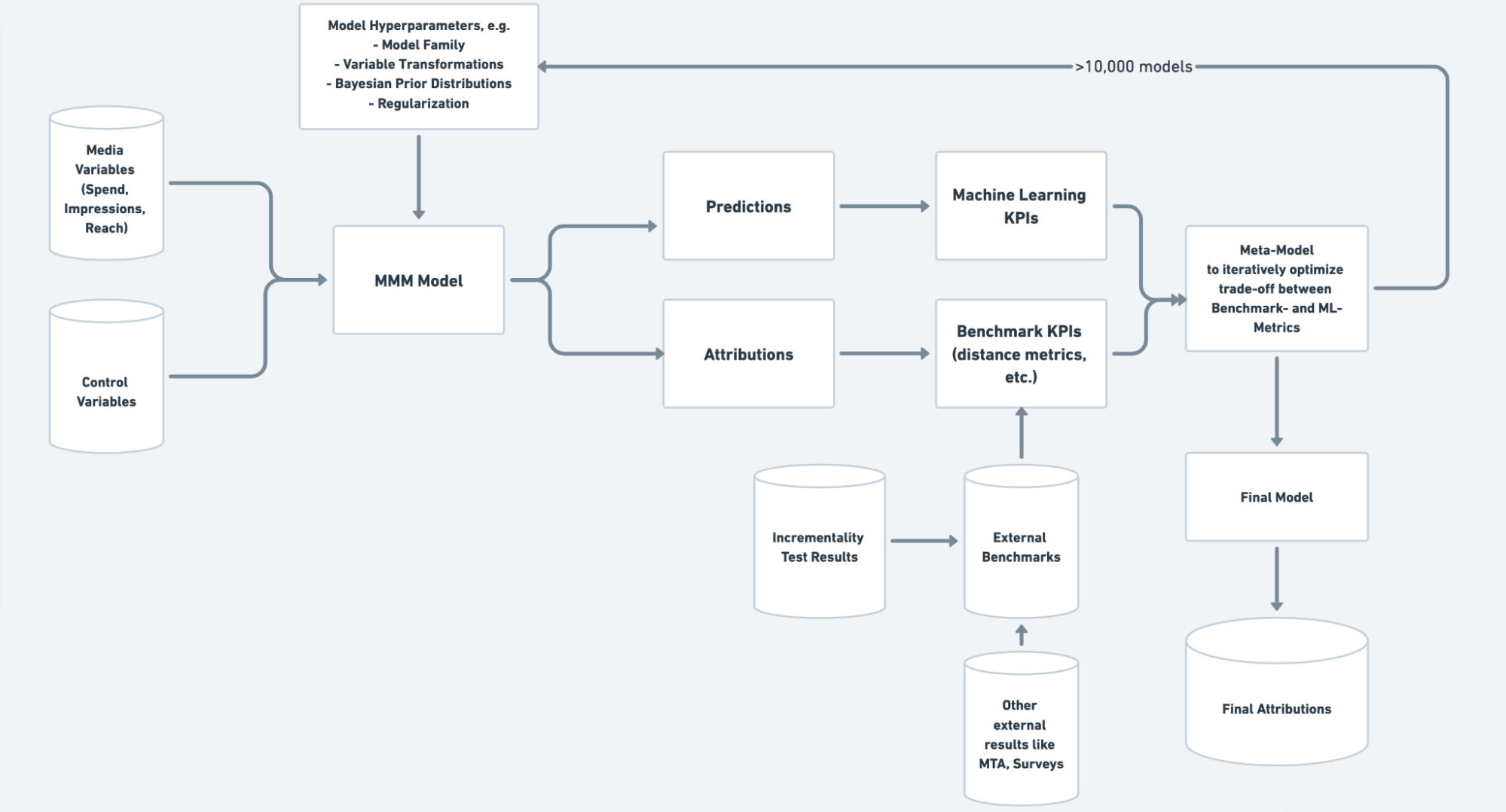

Anyscale and Ray remove several traditional AI/ML limitations. First, they ensure we are not limited by any one algorithm's or package's limitations: we can a) fit any parameter we want at scale, and b) include any secondary objective (next to model fit) to enable advanced calibration. With Anyscale and Ray we can find the Pareto-optimal combinations of hyperparameters (such as adstock, saturation, or specific hyperpriors) that optimize the tradeoff between “machine learning accuracy” and “attribution accuracy”. This enables us to get closer to the true causal impact of media investments and helps our clients make better marketing decisions.
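As an illustration of what “Pareto-optimal” means with two objectives, the following sketch filters a set of finished trials down to those that no other trial beats on both model fit and calibration at once. The trial records and the field names `fit_error` and `calibration_error` are hypothetical; in practice this selection is handled by the multi-objective search tooling rather than written by hand.

```python
def pareto_front(trials):
    """Keep trials that are not dominated; lower is better for both objectives."""
    front = []
    for t in trials:
        dominated = any(
            o["fit_error"] <= t["fit_error"]
            and o["calibration_error"] <= t["calibration_error"]
            and (
                o["fit_error"] < t["fit_error"]
                or o["calibration_error"] < t["calibration_error"]
            )
            for o in trials
        )
        if not dominated:
            front.append(t)
    return front


# Hypothetical results: fit_error stands for "machine learning accuracy",
# calibration_error for "attribution accuracy" (distance to an external benchmark).
trials = [
    {"name": "a", "fit_error": 0.10, "calibration_error": 0.40},
    {"name": "b", "fit_error": 0.20, "calibration_error": 0.20},
    {"name": "c", "fit_error": 0.25, "calibration_error": 0.30},  # worse than b on both
    {"name": "d", "fit_error": 0.40, "calibration_error": 0.05},
]
front_names = [t["name"] for t in pareto_front(trials)]
# front_names == ["a", "b", "d"]
```

Every trial on the front represents a different tradeoff; choosing among them remains a modeling decision.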

With the Anyscale Platform we can now also easily scale our models without requiring complex, costly infrastructure, iterate fast, run experiments and unify the developer experience from development to moving workloads to production. Given our complex decision surface, and our complex algorithms that can take several minutes to fit, we needed a solution that enabled us to run as many iterations as possible in a reasonable “wall clock” time. The Anyscale Platform, with its capabilities and the underlying Ray platform, provided us with the perfect platform to meet our AI/ML needs.

This is what our Ray / Anyscale workflow currently looks like:

At each iteration, we try a different combination of hyperparameters (such as adstock, saturation-related parameters, which model to use, what (hyper-) priors to set…). Then we fit the model, calculate predictions and attributions, record the respective ML KPIs and secondary objectives (such as distance from the model’s attributions to an incrementality test). Then the meta-learner provided by Ray suggests a new set of parameters based on the success of the previous trial.
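The loop described above can be sketched in plain Python. This is a toy version under loud assumptions: a single media channel, synthetic data, a closed-form least-squares fit, and seeded random sampling standing in for Ray's search algorithm (which, unlike random sampling, proposes new parameters based on earlier results). All names and numbers are hypothetical.

```python
import random


def adstock(spend, decay):
    carried, out = 0.0, []
    for x in spend:
        carried = x + decay * carried
        out.append(carried)
    return out


def run_trial(params, spend, sales, experiment_share):
    """Fit sales ~ base + beta * adstock(spend) and report both objectives."""
    x = adstock(spend, params["decay"])
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(sales) / n
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    cov = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, sales))
    beta = max(0.0, cov / var_x)  # constraint: media effect must not be negative
    base = mean_y - beta * mean_x
    pred = [base + beta * xi for xi in x]
    fit_error = sum((p - y) ** 2 for p, y in zip(pred, sales)) / n  # ML KPI
    media_share = beta * sum(x) / sum(pred)  # share of sales attributed to media
    return {
        "params": params,
        "fit_error": fit_error,
        "calibration_error": abs(media_share - experiment_share),  # secondary objective
    }


# Synthetic data: sales generated with a known decay of 0.6.
spend = [100.0, 0.0, 40.0, 0.0, 0.0, 80.0, 20.0, 0.0, 60.0, 0.0, 0.0, 30.0]
true_adstock = adstock(spend, 0.6)
sales = [200.0 + 2.0 * a for a in true_adstock]
# Stand-in for an incrementality test result: the true media share of sales.
experiment_share = 2.0 * sum(true_adstock) / sum(sales)

rng = random.Random(0)
results = []
for _ in range(200):
    params = {"decay": rng.uniform(0.0, 0.9)}  # a real searcher would use past results
    results.append(run_trial(params, spend, sales, experiment_share))

best_fit = min(results, key=lambda r: r["fit_error"])
```

Because each `run_trial` call is independent of the others once its parameters are chosen, trials of this kind are natural candidates for running in parallel across a cluster.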

Results and Benefits

With over 10,000 iterations to run, and iterations taking on average 60 seconds, without the Anyscale Platform it would take us roughly a week per cycle to run these iterations sequentially. With the Anyscale Platform we have been able to reduce that to under 3 hours. The impact and results we are experiencing enable us and our clients to benefit greatly from always-on Media Mix Modeling and greatly minimize time-to-insights.
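The arithmetic behind these figures can be checked in a few lines. The implied degree of parallelism is a back-of-the-envelope estimate that assumes perfect scaling with no scheduling or startup overhead, which real clusters will not fully achieve.

```python
import math

iterations = 10_000
seconds_per_iteration = 60

sequential_hours = iterations * seconds_per_iteration / 3600  # about 166.7 hours
sequential_days = sequential_hours / 24  # about 6.9 days, i.e. roughly a week

target_hours = 3
# Minimum number of trials that must run concurrently to finish within the target,
# assuming ideal scaling (an assumption, not a measured figure).
min_concurrent_trials = math.ceil(sequential_hours / target_hours)  # 56
```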