Ray Summit

A Growing Ecosystem of Scalable ML Libraries on Ray

Wednesday, June 23, 1:35 PM PDT

Amog Kamsetty, Software Engineer, Anyscale

The open-source Python ML ecosystem has grown rapidly in recent years. As these libraries mature, demand is increasing for distributed execution frameworks that let programmers handle large amounts of data and coordinate computational resources. In this talk, we discuss our experiences collaborating with the open-source Python ML ecosystem as maintainers of Ray, a popular distributed execution framework. We will cover how distributed computing has shaped the way machine learning is done, and go through case studies of how three popular open-source ML libraries (Horovod, Hugging Face Transformers, and spaCy) benefit from Ray for distributed training.

Speakers

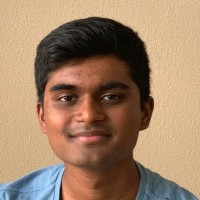

Amog Kamsetty

Software Engineer, Anyscale

Amog Kamsetty is a software engineer at Anyscale, where he builds distributed training libraries and integrations on top of Ray. He previously completed his MS at UC Berkeley, working with Ion Stoica on machine learning for database systems.