Introducing the Anyscale Databricks Connector

Introducing the Anyscale Databricks connector. Simple Data Transfer and Efficient AI Workflows with Anyscale and Databricks.

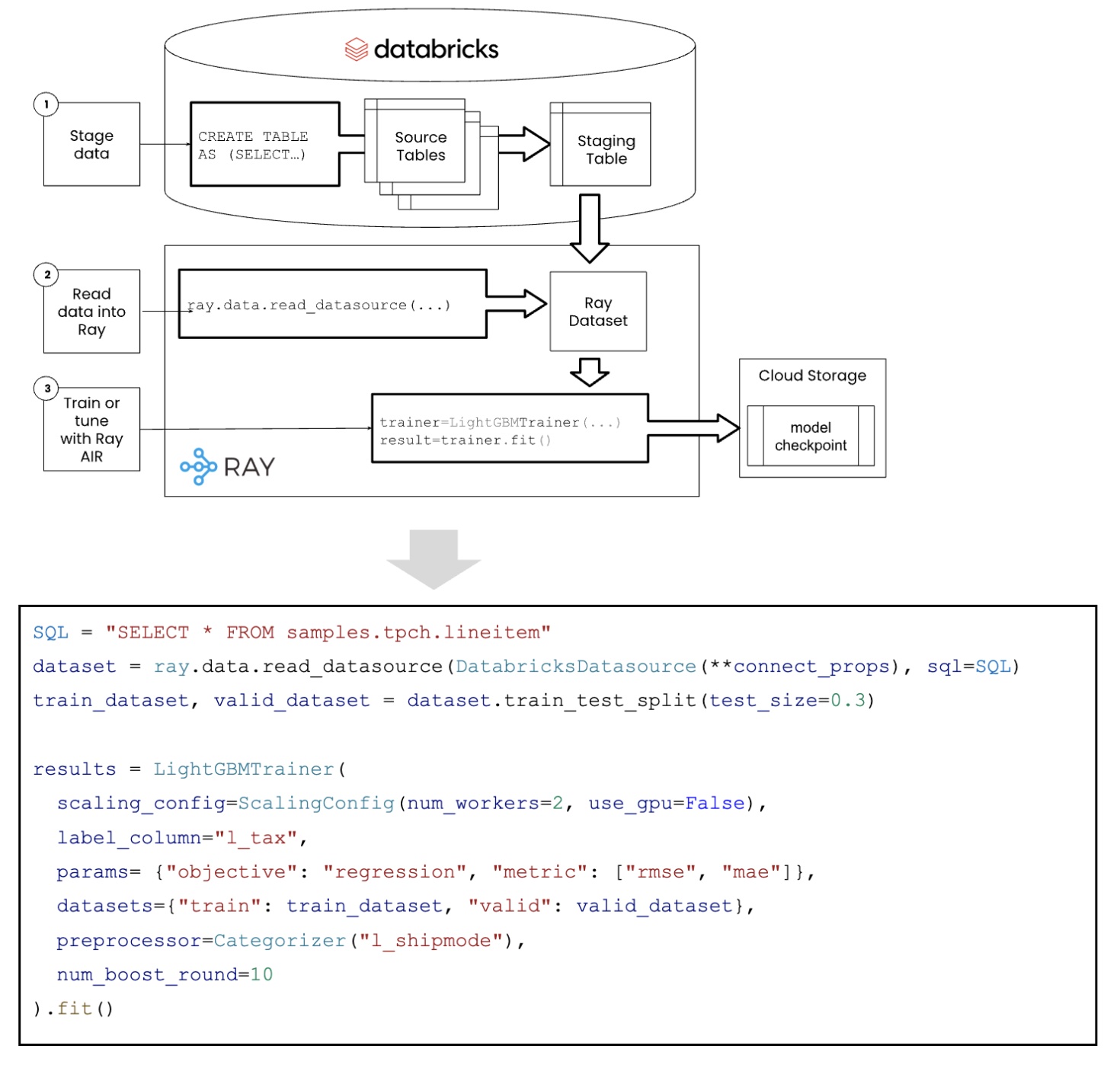

The Anyscale Databricks Connector is a new Ray Datasets capability that facilitates easy data transfer between Databricks clusters and Anyscale hosted Ray clusters. The connector makes it easy for Data Scientists using Anyscale to leverage their Databricks data lake during machine learning and discovery. It also enables a simpler way for machine learning engineers to create end-to-end workloads by allowing the entire ML pipeline to be executed within a single Python script. By taking advantage of the highly-scalable nature of Ray and Ray Datasets, machine learning workloads such as training, tuning and batch serving jobs can be executed more quickly and at lower cost, all while taking advantage of the latest advancements in AI through other Ray integrations with Hugging Face, XGBoost, LightGBM and many AI frameworks and libraries.

LinkKey Benefits

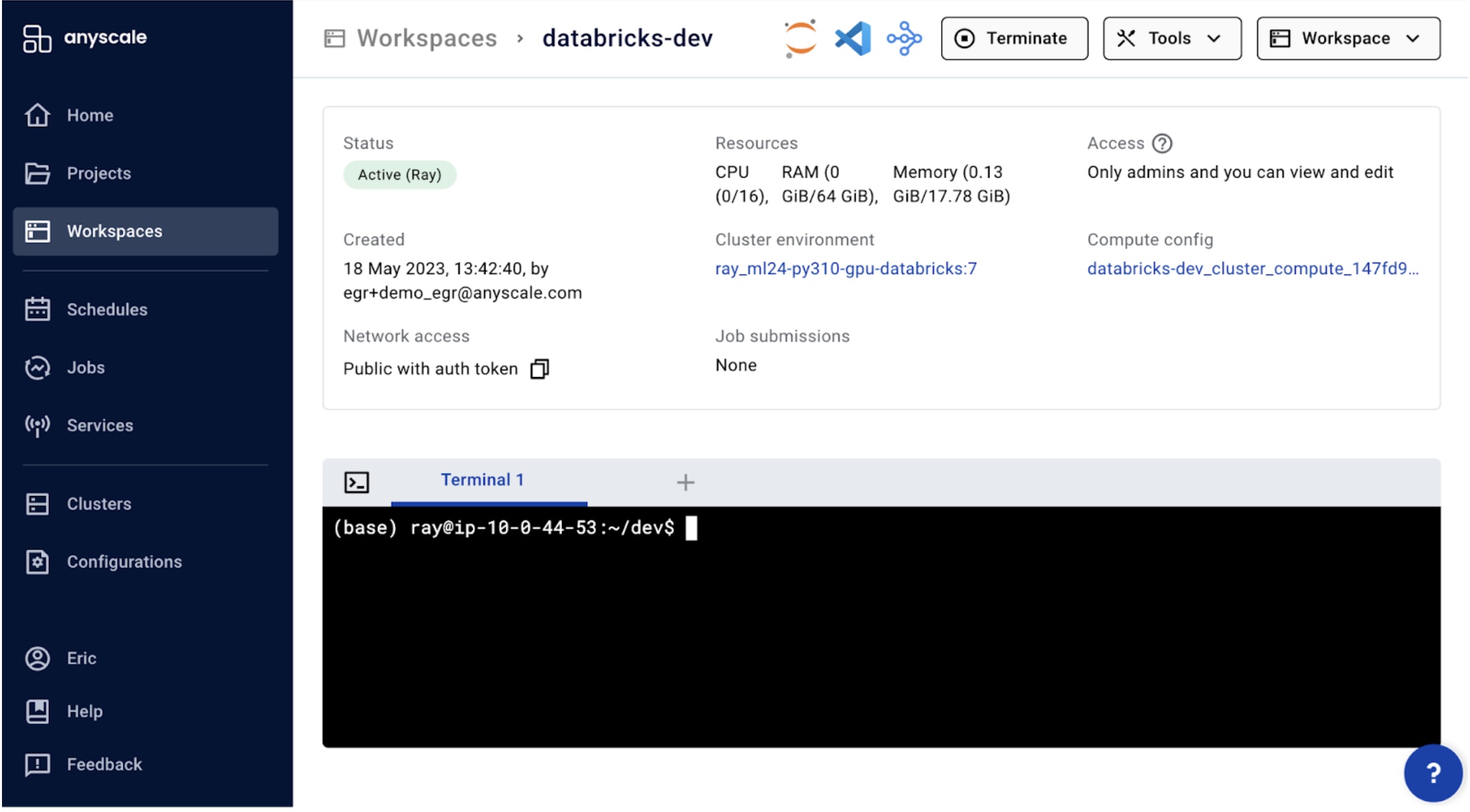

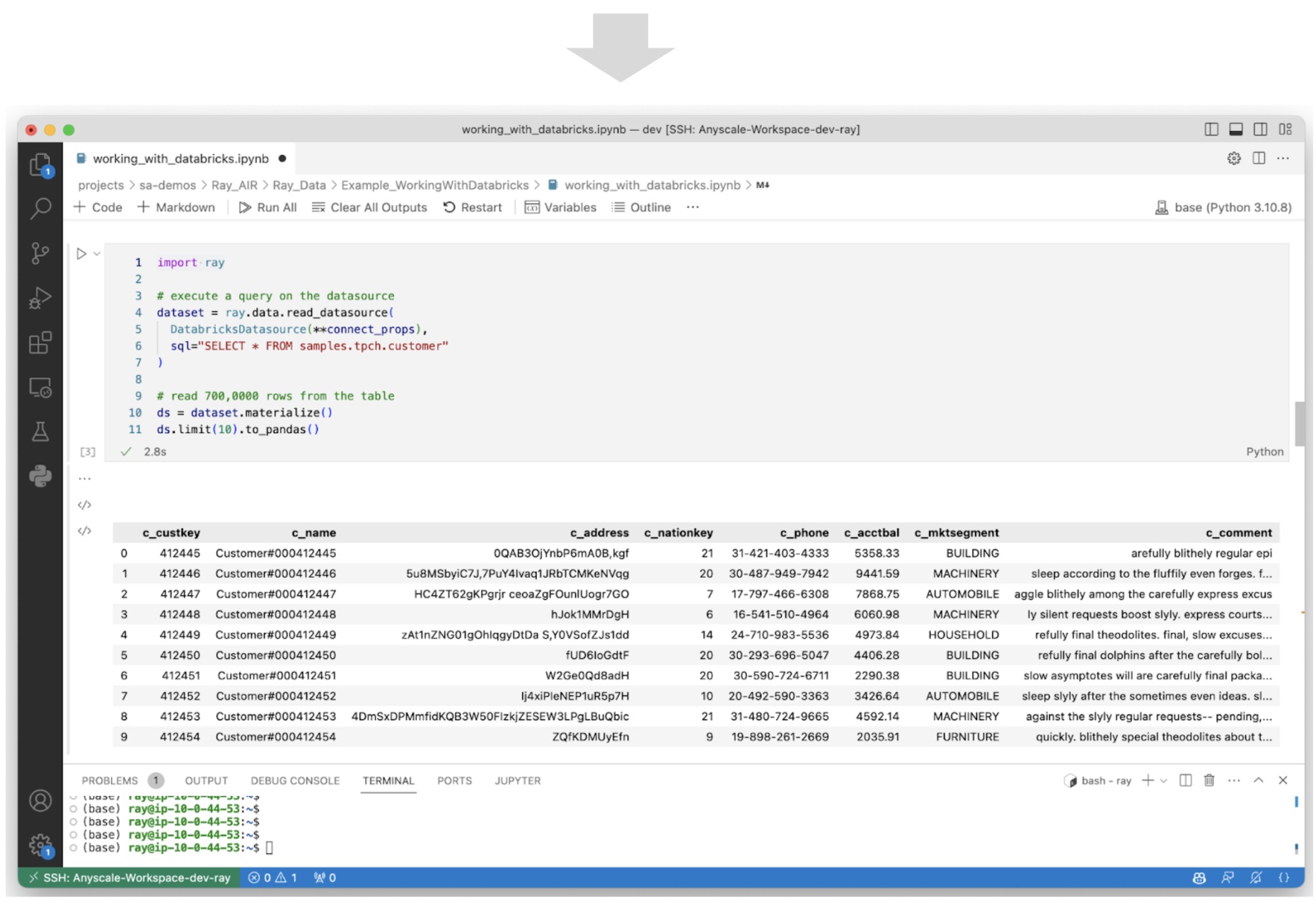

Simple access to data - Access Databricks data through SQL queries within Anyscale Workspaces Visual Code or JupyterHub environments, empowering data science discovery and development.

Improved data security and governance - Ensure data security and governance by directly copying data from Databricks data lakes to Ray clusters, eliminating the need for intermediate steps and maintaining separate controls over sensitive data. All data is encrypted in transit and at rest.

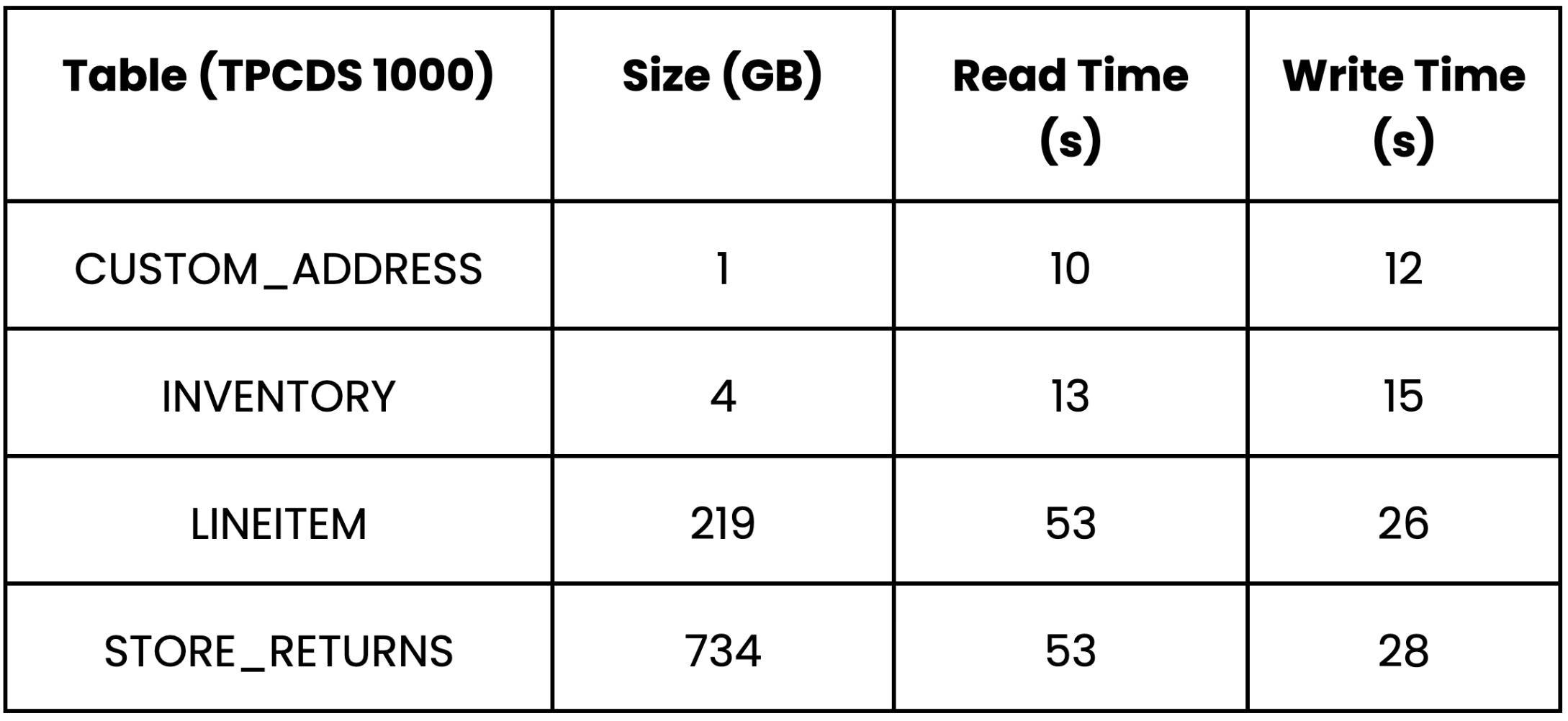

Highly-scalable data exchange - Take advantage of the parallel read and write capabilities of Ray Datasets, enabling the exchange of terabytes of data in minutes. This capability significantly speeds up data-intensive operations and enhances scalability while reducing job run times and overall costs.

Simplified workload development - Simplify machine learning workflows by consolidating all the necessary logic into a single script. This script can query features, train and tune models, and score and materialize results back into the data lake, streamlining the entire process.

Unlock the latest AI Innovations - Leverage the power of Databricks for querying and joining data, while benefiting from the scalability and simplicity of Ray AIR* for machine learning and AI development. Ray AIR* provides integration to the latest AI innovations such as pretrained Hugging Face language models.

LinkSimple, Secure and Scalable Data Access

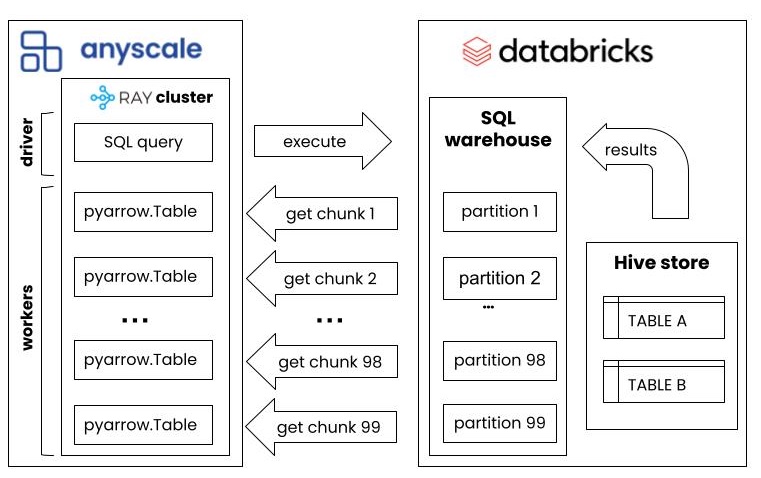

Using the Anyscale Databricks connector, large datasets can be queried from a Databricks SQL warehouse, and quickly transferred into a Ray Dataset distributed across the Ray cluster. The Databricks connector reads query results in chunks of data, in parallel across the Ray cluster. The size of the dataset and the speed at which it can be transferred scales based on the size of your Ray cluster and the number of simultaneous requests the underlying Databricks SQL warehouse supports.

With Anyscale Databricks connectors, parallel reads and writes and overall speed will scale with the size of the Ray cluster. The benchmarks for reading and writing demonstrate how the cluster scales out to support larger data sets.

LinkSimplified ML Development

Using the Databricks connector from within an Anyscale Workspaces, Data Scientists and Machine Learning Engineers have a unified experience while developing workloads. The data query, feature engineering, training, tuning and inference can all be executed within a single Python script that scales across a Ray cluster. Ray integrates with the latest AI and Machine learning libraries, enabling the most advanced ML workloads that work with 3rd party libs like Hugging Face, XGBoost, LightGBM, and most PyTorch and TensorFlow model architectures.

LinkUnlock new use cases with AI and ML

Anyscale and Ray integrate with most open source AI and ML libraries, enabling the latest innovation in AI to be applied to Databricks data. Ray makes working with Hugging face, XGBoost, LightGBM, TensorFlow and PyTorch and SciKit Learn a unified experience, with the added benefits of scaling with distributed training, tuning and serving.

A typical ML workload to train a LightGBM can be implemented in 20 lines of code.

LinkWant to see for yourself? Request a Trial today!

*We are sunsetting the "Ray AIR" concept and namespace starting with Ray 2.7. The changes follow the proposal outlined in this REP.

Table of contents

Sign up for product updates

Recommended content

Anyscale on Azure Enters Public Preview: Build and Deploy AI at Scale Inside Your Own Azure Tenant

Read more

Reimagining ML Operations with Agent Skills: a new maturity model for on-call

Read more