Latest Events

Webinar

Building a Multimodal Video Processing Pipeline with Ray

Thursday, May 28

8:30 AM PDT | 11:30 AM EDT | 5:30 PM CEST

Webinar

Multimodal data: Architecting pipelines that don’t break at scale

Thursday, May 14

8:30 AM PDT | 11:30 AM EDT | 5:30 PM CEST

Ray Meetup

Hands-on Workshop: Scaling Vision-Language-Action (VLA) Models with Ray

Thursday, April 30

9 AM EDT

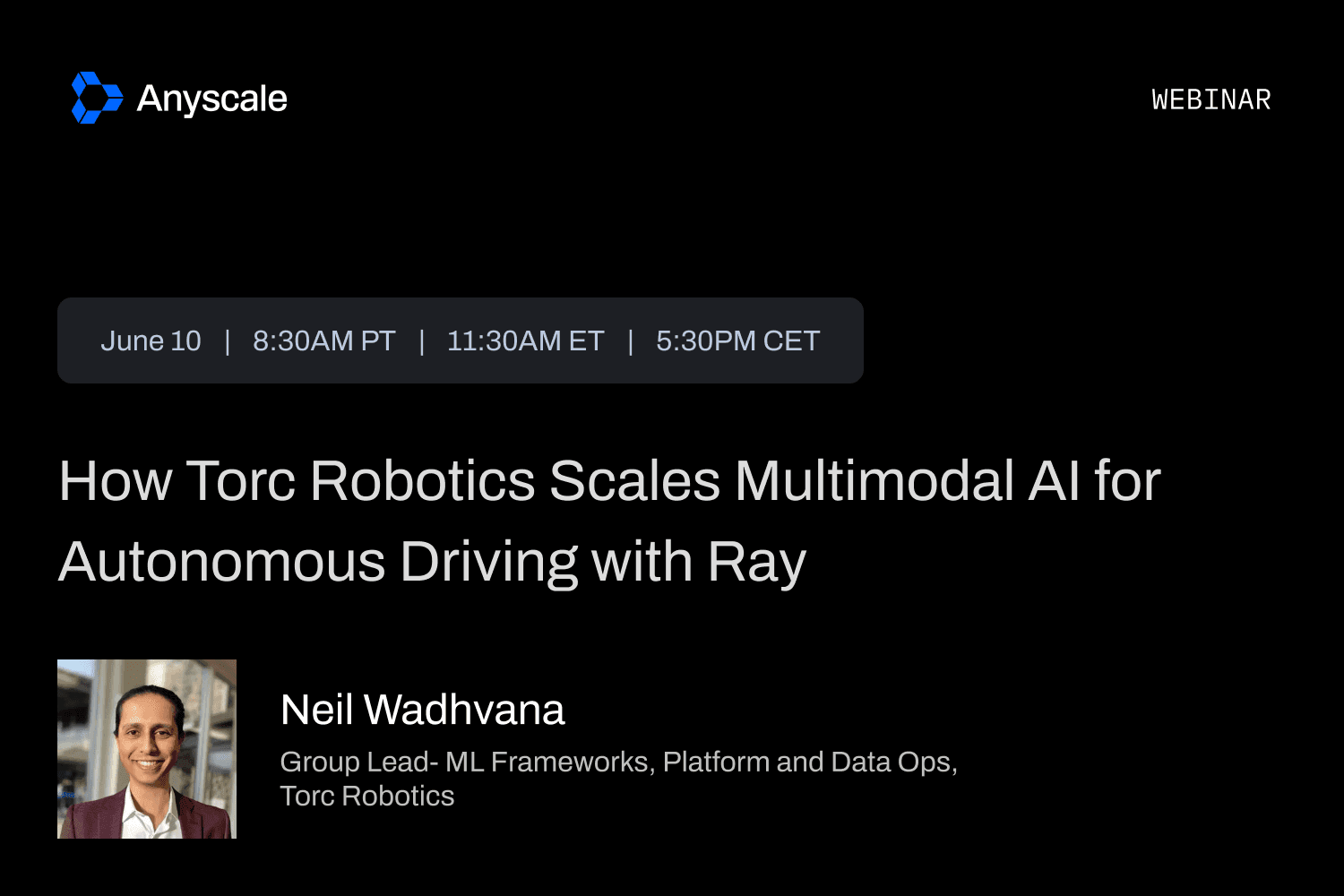

June 10, 2026

How Torc Robotics Scales Multimodal AI for Autonomous Driving with Ray

8:30 AM PDT | 11:30 AM EDT | 5:30 PM CEST

Ray Roadshow | June 2 - 3, 2026

Microsoft Build

Ray Roadshow | June 2, 2026

Ray Day London

9 AM CEST

Webinar | May 28, 2026

Building a Multimodal Video Processing Pipeline with Ray

8:30 AM PDT | 11:30 AM EDT | 5:30 PM CEST

Ray Roadshow | May 21, 2026

Ray Day NYC

9 AM EDT

Ray Roadshow | May 19, 2026

Ray Workshop: Boston

12 PM EDT

Webinar | May 14, 2026

Multimodal data: Architecting pipelines that don’t break at scale

8:30 AM PDT | 11:30 AM EDT | 5:30 PM CEST

Ray Meetup | April 30, 2026

Hands-on Workshop: Scaling Vision-Language-Action (VLA) Models with Ray

9 AM EDT

Industry Event | April 22 - 24, 2026

Anyscale at Google NEXT '26

Ray Meetup | March 31, 2026

Exa-Scale Search with Lance and Ray

6 PM PDT

Ray Roadshow | March 31, 2026

Ray Day: Seattle

9 AM PDT

Ray Meetup | March 17, 2026

AI Developer Meetup at NVIDIA GTC

7:30 PM PDT