How Notion cuts embedding costs by 80% and other stories on scaling AI with Ray from Salesforce, Uber, and more…

Starting Ray Day Seattle with an opening keynote

Ray Day Seattle was the first stop on Anyscale's 2026 Ray on the Road, an 8-city, 3-country series aimed at raising awareness of Ray and bringing the community closer together for in-person events.

The event brought practitioners and Ray experts from Anyscale to The Westin Seattle for a full day of AI builder stories, hands-on workshops, and a technical roundtable discussing what it actually takes to run AI in production.

From the community: Ray user talks

Ray User Talks speakers from left to right: Peng Zhang (Uber), Charlie Chen (Apple), Haocheng Bian (Apple), Mickey Liu (Notion), Chi Wang (Salesforce), Jiwei Cao (Salesforce)

Robert Nishihara, co-creator of Ray and co-founder of Anyscale, opened the day by laying out why the infrastructure problem in AI keeps getting harder: the volume of multimodal data processing, reinforcement learning, and multi-node LLM inference workloads keeps growing. Following the keynote, the heart of the morning was four back-to-back talks from teams running AI at scale using Ray.

Each one landed on the same core theme from a different angle: the existing tools ran out of road, and Ray was what made the next phase possible.

From Spark on EMR to Ray: Notion's embedding pipeline overhaul

Presented by Mickey Liu, Software Engineer, Search Platform, Notion

Mickey walked through the migration from a 3-step Spark pipeline on EMR to a single Ray-powered job on Anyscale. What started as the engine behind a Q&A feature in 2023 now powers all of Notion AI and custom agents. The old setup relied on Spark for data chunking and writing to a vector store, plus a third-party API for embedding generation. This meant double compute costs, third-party API rate limits, and a painful developer experience, such as debugging failures across tools because driver and executor logs were not persisted in YARN. The new pipeline streams data from Kafka into a Ray cluster that handles CPU chunking, GPU embedding generation, and vector store writes: an end-to-end pipeline in one engine with no intermediate S3 handoffs. The results: 80%+ cost reduction on embeddings, 10x query latency improvement, and 3 jobs per region collapsed into 1, all with advanced workload observability available in the Anyscale platform.
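To make the "one engine, no intermediate handoffs" idea concrete, here is a minimal plain-Python sketch of a chunk, embed, write flow in which each stage streams straight into the next. It is not Notion's code and it does not use the Ray Data API: the function names, the in-memory list standing in for a vector store, and the toy two-number "embedding" are all assumptions made for illustration.

```python
from itertools import islice


def chunk_documents(docs, max_words=4):
    """CPU stage: split each (doc_id, text) pair into fixed-size word chunks."""
    for doc_id, text in docs:
        words = text.split()
        for start in range(0, len(words), max_words):
            yield doc_id, " ".join(words[start:start + max_words])


def batched(items, batch_size):
    """Group a stream of items into lists of at most batch_size."""
    iterator = iter(items)
    while batch := list(islice(iterator, batch_size)):
        yield batch


def embed_batch(batch):
    """Stand-in for the GPU stage: a toy vector of [char count, word count].

    A real pipeline would call an embedding model here (assumption).
    """
    return [
        (doc_id, chunk, [float(len(chunk)), float(len(chunk.split()))])
        for doc_id, chunk in batch
    ]


def run_pipeline(docs, vector_store, batch_size=2):
    """Stream chunks through embedding into the store, with no staging step."""
    for batch in batched(chunk_documents(docs), batch_size):
        vector_store.extend(embed_batch(batch))
    return vector_store


# Hypothetical input documents and an in-memory "vector store".
docs = [("a", "one two three four five"), ("b", "six seven")]
store = run_pipeline(docs, vector_store=[])
```

Because every stage is a generator feeding the next, nothing is written to intermediate storage between chunking and the final write, which is the property the talk attributed to the single Ray job.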

Want to go deeper? Read Mickey and his team’s full writeup: Two Years of Vector Search at Notion: 10x Scale, 1/10th Cost

How Salesforce summarizes documents 200K tokens long with a P95 latency under 15 seconds

Presented by Chi Wang, Director of AI Platform, and Jiwei Cao, Lead Engineer, AI Cloud, Salesforce

Salesforce AI Cloud handles document enrichment with AI at enterprise scale: take a long document, run it through a model, and return a concise summary. Simple in concept, but punishing in practice. Their production summarization pipeline processes documents whose content can run up to 200K tokens, roughly the length of a short novel. The service runs a 20B-parameter Mixture of Experts model and must return results with a P95 latency under 15 seconds, meaning 95% of requests complete within that window.
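For readers less familiar with percentile targets, here is a small sketch of how a P95 check could be computed with the nearest-rank method. The latency samples are made up for illustration and the helper name is hypothetical; Salesforce's actual measurement setup was not described in the talk.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Return the smallest sample that at least pct% of samples do not exceed."""
    if not samples:
        raise ValueError("samples must be non-empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based nearest rank
    return ordered[rank - 1]


# Hypothetical request latencies in seconds (not real measurements).
latencies_s = [
    4.2, 5.1, 6.3, 7.0, 7.4, 8.8, 9.1, 9.9, 10.5, 11.2,
    11.8, 12.0, 12.6, 13.1, 13.3, 13.9, 14.2, 14.6, 14.8, 21.7,
]

p95 = percentile_nearest_rank(latencies_s, 95)
meets_target = p95 < 15.0  # the 15-second P95 target from the talk
```

In this made-up sample one request takes 21.7 seconds, yet the P95 target is still met, because the target only constrains the fastest 95% of requests.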

The team used Ray to chunk long documents and summarize them in parallel across a distributed actor pool running vLLM, then merge the results into a single final output. The team ran scaling experiments across GPU configurations directly on Ray, landing on data parallelism across 1-2 GPUs as the best latency-throughput tradeoff. Ray’s ability to keep data processing, model inference, and application logic in one unified environment reduced the time from POC to production significantly.
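The chunk, summarize in parallel, then merge pattern described here can be sketched in a few lines. This version deliberately swaps in Python's standard-library thread pool for the Ray actor pool and a trivial placeholder for the vLLM call, so it only illustrates the control flow; the function names, chunk size, and "keep the first three tokens" summarizer are assumptions, not Salesforce's implementation.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(tokens, chunk_size):
    """Split a token list into consecutive chunks of at most chunk_size."""
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


def summarize_chunk(chunk):
    """Placeholder for a model call: keep the first three tokens (assumption)."""
    return chunk[:3]


def summarize_document(tokens, chunk_size=8, workers=4):
    """Summarize chunks in parallel, then merge partial summaries in order."""
    chunks = split_into_chunks(tokens, chunk_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Executor.map returns results in input order, so the merged
        # summary follows the original document order.
        partials = list(pool.map(summarize_chunk, chunks))
    return [token for partial in partials for token in partial]


# Hypothetical 20-token document.
document = [f"t{i}" for i in range(20)]
summary = summarize_document(document)
```

In a setup like the one described in the talk, each worker would presumably be a long-lived actor holding a loaded model, which avoids reloading weights per chunk; a thread pool does not capture that, so treat this as a picture of the data flow only.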

For a deeper look at how Ray Data and vLLM combine for large-scale batch inference, check out: Ray Data LLM Enables 2x Throughput Over vLLM's Synchronous Engine.

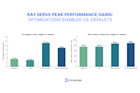

How Uber improved GPU utilization by 20% and cut training time in half with Ray

Presented by Peng Zhang, Engineering Manager, Uber

Peng traced the evolution of Uber's Michelangelo ML platform, which powers Uber Rides and Uber Eats. Their stack moved from TensorFlow/Horovod, to Ray alongside PyTorch, to DeepSpeed and model parallelism for 1B-10B+ parameter models today. Two Ray investments stood out: a heterogeneous cluster design separating CPU data-loading nodes from GPU training nodes (improving GPU utilization by up to 20% and cutting training time by around 50% in select pipelines), and a Native Transform initiative replacing Spark-based feature transformers with Ray Data and PyTorch, halving transform time. Open challenges around cluster stability and OOM failures remain, with Uber actively experimenting with Anyscale Runtime.

Read more on how Uber uses Ray across their business: How Uber Uses Ray to Optimize the Rides Business.

Scaling Foundation Model training across distributed infrastructure with Ray

Presented by Charlie Chen, Senior ML Engineer, and Haocheng Bian, Principal ML Engineer, Apple

Charlie walked through the unique demands of foundation model training: massive unstructured data, billion-plus parameter counts, and sparse architectures like Mixture of Experts (MoE). Two problems make this hard with traditional processing engines: data pipelines and training frameworks run in separate environments with no shared context, and no single GPU can fit the memory footprint of these workloads. Ray solves both by providing a unified Python environment across data processing, training, and inference, with distributed workers handling parallelism across many machines. Charlie also covered how Ray fits multi-model RL workflows like PPO and GRPO, and highlighted RLlib for built-in RL support.

Curious about the open-source RL ecosystem powering this kind of work? Check out: Open Source RL Libraries for LLMs.

Getting Hands-On and Looking Ahead

Suman Debnath leading the Ray workshop

In the afternoon, Suman Debnath, Technical Lead for ML, and Rahul Samir Mehta, Manager, Member of Technical Staff, both from Anyscale, led a workshop for developers and ML engineers new to Ray or looking to deepen their skills. Structured across four progressive modules, the session moved from Ray Core fundamentals (tasks, actors, object store) to scalable data pipelines with Ray Data, distributed training with FSDP and Ray Train, and production model serving with Ray Serve. Each module included extra notebooks for self-study, giving attendees material to keep building with after the session.

Next Stops

Seattle was just the beginning. Ray on the Road is designed to bring this community together in the cities where the work is happening, so practitioners can learn from each other and help shape what Ray and Anyscale build next.

The Ray on the Road series continues across a few more cities:

In addition, at each stop a select group of customers joins an invite-only roundtable hosted by Anyscale's field team for an early look at the Anyscale product roadmap for the coming quarters. If you are interested in joining a future roundtable, reach out to your Anyscale contact.