Major upgrades to Ray Serve: Online Inference with 88% lower latency and 11.1x higher throughput

Introduction

Ray Serve makes it easy to create scalable, composable AI applications in Python, backed by the power and performance of Ray Core for actor management and resource placement.

Ray Serve is popular for development, iteration and deployment of AI applications such as LLM services. However, some users who have latency-sensitive requirements have had issues with Ray Serve at scale. In partnership with Google Kubernetes Engine (GKE), we have identified several areas where Ray Serve struggles to maintain peak performance requirements as demand scales.

Today, we’re announcing major improvements to the Ray Serve framework that make Ray Serve even more performant and unlock new SLAs for latency and throughput. The key features are:

HAProxy ingress, a highly-optimized, battle-tested open-source load balancer written in C

Direct gRPC data-plane communications between Ray Serve replicas

By enabling these features, we achieve throughput and latency improvements on example applications such as recommendation systems and LLM inference at scale. We believe this is a critical improvement in enabling Ray Serve to be the standard framework for developing, testing, and deploying AI applications at production scale.

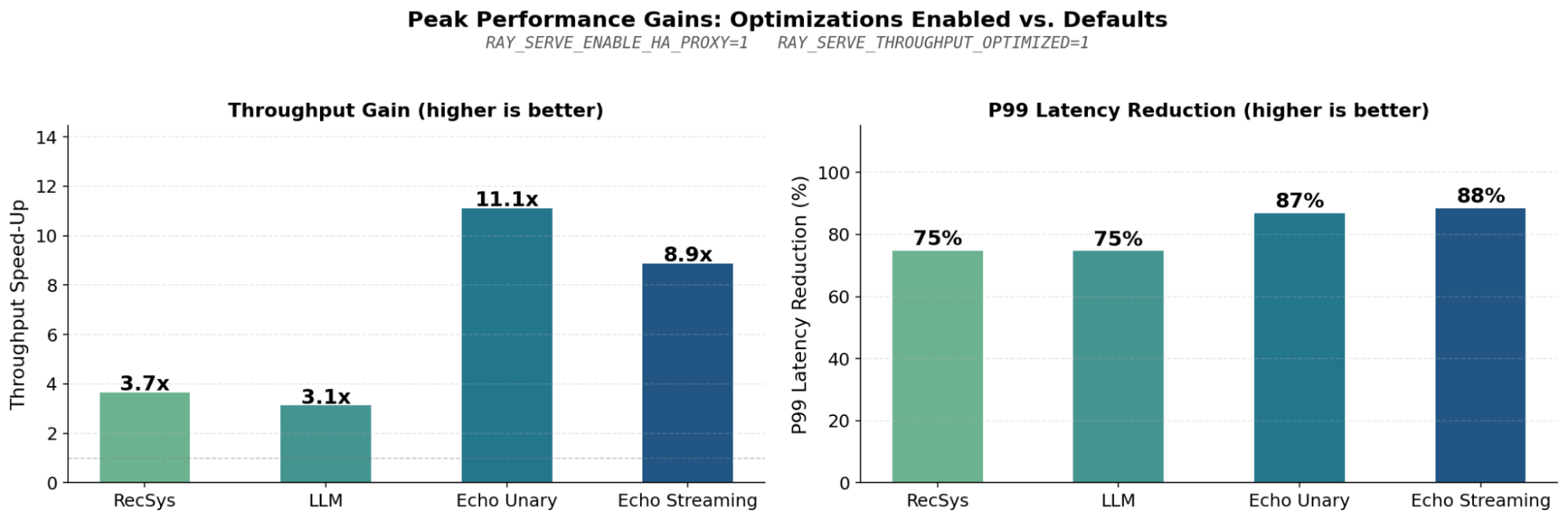

Figure (1): This figure shows the relative performance gains between Ray Serve with optimizations enabled and Ray Serve with current default settings. The “RecSys” and “LLM” results are covered in the Case Study sections. The “Echo Unary” and “Echo Streaming” results come from the last plot in the Deep Dive section, showing the combined effects of all optimizations. The Ray Serve application code chains two no-op deployments for the unary case, and streams back random bytes through two deployments in the streaming case.

Both optimizations will be available starting in Ray 2.55+ and are enabled via environment variables at cluster initialization. See the Ray Serve performance tuning docs for usage guides.

In this post we cover:

End-to-end results on recommendation system and LLM inference use cases

Technical deep-dives and ablations breaking down each optimization that contributed to these improvements

Case Study: Recommendation Systems

Recommendation systems are a natural fit for Ray Serve's model composition pattern: heterogeneous compute composed into a single application spanning CPU and GPU nodes. This is precisely the workload where interdeployment overhead compounds.

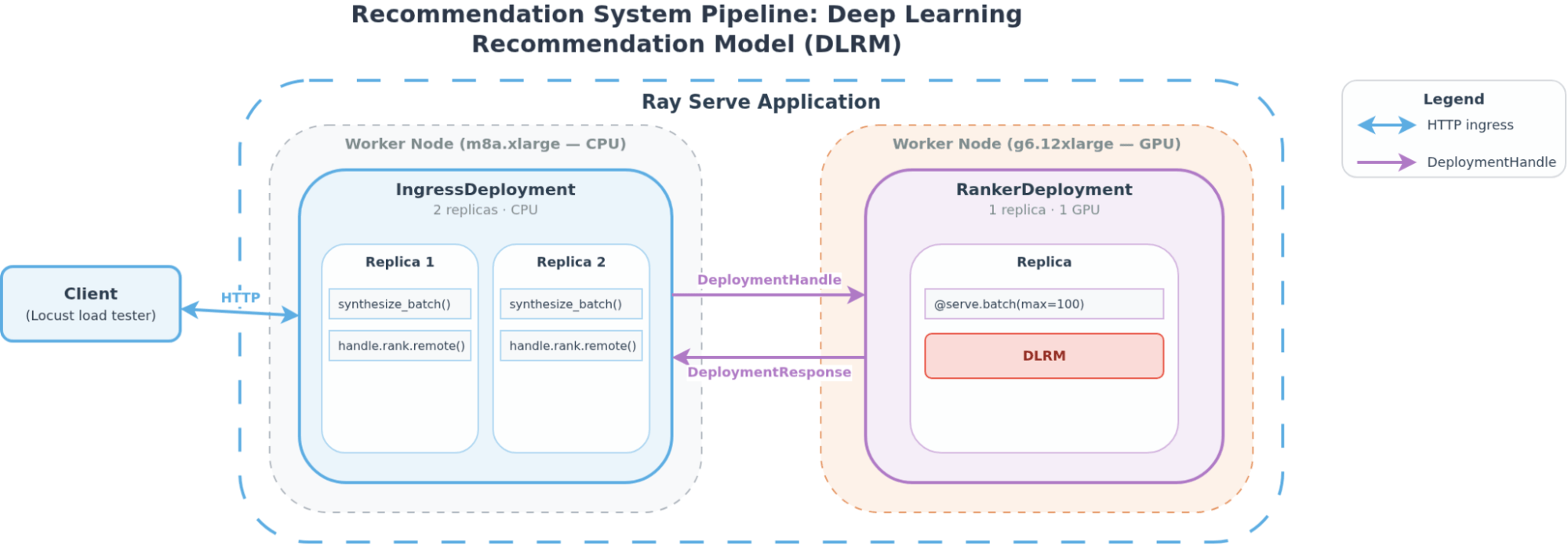

We benchmarked a deep learning recommendation model (DLRM) pipeline with two composed deployments. Figure (2) shows the architecture diagram of the system. It consists of:

An ingress deployment that receives HTTP requests, synthesizes a batch of 32 candidate items, and calls the ranker deployment.

A ranker deployment that batches up to 100 concurrent requests (using @serve.batch), runs a neural network (MLP + embedding lookups, dot product, MLP) and returns scores.
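The ranker's batching step can be illustrated without Ray. The sketch below is a standard-library stand-in for dynamic request batching, not Ray Serve's `@serve.batch` implementation; `MicroBatcher`, `score_batch`, and the timeout value are hypothetical names and settings chosen for illustration.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional


class MicroBatcher:
    """Groups concurrent calls into batches before invoking a batch handler."""

    def __init__(
        self,
        handler: Callable[[List[float]], Awaitable[List[float]]],
        max_batch_size: int = 100,
        wait_timeout_s: float = 0.01,
    ) -> None:
        self._handler = handler
        self._max_batch_size = max_batch_size
        self._wait_timeout_s = wait_timeout_s
        # Created lazily so the queue binds to the running event loop.
        self._queue: Optional[asyncio.Queue] = None
        self._worker: Optional[asyncio.Future] = None

    async def submit(self, item: float) -> float:
        """Enqueue one request and wait for its individual result."""
        if self._queue is None:
            self._queue = asyncio.Queue()
            self._worker = asyncio.ensure_future(self._run())
        future = asyncio.get_running_loop().create_future()
        self._queue.put_nowait((item, future))
        return await future

    async def _run(self) -> None:
        loop = asyncio.get_running_loop()
        while True:
            # Block for the first item, then fill the batch until it is
            # full or the wait timeout expires.
            batch = [await self._queue.get()]
            deadline = loop.time() + self._wait_timeout_s
            while len(batch) < self._max_batch_size:
                remaining = deadline - loop.time()
                if remaining <= 0:
                    break
                try:
                    batch.append(await asyncio.wait_for(self._queue.get(), remaining))
                except asyncio.TimeoutError:
                    break
            try:
                results = await self._handler([item for item, _ in batch])
                for (_, future), result in zip(batch, results):
                    future.set_result(result)
            except Exception as exc:
                # Propagate a handler failure to every caller in the batch.
                for _, future in batch:
                    if not future.done():
                        future.set_exception(exc)


batch_sizes: List[int] = []


async def score_batch(batch: List[float]) -> List[float]:
    """Hypothetical model call: one result per input, in order."""
    batch_sizes.append(len(batch))
    return [x * 2.0 for x in batch]


async def demo(num_requests: int, max_batch_size: int) -> List[float]:
    batcher = MicroBatcher(score_batch, max_batch_size=max_batch_size, wait_timeout_s=0.05)
    return await asyncio.gather(*(batcher.submit(float(i)) for i in range(num_requests)))
```

The trade-off is the usual one for batching: a larger `max_batch_size` or longer wait improves accelerator utilization, while a shorter wait bounds the latency added to the first request in each batch.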

Figure (2): The architecture of the recommendation system pipeline as a Ray Serve application. Client requests are routed to ingress deployment replicas, which synthesize a candidate batch. Then, the ingress deployment sends the candidate batch to the ranker deployment, which runs a 167M parameter DLRM.

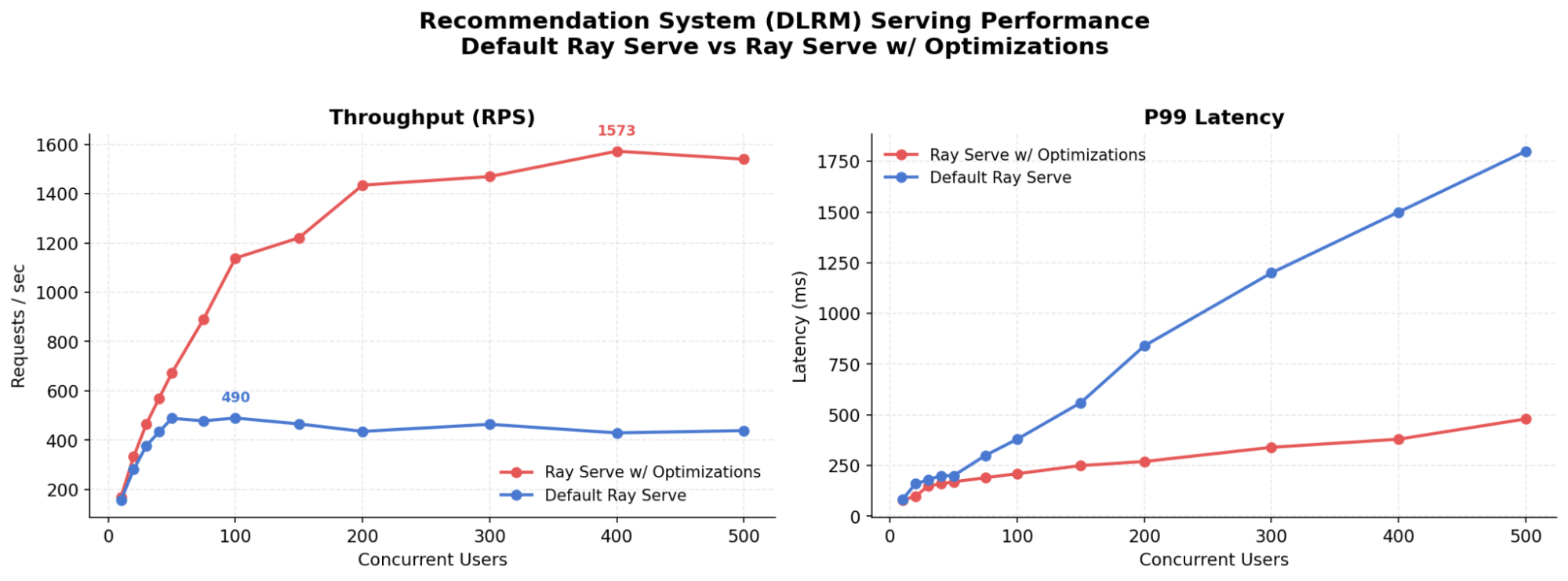

To benchmark its performance, we swept the number of concurrent users, measuring latency and throughput from the client side. As shown in Figure (3), with these optimizations enabled, the system can scale to higher maximum RPS at lower latency SLAs. At 100 users, optimized throughput is already more than double the baseline, with 25% lower P99 latency. At 400 users, the gap widens further, as Ray Serve’s default proxy saturates compared to HAProxy.

Figure (3): Enabling HAProxy and other throughput optimization flags can unlock a new SLA frontier for recommendation system use cases, where the number of concurrent users determines the economics of serving these models.
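A client-side sweep over concurrent users, as described above, can be sketched with a small closed-loop load generator. This is a minimal illustration rather than the benchmark harness used for the figures; `run_users`, `sweep`, `fake_request`, and the request counts are hypothetical, and `send` stands in for whatever coroutine issues one HTTP request.

```python
import asyncio
import math
import time
from typing import Awaitable, Callable, Dict, List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


async def run_users(
    send: Callable[[], Awaitable[None]],
    num_users: int,
    requests_per_user: int,
) -> Dict[str, float]:
    """Run `num_users` closed-loop users and report client-side metrics."""
    latencies: List[float] = []

    async def user() -> None:
        # Each user waits for a response before sending the next request.
        for _ in range(requests_per_user):
            start = time.perf_counter()
            await send()
            latencies.append(time.perf_counter() - start)

    wall_start = time.perf_counter()
    await asyncio.gather(*(user() for _ in range(num_users)))
    elapsed = time.perf_counter() - wall_start
    return {
        "users": num_users,
        "rps": len(latencies) / elapsed,
        "p50_s": percentile(latencies, 50),
        "p99_s": percentile(latencies, 99),
    }


async def sweep(
    send: Callable[[], Awaitable[None]],
    user_counts: List[int],
    requests_per_user: int = 20,
) -> List[Dict[str, float]]:
    """Measure one row of metrics per concurrency level."""
    return [await run_users(send, n, requests_per_user) for n in user_counts]


async def fake_request() -> None:
    """Placeholder target; replace with a real HTTP call to the application."""
    await asyncio.sleep(0.001)
```

Plotting `rps` against `p99_s` for each concurrency level gives the kind of latency/throughput frontier shown in Figure (3).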

Case Study: LLM Inference

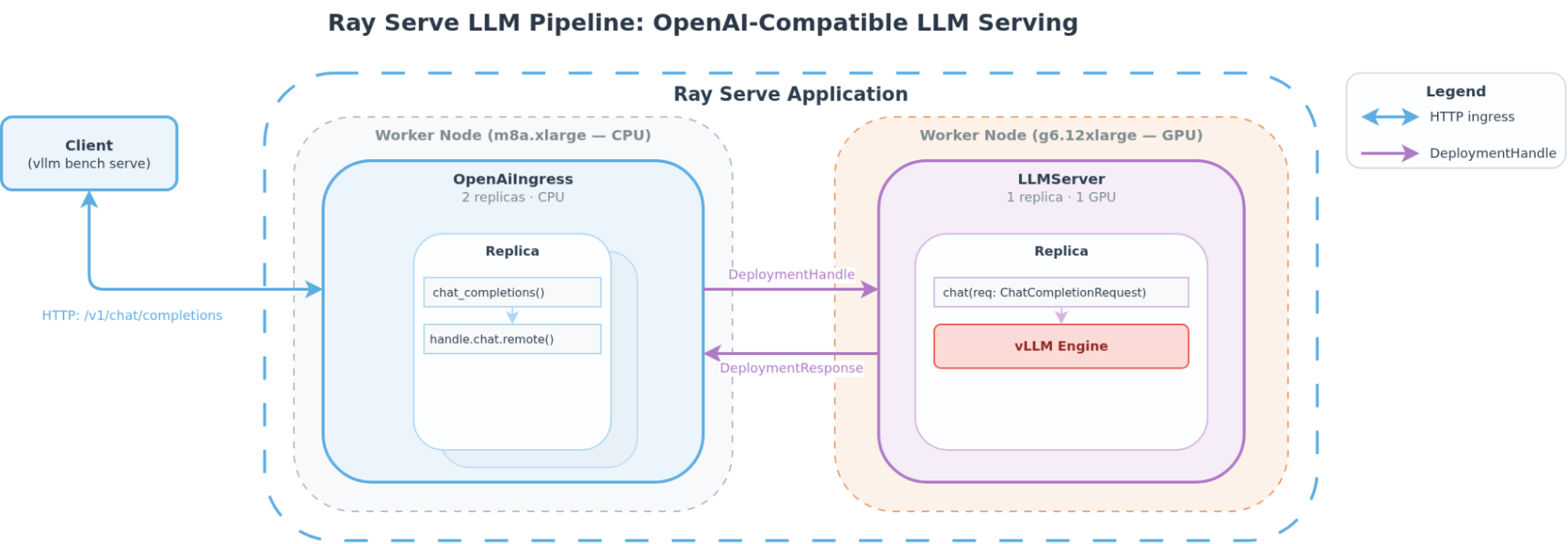

Ray Serve LLM is an optimized implementation of Ray Serve + vLLM geared toward high-throughput, low-latency online LLM inference. Figure (4) shows the architecture diagram of a basic Ray Serve LLM pipeline. It is composed of two deployments:

OpenAiIngress, which is responsible for mapping the OpenAI protocol to a specific model deployment based on the model ID.

The LLMServer deployment, which is responsible for scaling vLLM engine replicas.

Figure (4): Architecture diagram of a basic Ray Serve LLM application. Client requests are directed to the OpenAiIngress deployment first and are mapped to a target LLMServer deployment based on model_id. Then, requests are routed through the deployment handle to the “least loaded” replica of the target LLMServer deployment. Finally, requests are batched into an async vLLM engine, and tokens are streamed back to the client.

See here for more details about Ray Serve LLM architecture.
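The two routing steps in Figure (4), mapping a model ID to a deployment and then picking the "least loaded" replica, can be sketched as follows. The post does not specify how Ray Serve measures load or samples replicas, so this sketch assumes an in-flight request count per replica; `Replica` and `ModelRouter` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Replica:
    name: str
    in_flight: int = 0  # assumed load signal: requests currently executing


@dataclass
class ModelRouter:
    replicas_by_model: Dict[str, List[Replica]] = field(default_factory=dict)

    def route(self, model_id: str) -> Replica:
        """Pick the replica with the fewest in-flight requests for a model."""
        try:
            candidates = self.replicas_by_model[model_id]
        except KeyError:
            raise LookupError(f"unknown model_id: {model_id!r}") from None
        chosen = min(candidates, key=lambda replica: replica.in_flight)
        chosen.in_flight += 1
        return chosen

    def complete(self, replica: Replica) -> None:
        """Release the slot once the response has been fully streamed."""
        replica.in_flight -= 1
```

For streaming LLM responses the slot is held until the last token is sent, so long generations keep a replica "loaded" for longer than short ones.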

We analyzed the effectiveness of recent optimizations in Ray Serve to answer the following:

As we scale the number of vLLM replicas, does throughput scale linearly per node?

Scaling Number of Replicas per Node

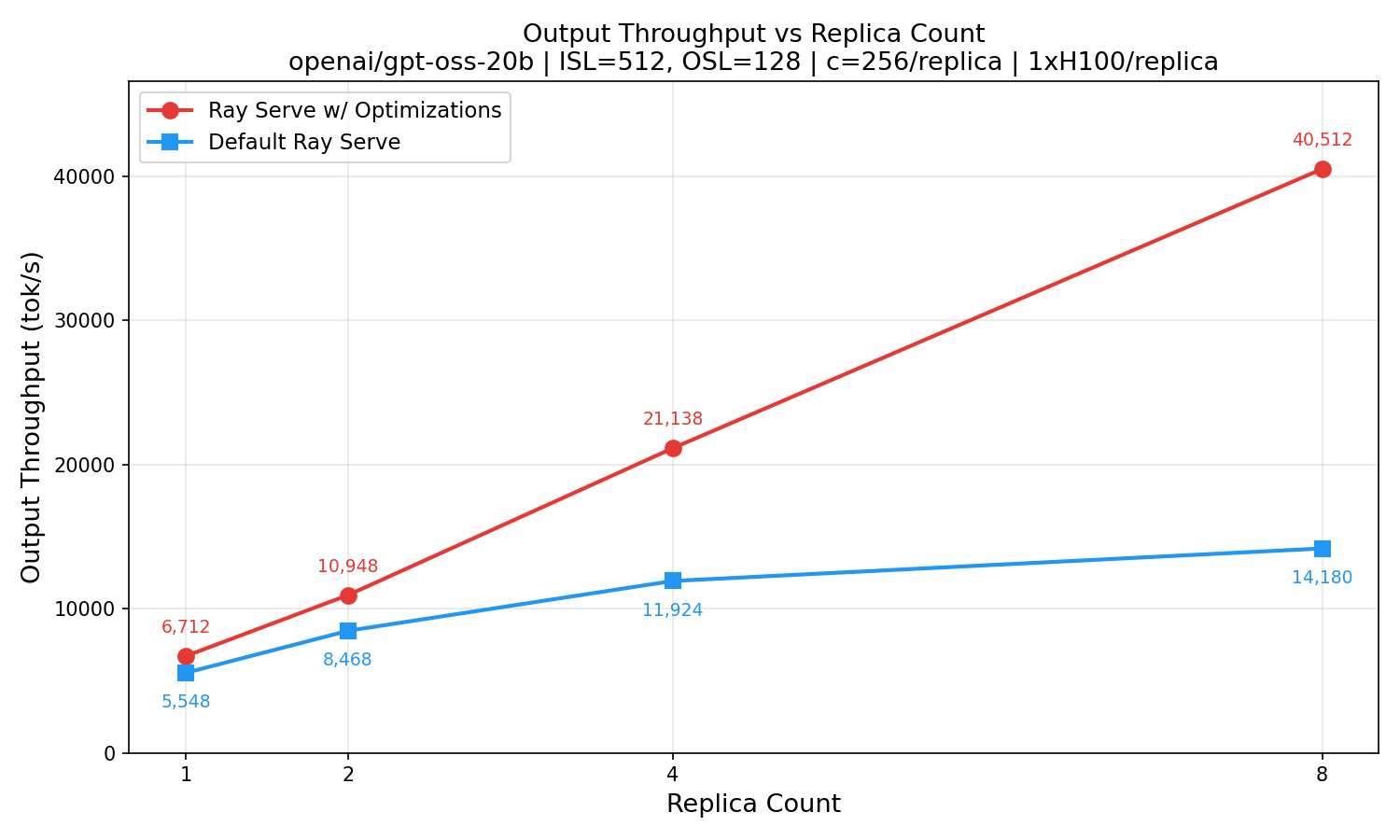

We conducted this experiment by sweeping the number of vLLM replicas serving openai/gpt-oss-20b. Each replica runs with tensor parallelism 1 (TP1) on a single H100 GPU, at a concurrency of 256 users per replica. The client workload is random, with an input sequence length (ISL) of 512 tokens and an output sequence length (OSL) of 128 tokens. With the default Ray Serve Proxy, adding replicas to the node yields diminishing returns as the Python proxy process saturates. With HAProxy’s higher throughput capability, throughput scales linearly, as seen in Figure (5), while the default proxy plateaus.

Figure (5): With the new optimizations in Ray Serve we can scale LLM inference horizontally, gaining more throughput per node at scale. Without the optimizations to the ingress and gRPC, the scaling is sublinear.

Deep Dives

In these sections, we will go into greater detail on the contribution of each optimization to the performance of Ray Serve.

Benchmarking Setup

For these benchmarks, we conduct unary and streaming benchmarks on a no-op, “echo” application, where the deployment simply sends or streams a pre-determined payload back to the client.

Here, “unary” means an RPC call that takes one request message as input, and returns a single response message as output. “Streaming” refers to “server-side streaming” in gRPC, where one request yields multiple response messages. In both unary and streaming experiments, the Ray Serve application is composed of two deployments in series: an ingress deployment and a child deployment.

When a client request reaches the ingress deployment, the ingress deployment calls a remote function on the child deployment. In the unary experiments, the child deployment’s remote function returns an empty string, and the ingress deployment returns an empty HTTP response to the client. In the streaming experiments, the child deployment returns ChatCompletionResponse SSE chunks.
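The shape of the two echo workloads can be sketched in plain asyncio. This is not the benchmark application itself: the functions below only mirror the call structure (ingress calls child, unary returns one value, streaming yields many), and the chunk payload is a simplified placeholder rather than a real ChatCompletionResponse.

```python
import asyncio
import json
from typing import AsyncIterator, List


async def child_unary() -> str:
    """Child deployment, unary case: a single empty response."""
    return ""


async def child_stream(num_chunks: int) -> AsyncIterator[str]:
    """Child deployment, streaming case: one SSE-framed chunk per iteration."""
    for index in range(num_chunks):
        payload = {"index": index, "delta": "x"}  # placeholder chunk body
        yield f"data: {json.dumps(payload)}\n\n"


async def ingress_unary() -> str:
    """Ingress awaits exactly one response message from the child."""
    return await child_unary()


async def ingress_stream(num_chunks: int) -> AsyncIterator[str]:
    """Ingress forwards each chunk as soon as the child produces it."""
    async for chunk in child_stream(num_chunks):
        yield chunk


async def collect(num_chunks: int) -> List[str]:
    return [chunk async for chunk in ingress_stream(num_chunks)]
```

Because neither stage does real work, any latency measured on such an application is framework overhead, which is what the following sections isolate.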

Optimizing Interdeployment Communication

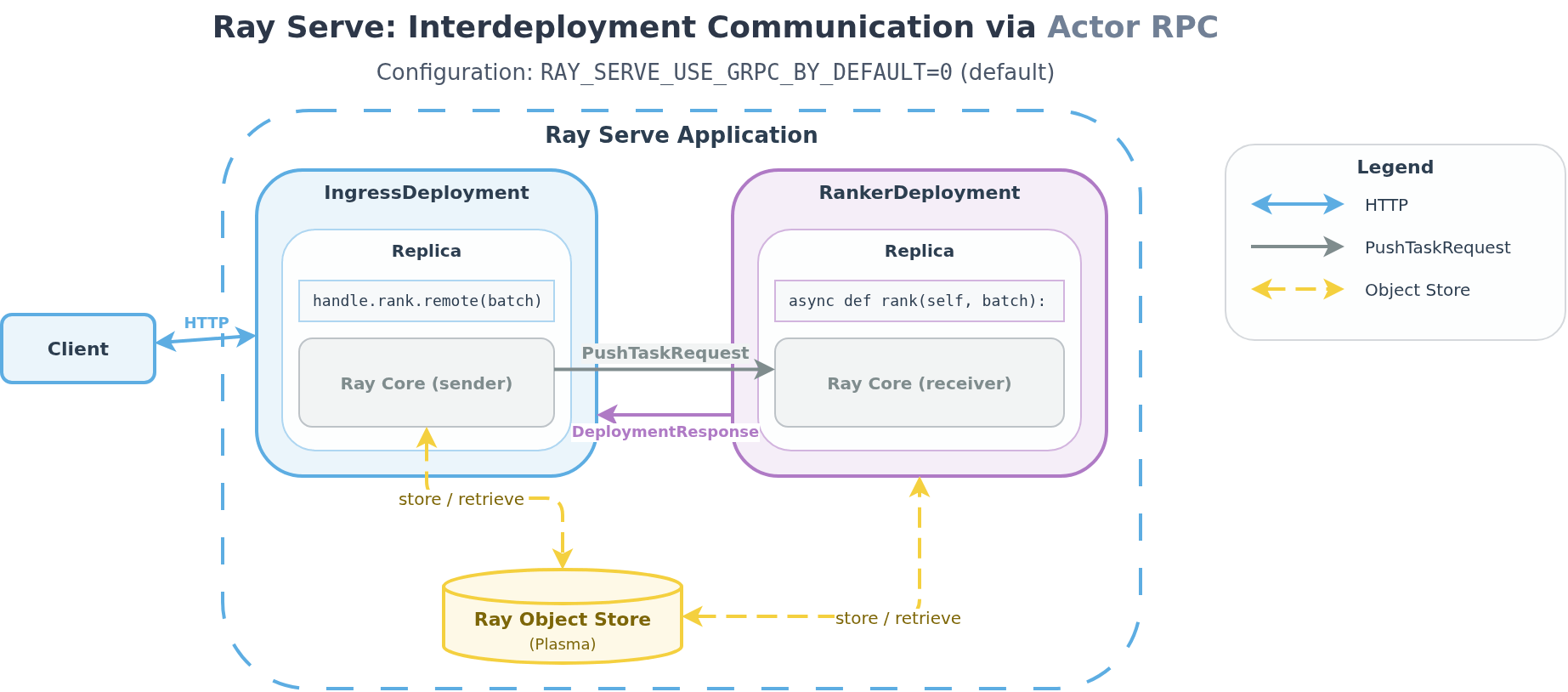

By default, when one deployment calls another deployment via its handle, Ray Serve dispatches this as a Ray Core actor task. For small payloads (<100 KB), arguments are serialized and included directly in the PushTaskRequest message. For larger payloads, arguments are serialized, stored in the Object Store (Ray's shared-memory store), and passed by reference. Figure (6) shows the path that deployment payloads would take by default in Ray Serve.

Figure (6): Ray Serve uses standard actor task machinery, including PushTaskRequest, to perform RPC by default. In this case, small payloads (<100 KB) are passed inline in the PushTaskRequest message, while larger payloads are transported via Ray’s object store.
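The size-based split in the default path can be expressed as a small decision function. This is a sketch of the rule as stated, not Ray Core code: `pickle` stands in for Ray's serializer, "100 KB" is assumed to mean 100 × 1024 bytes, and `default_argument_path` is a hypothetical name.

```python
import pickle
from typing import Any

# Assumption: the "<100 KB" threshold is read as 100 * 1024 bytes.
INLINE_LIMIT_BYTES = 100 * 1024


def default_argument_path(argument: Any) -> str:
    """Return which path a serialized argument would take under the stated rule."""
    size = len(pickle.dumps(argument))  # stand-in for Ray's own serialization
    return "inline" if size < INLINE_LIMIT_BYTES else "object_store"
```

For example, a short prompt string would travel inline with the task request, while a multi-megabyte tensor would be placed in the object store and passed by reference.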

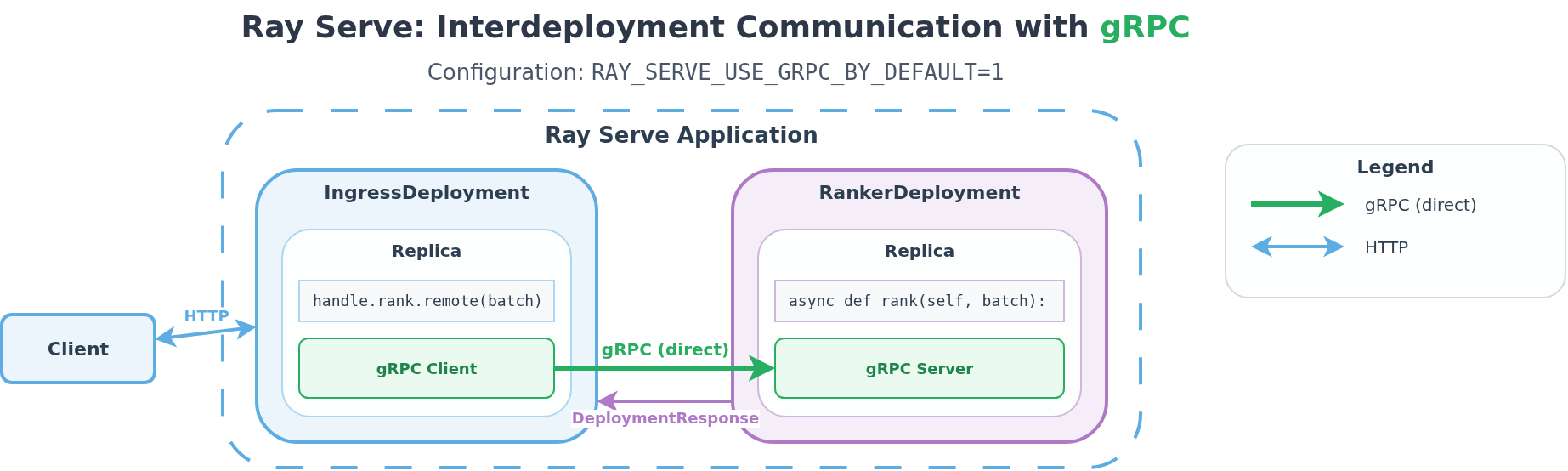

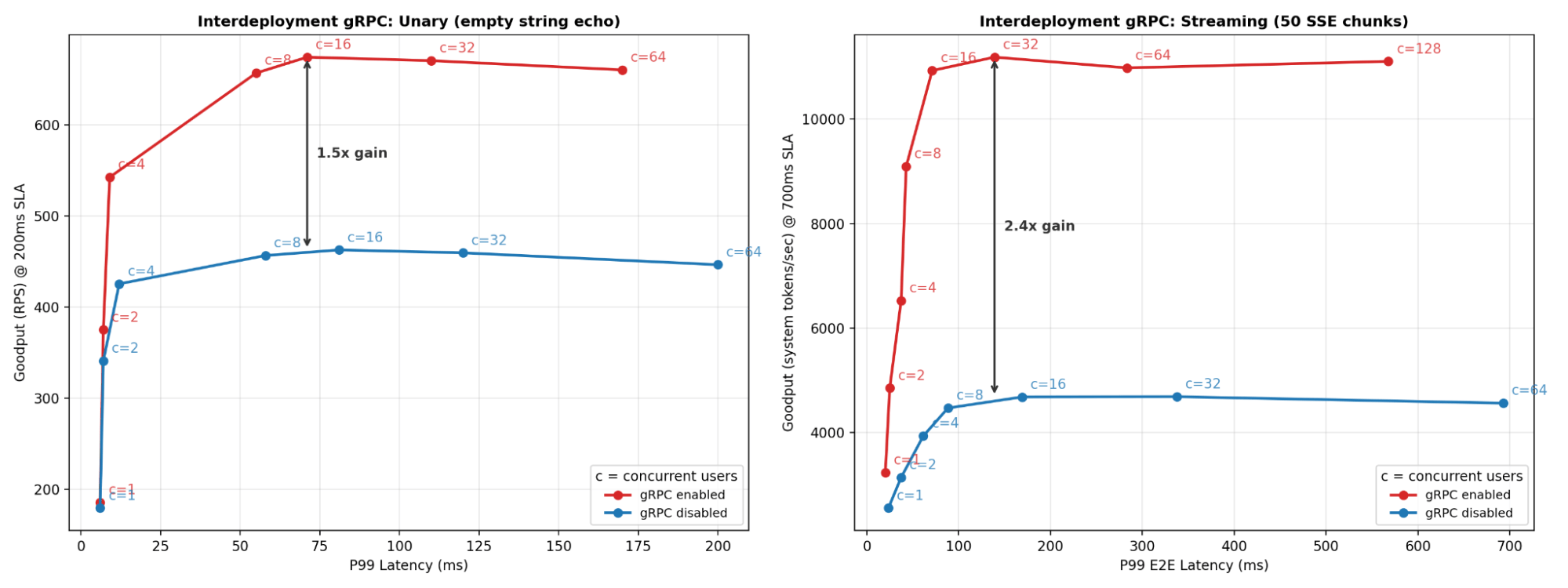

The Ray Core dataplane supports ObjectRefs, reference counting, and lineage reconstruction, which are useful for large objects and orchestrating complex patterns. However, following the rule of least power, Ray Serve now supports direct, point-to-point gRPC for interdeployment communication, bypassing Ray Core. With interdeployment gRPC enabled, Ray Serve establishes direct gRPC channels between replica actors, as illustrated in Figure (7). Arguments are serialized with protobuf and sent point-to-point over the network. The performance benefit is seen in Figure (8). At the same latency SLA, throughput increases by 1.5x in the unary case, and 2.4x in the streaming case.

Figure (7): With interdeployment gRPC, Ray Serve replicas send RPC data directly to each other's gRPC servers. This optimization can reduce the overhead of communication between deployment replicas.

With gRPC-based interdeployment communication, Ray Serve uses Ray Core primarily for actor placement, cluster orchestration, metrics propagation, and health checks. User request traffic, however, flows directly over gRPC between deployments and does not go through Ray Core.

The environment variable RAY_SERVE_USE_GRPC_BY_DEFAULT controls whether gRPC is used by default. In addition, Ray Serve exposes handle options that give users fine-grained control over the transport method for each deployment handle. For example, in an application with mostly large interdeployment payloads, developers can keep Ray Core transport as the default and opt in to gRPC only for deployments with small payloads. See the performance tuning docs for more details.

Figure (8): Performance of unary and streaming micro benchmarks comparing the effect of RAY_SERVE_USE_GRPC_BY_DEFAULT on an application composed of two chained deployments, each with a single replica. We observe up to 1.5x throughput in the unary case with a no-op payload, as well as 2.4x throughput in the streaming case with 50 SSE chunks.

Optimizing Ingress Overhead

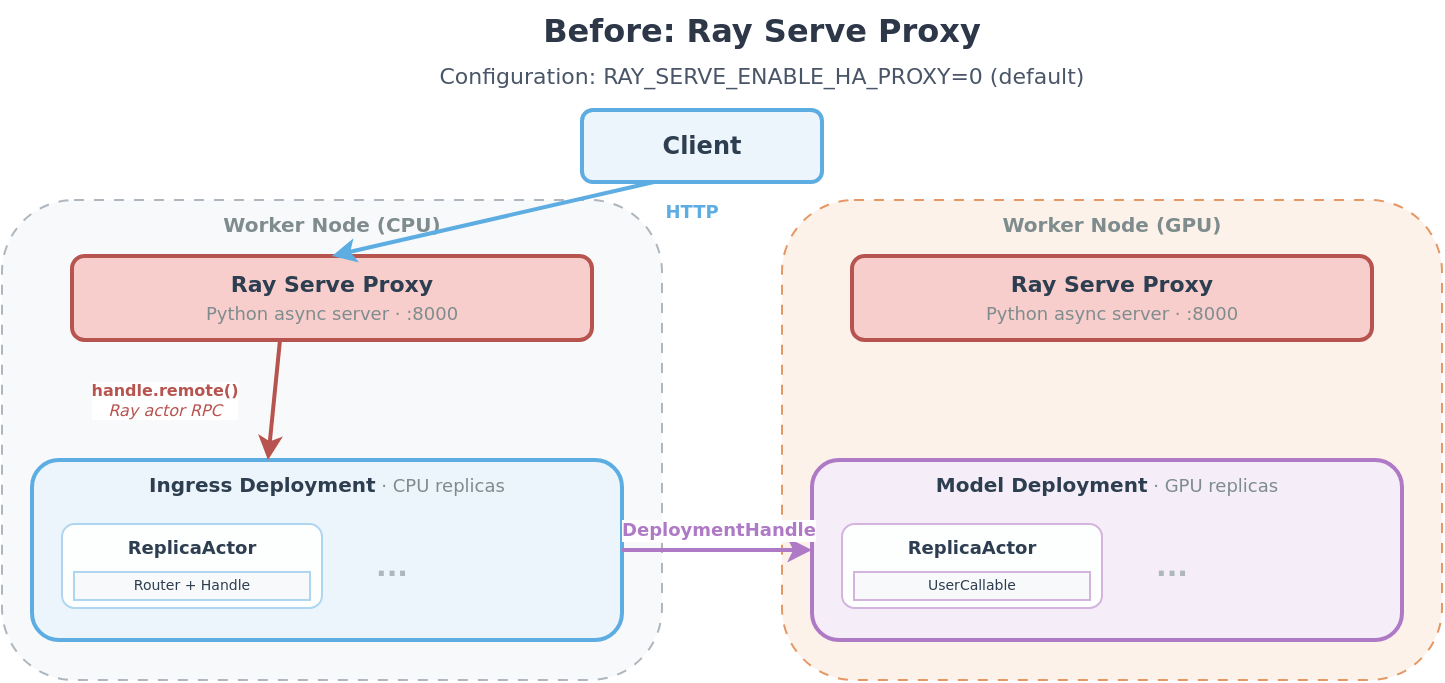

The built-in Ray Serve Proxy is a Python-based async server (based on Uvicorn and Starlette) that accepts client connections, routes requests to the appropriate deployment via handle.remote() and streams responses back. It becomes a bottleneck at high concurrency, inflating per-request latency and capping throughput independent of the total number of healthy replicas. Figure (9) shows the architecture of Ray Serve’s proxy layer when HAProxy is disabled.

Figure (9): Ray Serve Proxy terminates HTTP connections at port 8000 and passes the request content to the ingress deployment in the Ray Serve app using a Ray remote call.

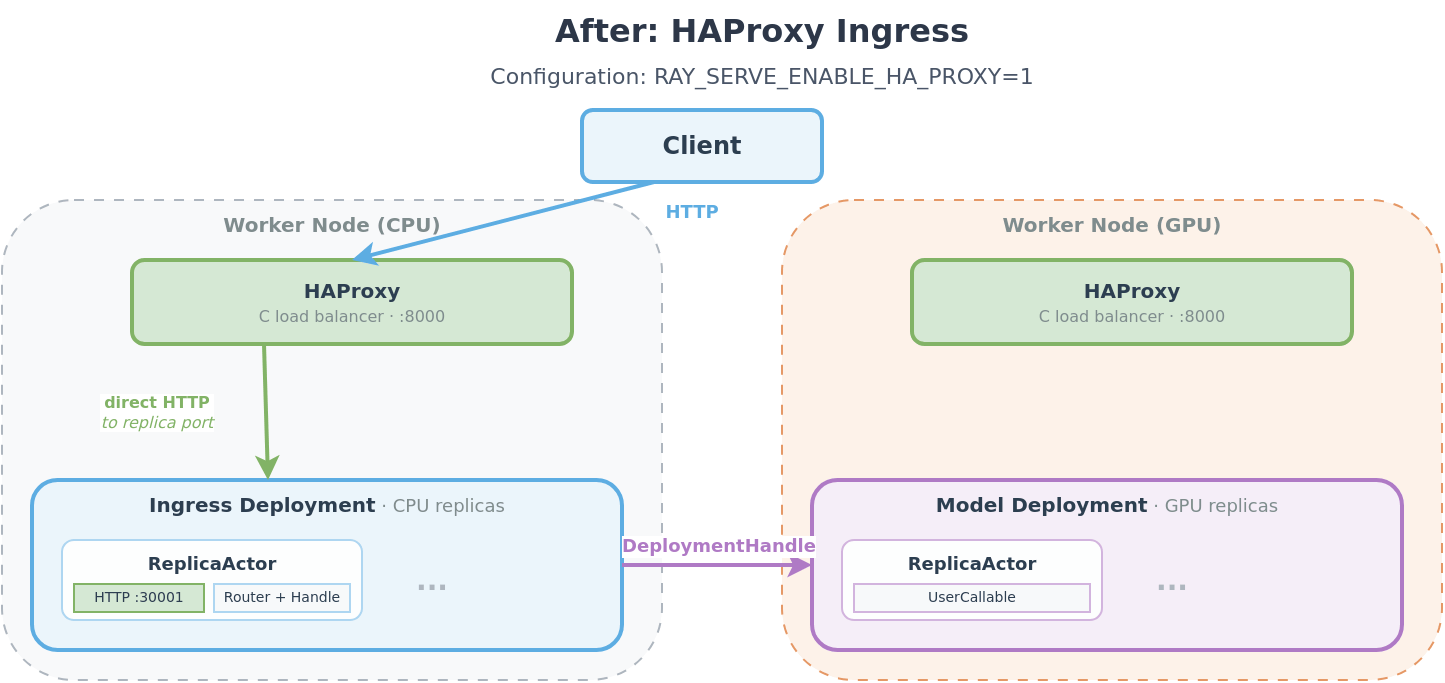

Figure (10): Architecture diagram showing the Ray Serve framework after replacing the Ray Serve Proxy with HAProxy. Ingress deployments now contain HTTP servers with ports in the :30000 range, to which HAProxy routes incoming requests.

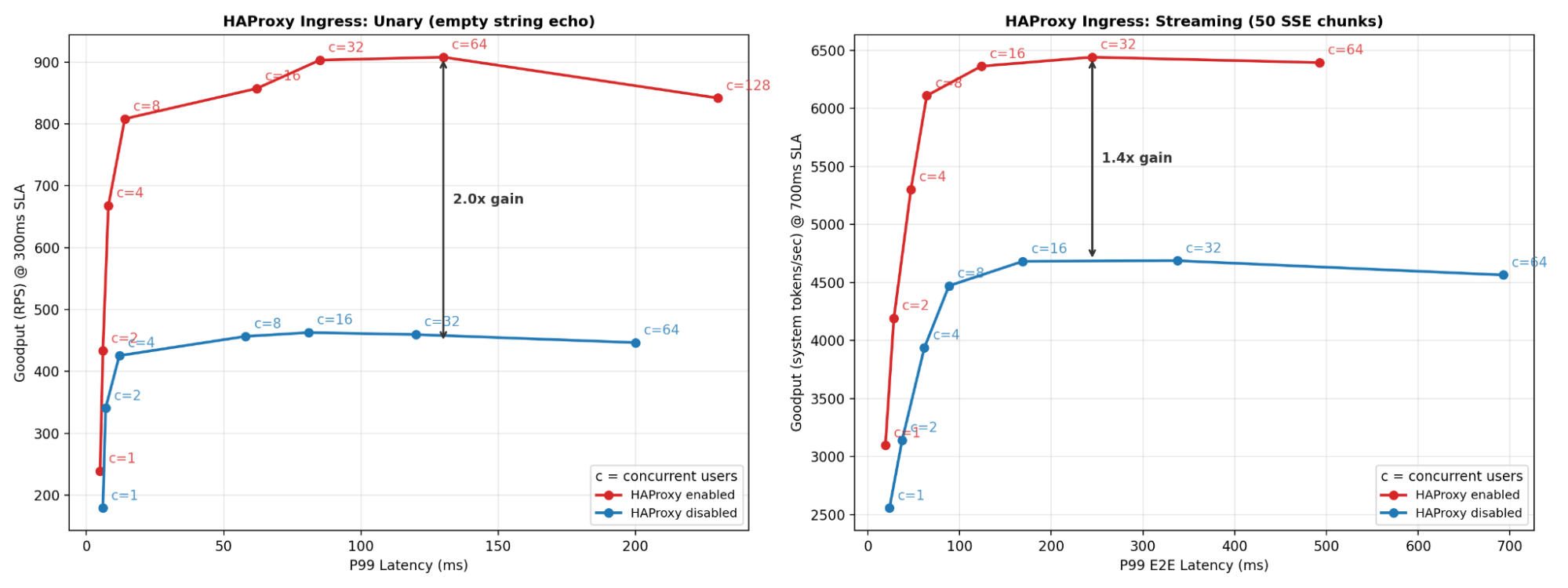

When HAProxy is enabled, as seen in Figure (10), each ingress deployment replica starts an HTTP server internally. HAProxy is notified of these servers by the ServeController, and can directly route requests to them. HAProxy is a C-based HTTP reverse proxy commonly used in production systems at high scale. The performance benefit is seen in Figure (11). At the same latency SLA, throughput increases by 2x in the unary case, and 1.4x for streaming.

Figure (11): Performance of Unary and Streaming micro benchmarks comparing the effect of RAY_SERVE_ENABLE_HA_PROXY on an application composed of two chained deployments, each with a single replica. We observe up to 2x throughput in the unary case with a no-op payload, as well as 1.4x throughput in the streaming case with 50 SSE chunks.
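To make the HAProxy routing model concrete, the sketch below renders a minimal HAProxy frontend/backend pair from a list of ingress replica addresses. It is an illustration of the idea only: the configuration Ray Serve actually generates is not shown in this post, the section names and `leastconn` policy are assumptions, and the fragment omits the `global` and `defaults` sections a complete HAProxy config needs.

```python
from typing import List, Tuple


def render_haproxy_config(
    replicas: List[Tuple[str, int]],
    listen_port: int = 8000,
) -> str:
    """Render a frontend on `listen_port` that balances across ingress replicas."""
    lines = [
        "frontend serve_http",
        f"    bind *:{listen_port}",
        "    default_backend serve_ingress",
        "",
        "backend serve_ingress",
        "    balance leastconn",  # assumed policy, chosen for illustration
    ]
    for index, (host, port) in enumerate(replicas):
        # One `server` line per ingress replica HTTP server, with health checks.
        lines.append(f"    server ingress{index} {host}:{port} check")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_haproxy_config([("10.0.0.1", 30000), ("10.0.0.2", 30001)]))
```

In this model, a controller would re-render and reload the backend list whenever ingress replicas are added or removed, so the request path itself never passes through Python proxy code.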

Single Event Loop

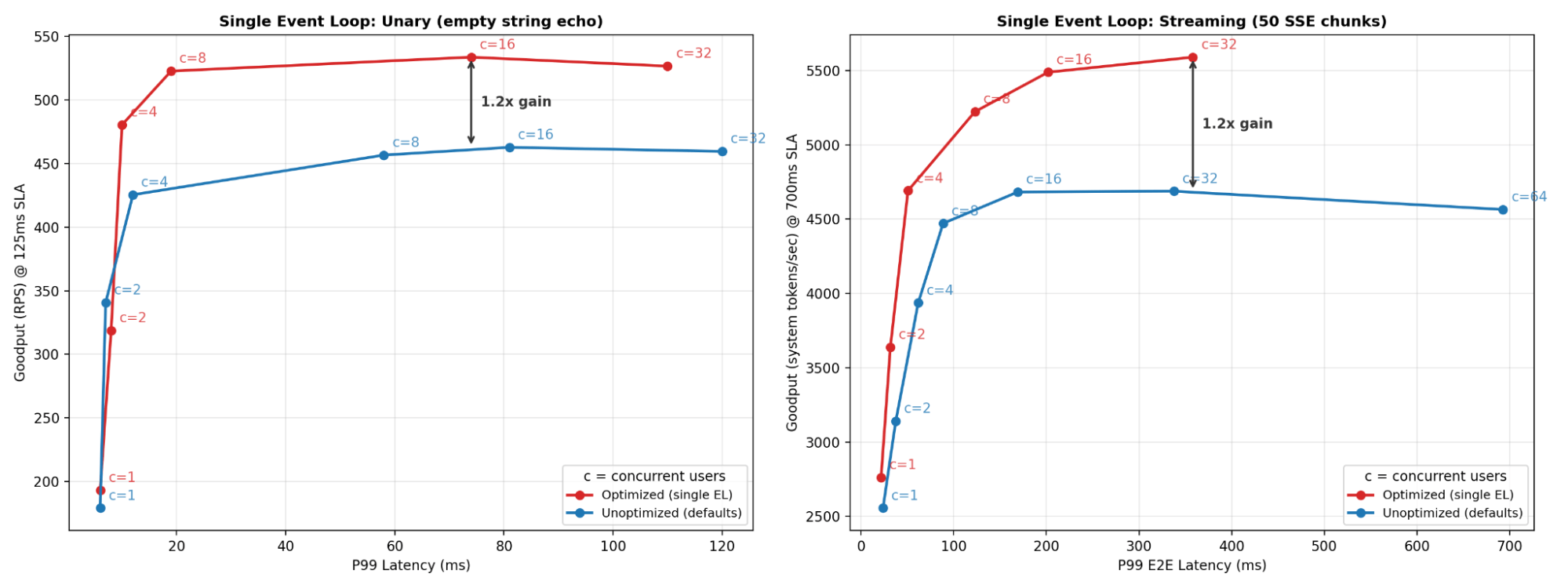

By default, Ray Serve runs user code and routing tasks on event loops separate from the main system event loop, so that blocking operations in user code cannot stall it. However, this separation introduces coordination overhead that can hurt performance when the user code is already properly written async code.

The RAY_SERVE_RUN_USER_CODE_IN_SEPARATE_THREAD and RAY_SERVE_RUN_ROUTER_IN_SEPARATE_LOOP environment variables let developers disable the separate event loops and avoid the additional task coordination overhead. Figure (12) shows the benefit: roughly 20% throughput improvement at the same latency SLA.

Figure (12): Performance of unary and streaming micro benchmarks comparing the effect of environment variables RAY_SERVE_RUN_USER_CODE_IN_SEPARATE_THREAD and RAY_SERVE_RUN_ROUTER_IN_SEPARATE_LOOP on an application composed of two chained deployments, each with a single replica. We observe up to 1.2x throughput in the unary case with a no-op payload, as well as 1.2x throughput in the streaming case with 50 SSE chunks.
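The coordination cost being removed here is the cross-thread hop needed to run a coroutine on another event loop. The sketch below contrasts the two call paths using only asyncio and threading; it is not Ray Serve's replica code, and `user_handler`, `call_on_separate_loop`, and `call_on_same_loop` are hypothetical names.

```python
import asyncio
import threading
from typing import Tuple


async def user_handler(x: int) -> int:
    """Well-behaved async user code that never blocks the loop."""
    return x + 1


def start_background_loop() -> asyncio.AbstractEventLoop:
    """Start a second event loop on a daemon thread for user code."""
    loop = asyncio.new_event_loop()
    threading.Thread(target=loop.run_forever, daemon=True).start()
    return loop


async def call_on_separate_loop(user_loop: asyncio.AbstractEventLoop, x: int) -> int:
    # Two thread-safe handoffs per request: submit to the user loop, then
    # wake the calling loop when the result is ready.
    future = asyncio.run_coroutine_threadsafe(user_handler(x), user_loop)
    return await asyncio.wrap_future(future)


async def call_on_same_loop(x: int) -> int:
    # Single loop: a plain await with no cross-thread scheduling.
    return await user_handler(x)


async def main() -> Tuple[int, int]:
    user_loop = start_background_loop()
    try:
        separate = await call_on_separate_loop(user_loop, 1)
        same = await call_on_same_loop(1)
    finally:
        user_loop.call_soon_threadsafe(user_loop.stop)
    return separate, same
```

Both paths return the same result; the separate-loop path protects the system loop from blocking user code, while the single-loop path is cheaper when user code is known to be non-blocking.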

Miscellaneous Request-Path Optimizations

Beyond the main gRPC and HAProxy architecture work, we shipped a set of request-path improvements that reduce per-request overhead in Python, networking, and runtime orchestration. Together they deliver meaningful latency and throughput gains under load.

Metrics work moved off the critical path

Metrics are useful only if they stay cheap. We reduced request-path metrics overhead by caching and batching updates in replicas and routers, so request execution spends less time in instrumentation and more time in user code. This is especially noticeable at high concurrency, where synchronous metric recording can otherwise become a measurable tax. PRs: #49971, #55897
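The general pattern of batching metric updates can be sketched as a counter that accumulates increments in memory and hands them to a sink in bulk. This illustrates the technique only and is not the code from the referenced PRs; `BufferedCounter`, `sink`, and `flush_every` are hypothetical.

```python
import threading
from collections import Counter
from typing import Callable, Dict


class BufferedCounter:
    """Accumulates increments locally and emits them to a sink in batches."""

    def __init__(self, sink: Callable[[Dict[str, int]], None], flush_every: int = 100) -> None:
        self._sink = sink
        self._flush_every = flush_every
        self._pending: Counter = Counter()
        self._since_flush = 0
        self._lock = threading.Lock()

    def inc(self, name: str, value: int = 1) -> None:
        """Hot path: a dictionary update, with an occasional flush."""
        with self._lock:
            self._pending[name] += value
            self._since_flush += 1
            should_flush = self._since_flush >= self._flush_every
        if should_flush:
            self.flush()

    def flush(self) -> None:
        """Send all pending counts to the sink in one call."""
        with self._lock:
            if not self._pending:
                return
            snapshot = dict(self._pending)
            self._pending.clear()
            self._since_flush = 0
        # The sink is called outside the lock so slow exporters do not
        # block request threads.
        self._sink(snapshot)
```

A production version would typically also flush on a timer so that low-traffic replicas still report promptly.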

Logging overhead reduced with buffering and cached request context

Serve now buffers request logs and reuses cached context in hot logging paths, which cuts repeated formatting and I/O work per request. We also fixed buffered-context correctness so per-request metadata remains accurate while still getting the batching win. PRs: #54269, #57245, #55166, #56094
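Python's standard library already offers a building block for this style of log buffering. The sketch below uses `logging.handlers.MemoryHandler` to hold records in memory and forward them to a target handler in batches; it shows the general technique, not Serve's logging implementation, and `ListHandler` and `build_buffered_logger` are hypothetical helpers.

```python
import logging
import logging.handlers
from typing import List, Tuple


class ListHandler(logging.Handler):
    """Target handler that records formatted messages (stands in for file I/O)."""

    def __init__(self) -> None:
        super().__init__()
        self.messages: List[str] = []

    def emit(self, record: logging.LogRecord) -> None:
        self.messages.append(self.format(record))


def build_buffered_logger(
    name: str,
    target: logging.Handler,
    capacity: int = 64,
) -> Tuple[logging.Logger, logging.handlers.MemoryHandler]:
    """Create a logger whose records are flushed to `target` in batches."""
    buffered = logging.handlers.MemoryHandler(
        capacity=capacity,         # flush once this many records are buffered
        flushLevel=logging.ERROR,  # errors force an immediate flush
        target=target,
    )
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.addHandler(buffered)
    return logger, buffered
```

Any buffering scheme must capture per-request metadata at record creation time rather than at flush time, which is the correctness issue the paragraph above refers to.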

Fewer async tasks per request in ingress paths

A concrete optimization was reducing how many asyncio tasks are created per request. In the optimized ingress replica path, Serve now skips extra task orchestration when disconnect handling is disabled and no timeout is configured, and directly awaits user code instead of always spawning separate request/receive tasks. In practice, this trims event-loop scheduling overhead and improves throughput by avoiding unnecessary task lifecycle work on the hot path.
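The fast path described here can be sketched as a request runner that only creates extra tasks when it has something to race against. This is an illustration of the pattern, not the Serve ingress code; `run_request` and its parameters are hypothetical.

```python
import asyncio
from typing import Any, Awaitable, Callable, Optional


async def run_request(
    handler: Callable[[], Awaitable[Any]],
    *,
    timeout_s: Optional[float] = None,
    wait_for_disconnect: Optional[Callable[[], Awaitable[None]]] = None,
) -> Any:
    """Run user code, spawning extra tasks only when they are needed."""
    if timeout_s is None and wait_for_disconnect is None:
        # Fast path: nothing to race against, so await user code directly.
        return await handler()

    # Slow path: race the request against a disconnect signal and/or timeout.
    request_task = asyncio.ensure_future(handler())
    tasks = {request_task}
    if wait_for_disconnect is not None:
        tasks.add(asyncio.ensure_future(wait_for_disconnect()))
    done, pending = await asyncio.wait(
        tasks, timeout=timeout_s, return_when=asyncio.FIRST_COMPLETED
    )
    for task in pending:
        task.cancel()
    if request_task in done:
        return request_task.result()
    if done:
        raise ConnectionError("client disconnected before the response was ready")
    raise TimeoutError("request exceeded its timeout")
```

On the fast path no task objects are created at all, which is where the saved event-loop scheduling work comes from.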

Unary gRPC for unary-style request flows

Not every gRPC request needs streaming machinery. We added unary-unary handling for rejection-capable paths, avoiding streaming-style orchestration costs when the workload is fundamentally request/response. This lowers per-request overhead and improves throughput for common unary RPC patterns.

FastAPI dependency-resolution optimization (self/injected instance path)

One high-impact fix was making get_current_servable_instance asynchronous so FastAPI can await it directly instead of offloading dependency resolution to a threadpool. This removes unnecessary threadpool scheduling from every request on that path and materially improves latency and RPS for FastAPI ingress workloads. PR: #55457

Router data-structure optimizations

The request router was updated to use more efficient in-memory structures (including O(1) lookup-oriented paths) and lower-allocation flows. This reduces routing overhead per request, especially as replica count and traffic parallelism increase. PR: #60139
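One common way to get O(1) membership, insertion, removal, and random sampling over a changing replica set is a list paired with a position index. The sketch below shows that structure as an example of the kind of change described; it is not taken from the referenced PR, and `ReplicaIndex` is a hypothetical name.

```python
import random
from typing import Dict, List


class ReplicaIndex:
    """Replica ID set with O(1) add, remove, membership, and random sampling."""

    def __init__(self) -> None:
        self._ids: List[str] = []
        self._position: Dict[str, int] = {}

    def __len__(self) -> int:
        return len(self._ids)

    def __contains__(self, replica_id: str) -> bool:
        return replica_id in self._position

    def add(self, replica_id: str) -> None:
        if replica_id in self._position:
            return
        self._position[replica_id] = len(self._ids)
        self._ids.append(replica_id)

    def remove(self, replica_id: str) -> None:
        index = self._position.pop(replica_id, None)
        if index is None:
            return
        # Swap-remove: move the last ID into the vacated slot instead of
        # shifting every later element.
        last = self._ids.pop()
        if index < len(self._ids):
            self._ids[index] = last
            self._position[last] = index

    def sample(self, rng: random.Random) -> str:
        """Pick a uniformly random replica ID; raises ValueError if empty."""
        return self._ids[rng.randrange(len(self._ids))]
```

Compared with scanning a list on every request, the per-request cost stays constant as the replica count grows.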

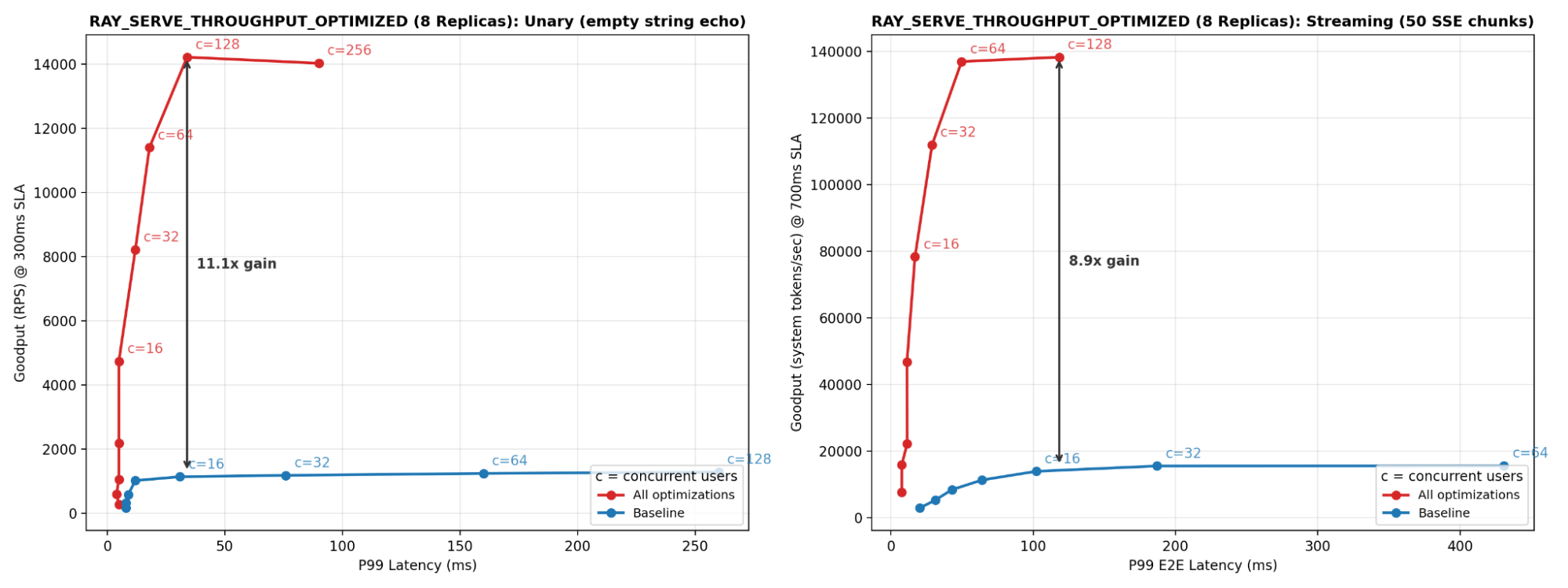

Throughput optimizations that are not yet enabled by default are grouped under a single flag, RAY_SERVE_THROUGHPUT_OPTIMIZED, which can be seen here in the code. The effect of enabling all of them, in combination with HAProxy, is shown in Figure (13). The applications tested for Figure (13) are also two-deployment compositions, but each has been scaled up to eight replicas to show Ray Serve’s increased per-node throughput capability.

Figure (13): Performance of unary and streaming micro benchmarks comparing the effect of RAY_SERVE_THROUGHPUT_OPTIMIZED on a scaled-up version of the deployments from the previous plots. We observe up to 11.1x throughput in the unary case with a no-op payload, as well as 8.9x throughput in the streaming case with 50 SSE chunks.
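Since these features are enabled through environment variables at cluster initialization, one way to apply them is to build the environment in Python before launching Ray. The variable names below are the ones mentioned in this post; the value `"1"` is an assumption about the expected format, and `serve_env` is a hypothetical helper. Whether the throughput flag already implies the other two is not stated here, so the sketch sets all three explicitly.

```python
import os
from typing import Dict, Mapping, Optional

# Flag names come from the post; the "1" values are an assumed format.
SERVE_PERF_FLAGS: Dict[str, str] = {
    "RAY_SERVE_ENABLE_HA_PROXY": "1",
    "RAY_SERVE_USE_GRPC_BY_DEFAULT": "1",
    "RAY_SERVE_THROUGHPUT_OPTIMIZED": "1",
}


def serve_env(base: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Return a copy of the environment with the Serve performance flags set."""
    env = dict(os.environ if base is None else base)
    env.update(SERVE_PERF_FLAGS)
    return env
```

The resulting mapping can be passed as `env=serve_env()` to `subprocess.run(["ray", "start", "--head"], check=True, ...)` on each node, or the same variables can be exported in the shell or container spec that starts the cluster. Check the performance tuning docs for the authoritative names and values.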

Conclusion

We discussed several new optimizations at different layers to significantly improve the throughput and latency of Ray Serve applications. Most notably, with HAProxy ingress and interdeployment gRPC enabled, a two-stage DLRM pipeline goes from 490 to 1,573 QPS at 75% lower P99 latency. For LLM inference using Ray Serve LLM, we maintain linear per-replica throughput while scaling replicas within a node. All of the code for reproducing the above results is available here.

We are working toward making HAProxy the default for ingress and gRPC the default for interdeployment communication. See the RFC for design details and discussion.

Join the community

Join Ray Slack! Link available here.

Anyscale and Google partnered to identify demand for this improvement. We've seen firsthand how latency bottlenecks in Ray Serve have limited its ability to scale for low-latency inference workloads. With HAProxy ingress and native gRPC on the data plane, this gap will be closed. We’re excited to help take users from development to production scale on Ray Serve.
