Introducing Vision-Language Reinforcement Learning in SkyRL

Today, we’re announcing full-stack support for vision-language model (VLM) post-training in SkyRL. Teams can now train multimodal models across supervised fine-tuning (SFT) and reinforcement learning (RL) workflows, using the same scalable post-training infrastructure that SkyRL provides for language models.

With out-of-the-box scalable recipes, anyone can get started building custom vision-language post-training workflows. Bringing an existing multimodal Tinker recipe? Run it with SkyRL:

# Self-hosted Tinker service
uv run --extra tinker --extra fsdp -m skyrl.tinker.api \
    --base-model "$MODEL" \
    --backend fsdp

# Run an existing Tinker recipe against the local server
TINKER_API_KEY=tml-dummy uv run -m your_recipe \
    base_url=http://localhost:8000

Alternatively, you can train multimodal models via SkyRL’s Python-based entrypoints.

Here’s an example from our agentic training recipe on VisGym Maze2D:

Figure 1: Example Maze2D agent evaluation from the end of training. The agent (blue dot) learns to navigate the maze, successfully reaching the red square.

Multimodal Workloads

Post-training workloads are becoming increasingly complex and multimodal. Tasks like computer use, robotics, and other visual agentic tasks require multi-step visual reasoning: agents process visual observations, take actions, and respond to environmental feedback. In SkyRL, supporting these workloads means bringing vision-language models into the post-training stack as first-class citizens, across both supervised fine-tuning and reinforcement learning.

Supported Recipes

SkyRL now covers the progression from supervised fine-tuning to multi-turn, multi-image agentic reinforcement learning. In particular:

VLM SFT through Tinker, enabling recipe-driven multimodal supervised fine-tuning.

Agentic VLM RL, for multi-step image-grounded reasoning tasks with tool calling.

Multi-image agentic VLM RL, for interactive visual environments where models must observe, act, and adapt over time.

Async execution and LoRA-based training options for practical multi-turn VLM RL workloads, inheriting the scalable optimization of SkyRL.

Aligning inference and training for VLM RL

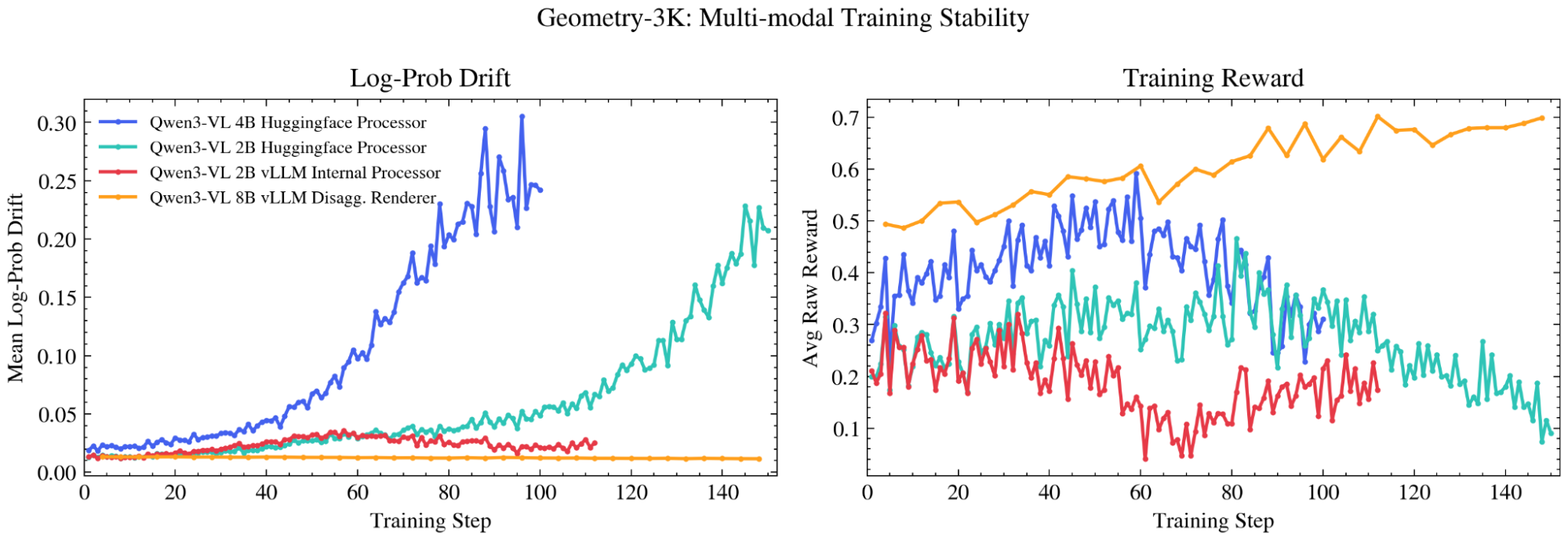

A core challenge in reinforcement learning is ensuring that rollout generation and train-time scoring agree on sequence construction and log probabilities. In early implementations, we saw severe log probability discrepancies between inference and training, driven by differences in multimodal input processing and numerical precision between the inference engine and the training stack. Unlike text tokens, which are discrete integer IDs, image inputs are continuous, so small changes in pixel values can cause large deviations in model outputs and destabilize training.
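One way to make this kind of discrepancy concrete is to compare, token by token, the log probabilities recorded by the inference engine at rollout time with those recomputed by the trainer on the same sequence. The sketch below is illustrative only: the function name `logprob_drift` and the returned keys are hypothetical and are not part of SkyRL.

```python
import math
from typing import Dict, Sequence


def logprob_drift(
    rollout_logprobs: Sequence[float],
    trainer_logprobs: Sequence[float],
) -> Dict[str, float]:
    """Summarize per-token disagreement between rollout and trainer log probs.

    Hypothetical helper for illustration; both inputs must score the same
    sampled tokens in the same order.
    """
    if len(rollout_logprobs) != len(trainer_logprobs):
        raise ValueError("sequences must have the same number of tokens")
    if not rollout_logprobs:
        raise ValueError("sequences must be non-empty")

    # Per-token difference: log pi_train(token) - log pi_rollout(token).
    diffs = [t - r for r, t in zip(rollout_logprobs, trainer_logprobs)]
    n = len(diffs)
    return {
        "mean_abs_diff": sum(abs(d) for d in diffs) / n,
        "max_abs_diff": max(abs(d) for d in diffs),
        # Mean of pi_train / pi_rollout; exactly 1.0 when the stacks agree.
        "mean_ratio": sum(math.exp(d) for d in diffs) / n,
    }
```

When the two stacks agree, the differences are zero and the mean ratio is 1.0; a mean ratio that moves away from 1.0 during training indicates the rollouts are no longer being scored as on-policy samples.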

To minimize drift between training and inference, we use the inference stack, here vLLM, as the source of truth for tokenization and input preparation. Specifically, we helped implement disaggregated multimodal rendering and generation. In this design, clients first call the /v1/chat/completions/render endpoint to convert OpenAI-style chat input into tokenized text and multimodal outputs. They then call /inference/v1/generate for token-in, token-out generation.
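The two-step flow can be sketched as follows. Only the two endpoint paths come from the text above; the request and response shapes are assumptions made for illustration and are not vLLM’s documented schema. The `post` argument is a hypothetical caller-supplied transport (for example, a thin wrapper around an HTTP client), which keeps the sketch independent of any particular client library.

```python
from typing import Any, Callable, Dict, List

RENDER_PATH = "/v1/chat/completions/render"
GENERATE_PATH = "/inference/v1/generate"

# Hypothetical transport: takes (path, JSON payload) and returns a JSON dict.
Post = Callable[[str, Dict[str, Any]], Dict[str, Any]]


def render_then_generate(
    post: Post,
    model: str,
    messages: List[Dict[str, Any]],
    sampling_params: Dict[str, Any],
) -> Dict[str, Dict[str, Any]]:
    """Render chat input once, then generate from the rendered payload."""
    # Step 1: the inference stack tokenizes text and processes images.
    # The {"model", "messages"} payload shape is an assumption.
    rendered = post(RENDER_PATH, {"model": model, "messages": messages})

    # Step 2: forward the rendered payload unchanged, adding only sampling
    # options. The "sampling_params" key is an assumption.
    request = dict(rendered)
    request["sampling_params"] = sampling_params
    generated = post(GENERATE_PATH, request)

    # Return both so the trainer can score exactly the inputs that were
    # used for generation, instead of re-processing the raw chat input.
    return {"rendered": rendered, "generated": generated}
```

The design point is that the rendered payload is treated as opaque: nothing on the client side re-tokenizes text or re-processes images, so rollout and training see the same inputs.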

Figure 2: Effect of different multi-modal processing pipelines on Geometry-3k training dynamics.

The disaggregated approach ensures consistency between the rollout engine and the training backend. Figure 2 visualizes two alternatives: using the HuggingFace processor, and repurposing vLLM’s internal input processor abstraction. Both lead to log probability drift and reward collapse, and scaling model size from 2B to 4B makes the problem worse. By separating concerns at the API level, the disaggregated approach keeps SkyRL training stable even at larger model sizes.

This abstraction helps ensure rollouts remain on-policy, while removing tokenization and input processing work from the training workers. As multimodal data processing becomes increasingly complex and computationally expensive, this allows for independent scaling of CPU workers to maintain GPU throughput.

From SFT to Multi-turn agentic RL

VLM supervised fine-tuning through Tinker

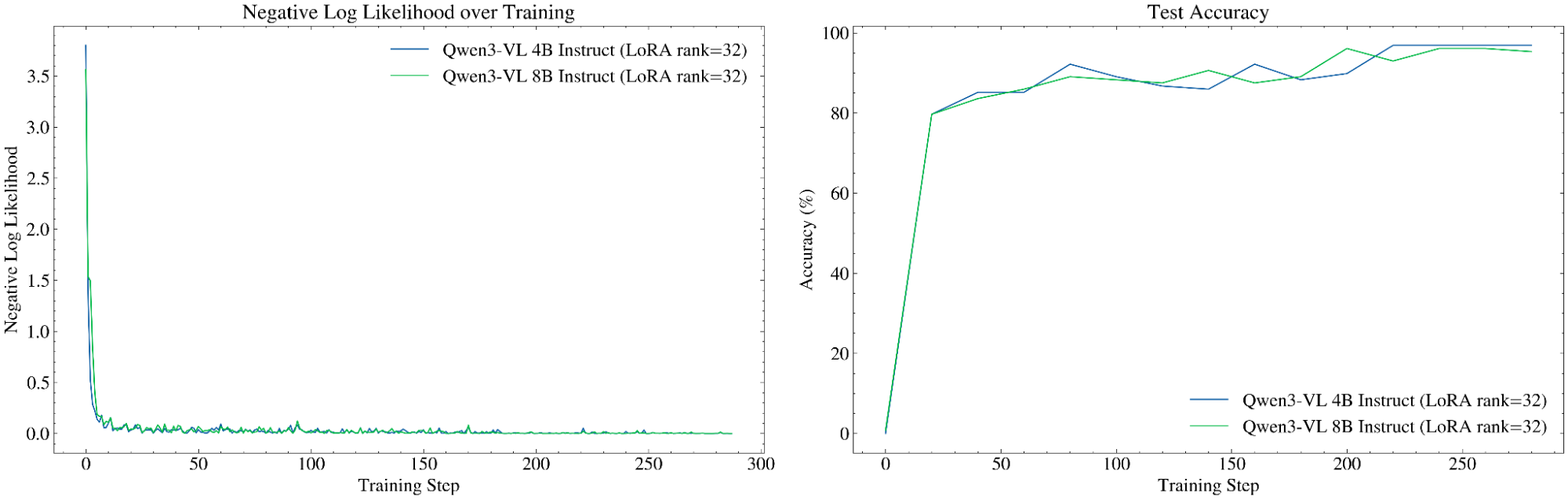

Through the Tinker interface, users can run supervised fine-tuning workflows for VLMs using the SkyRL training backend. As a simple example, an out-of-the-box Tinker classification SFT recipe runs end-to-end on SkyRL.

# Start a local Tinker server with the target base model
uv run --extra tinker --extra fsdp -m skyrl.tinker.api \
    --base-model "Qwen/Qwen3-VL-2B-Instruct" \
    --backend fsdp \
    --backend-config '{"trainer.use_sample_packing": false}'

# Clone the Tinker cookbook and run the recipe against the local server
git clone https://github.com/thinking-machines-lab/tinker-cookbook.git
cd tinker-cookbook
TINKER_API_KEY=tml-dummy uv run -m recipes.vlm_classifier.train \
    dataset=caltech101 \
    renderer_name=qwen3_vl \
    model_name=Qwen/Qwen3-VL-2B-Instruct \
    base_url=http://localhost:8000

Figure 3: VLM supervised fine-tuning through Tinker on the SkyRL training backend. NLL loss (left) and test accuracy (right) through training.

Agentic VLM RL - Geometry-3k

SkyRL supports reinforcement learning for agentic vision-language tasks with tool calls. To demonstrate this, we use Geometry-3k [1], a visual geometry reasoning dataset where the model must solve geometry problems. The model is given a single image and must reason through what it sees.

./examples/train/geometry3k/run_geometry3k.sh

Figure 4: Example rollouts from Qwen3-VL 8B Instruct on Geometry-3k. Through training, the agent learns to call tools and incorporate feedback when answering.

Multi-Image Agentic VLM RL

In complex use cases (e.g., computer use tasks), the environment responds to the actions of the agent. At every step, a new visual state is rendered, reflecting the current state of the environment. These tasks are particularly challenging because the model keeps the history of observations in context and must reason both spatially and temporally over the frames.

./examples/train/visgym/run_mazed2d_sft.sh

To demonstrate a full end-to-end workflow in SkyRL, we use VisGym [2], a suite of 17 multi-step, visually interactive environments spanning symbolic puzzles, real-image understanding, navigation, and manipulation. Instead of producing a single response, the model must repeatedly interpret visual state, take actions, and adapt over multiple steps based on feedback from the environment. This makes VisGym representative of more complex tasks and a natural testbed for SkyRL’s multi-turn agentic VLM RL support, including async execution and LoRA-based training.
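The observe, act, adapt loop described above can be sketched with a toy stand-in. Everything here is hypothetical: `ToyGridEnv`, `rollout`, and `greedy_policy` are illustrative names, not the VisGym or SkyRL APIs, and a dictionary of coordinates stands in for a rendered image frame. A real VLM policy would receive the accumulated frames in its context instead.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Position = Tuple[int, int]
Observation = Dict[str, Position]
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}


@dataclass
class ToyGridEnv:
    """Hypothetical grid world; a real environment would render images."""
    size: int = 4
    goal: Position = (3, 3)
    agent: Position = (0, 0)

    def observe(self) -> Observation:
        # Stand-in for a rendered frame of the current state.
        return {"agent": self.agent, "goal": self.goal}

    def step(self, action: str) -> Tuple[Observation, float, bool]:
        dx, dy = MOVES[action]
        # Clamp the move so the agent stays inside the grid.
        x = min(max(self.agent[0] + dx, 0), self.size - 1)
        y = min(max(self.agent[1] + dy, 0), self.size - 1)
        self.agent = (x, y)
        done = self.agent == self.goal
        return self.observe(), (1.0 if done else 0.0), done


def rollout(
    env: ToyGridEnv,
    policy: Callable[[List[Observation]], str],
    max_turns: int = 16,
) -> Tuple[List[Observation], float]:
    """Run one multi-turn episode, keeping every observation in history."""
    history = [env.observe()]
    total_reward = 0.0
    for _ in range(max_turns):
        action = policy(history)  # the policy sees all frames so far
        obs, reward, done = env.step(action)
        history.append(obs)
        total_reward += reward
        if done:
            break
    return history, total_reward


def greedy_policy(history: List[Observation]) -> str:
    """Toy policy: close the horizontal gap first, then the vertical gap."""
    (ax, ay), (gx, gy) = history[-1]["agent"], history[-1]["goal"]
    if ax != gx:
        return "right" if ax < gx else "left"
    return "down" if ay < gy else "up"
```

Note that the history grows by one observation per turn, which is why context length and per-turn image processing cost matter for multi-image workloads.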

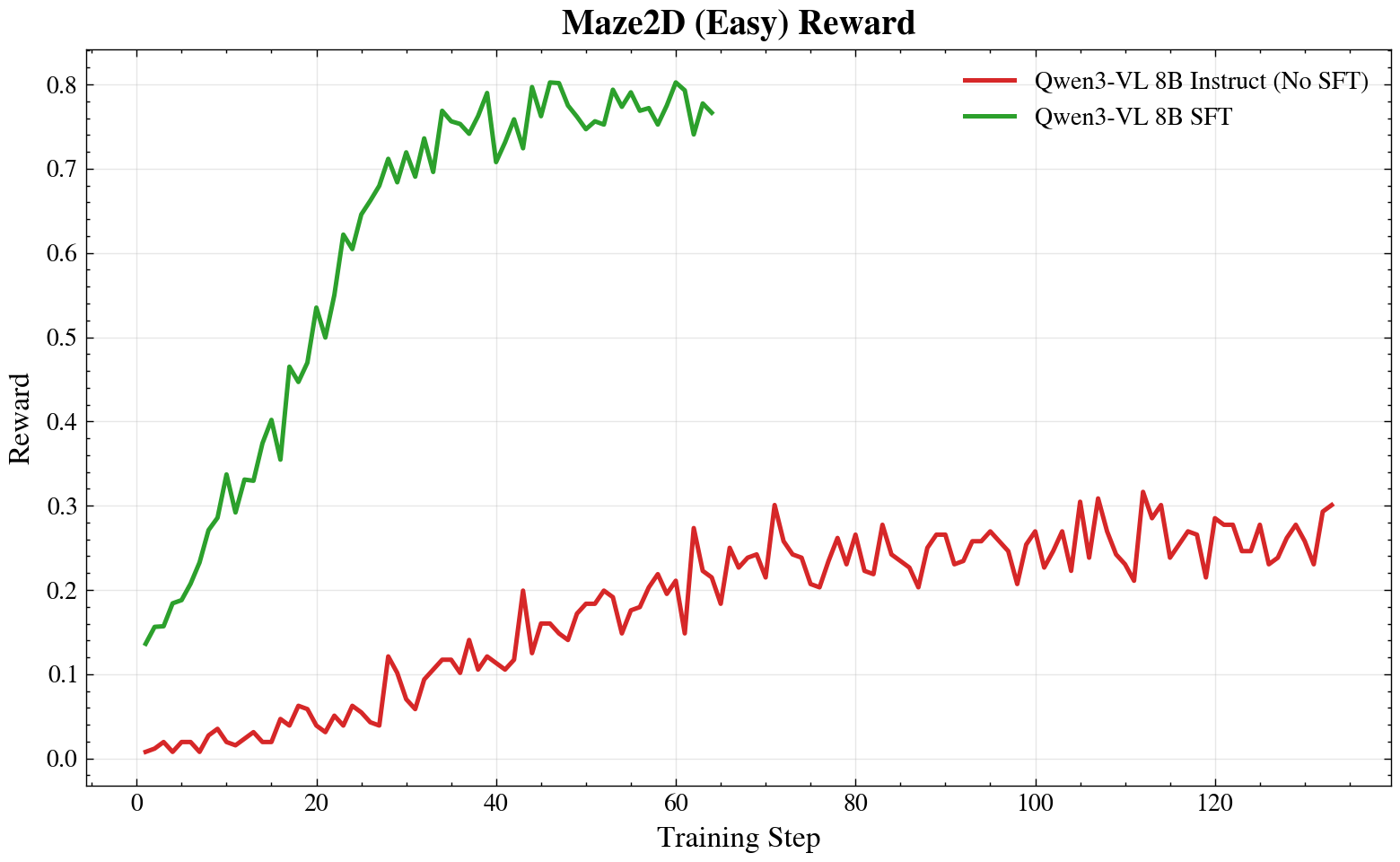

Figure 5: Reward curves on Maze2D from VisGym. Green: Model initialized from an SFT checkpoint. Red: Qwen3-VL 8B Instruct trained without SFT.

Figure 6: Sample Maze2D rollouts on a held-out environment seed from the Qwen3-VL 8B Instruct (no SFT) model.

Try it out now:

You can get started with VLM training either through the Tinker interface or directly from SkyRL; see our tutorial and introduction. We would love for the community to push the scale of SkyRL on vision-language tasks and to share feedback with us.

Future Roadmap:

Multimodal support is still early, and we have several major features planned, including sequence packing, Megatron backend support, long-context training with context parallelism, and step-wise training. Now is a great time to get involved in multimodal training, and we welcome community contributions!

[1] Lu, P., Gong, R., Jiang, S., Qiu, L., Huang, S., Liang, X., & Zhu, S.C. (2021). Inter-GPS: Interpretable Geometry Problem Solving with Formal Language and Symbolic Reasoning. In The Joint Conference of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (ACL-IJCNLP 2021).

[2] Wang, Z., Zhang, J., Ge, J., Lian, L., Fu, L., Dunlap, L., Goldberg, K., Wang, X., Stoica, I., Chan, D. M., Min, S., & Gonzalez, J. E. (2026). VisGym: Diverse, Customizable, Scalable Environments for Multimodal Agents. https://arxiv.org/abs/2601.16973
