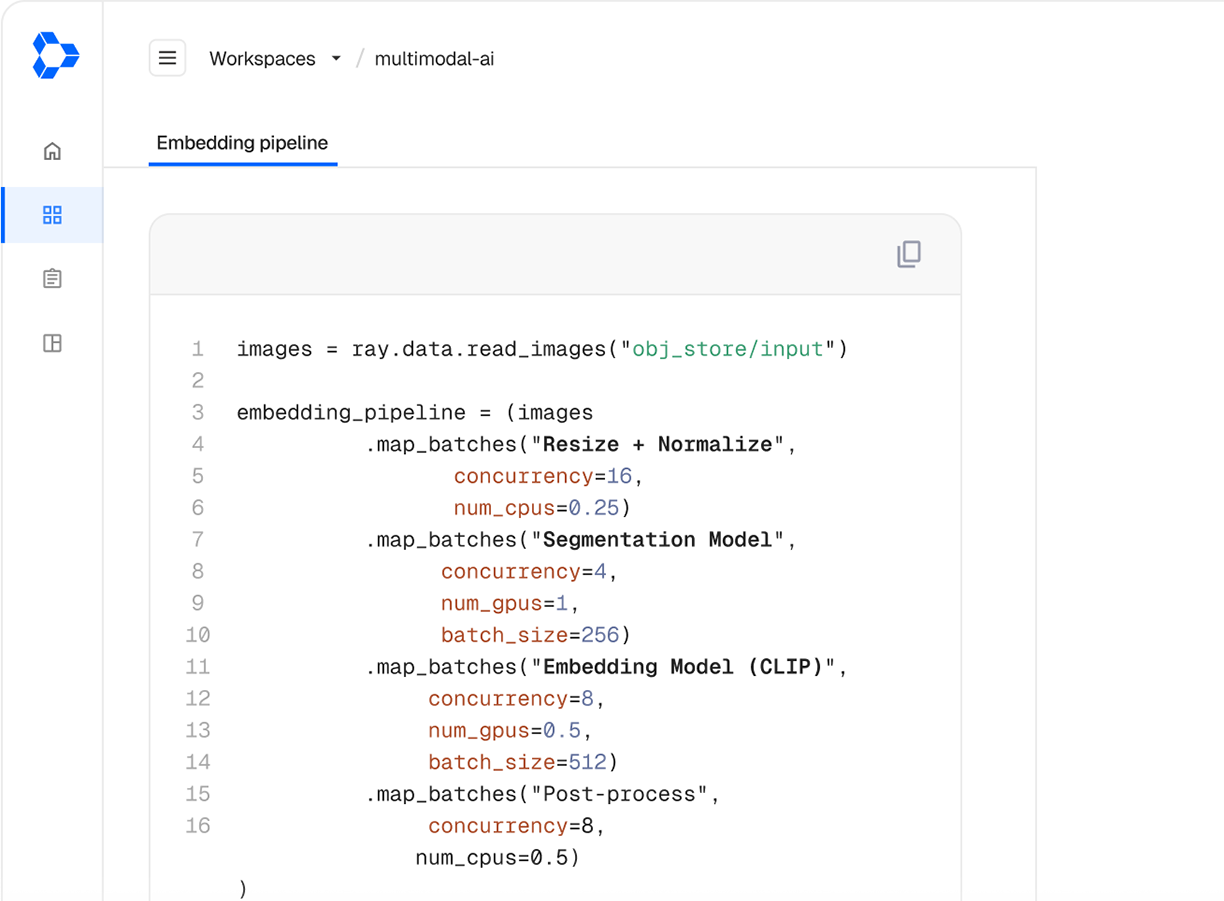

Data processing

Embedding generation

Process text, images, and other data modalities using unified CPU preprocessing and GPU inference with Ray on Anyscale.

Scale embedding computation for any data modality

Run batch and real-time embedding generation efficiently and at scale with Ray on Anyscale.

Bring your own hardware

Run fast, fault-tolerant embedding pipelines on your own infrastructure so data stays in your environment.

Increase GPU utilization

Stream data between CPU preprocessing and GPU inference with simple Python APIs to keep your hardware busy.

Offline and online computation

Turn any embedding model into a production batch pipeline or a production API endpoint.

“Anyscale removes the friction around environment management and scaling, so our teams can focus on delivering fast, intelligent experiences to our users.”

“Scheduling heterogeneous workloads is something we couldn’t really do easily before. We see much lower idle time and much better utilization.”

3x

Faster deployment of embedding pipelines

Batch and real-time embedding generation pipelines that scale

Ray on Anyscale abstracts infrastructure complexity so you can focus on your AI application

Streaming execution

Maximize throughput by processing data continuously across pipeline stages, instead of waiting for each stage to finish as traditional batch execution does

Unified CPU+GPU pipelines

Use CPUs for data loading and tokenization, and GPUs for model inference, in both batch and online workloads
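The streaming, CPU-then-GPU idea in the two cards above can be sketched in plain Python. This is a toy, single-process illustration, not Ray's API. `tokenize` and `embed` are hypothetical stand-ins for a real tokenizer and embedding model. A bounded queue lets the preprocessing stage keep producing while the inference stage consumes its output, so the stages overlap instead of running one after the other.

```python
import queue
import threading

_DONE = object()  # sentinel marking the end of the stream


def tokenize(text):
    # Hypothetical CPU preprocessing stage: lowercase and split on whitespace
    return text.lower().split()


def embed(tokens, dim=4):
    # Hypothetical "GPU" inference stage: a toy bag-of-tokens vector.
    # A real pipeline would run an embedding model here.
    vec = [0.0] * dim
    for tok in tokens:
        vec[sum(map(ord, tok)) % dim] += 1.0
    return vec


def streaming_pipeline(texts, max_in_flight=8):
    # Bounded queue: the preprocessing thread blocks when inference falls behind,
    # so at most max_in_flight preprocessed items wait in memory (backpressure).
    q = queue.Queue(maxsize=max_in_flight)

    def preprocess():
        try:
            for text in texts:
                q.put(tokenize(text))
        finally:
            q.put(_DONE)  # always signal the end so the consumer never hangs

    worker = threading.Thread(target=preprocess)
    worker.start()

    results = []
    while True:
        item = q.get()
        if item is _DONE:
            break
        results.append(embed(item))  # inference overlaps with ongoing preprocessing
    worker.join()
    return results


if __name__ == "__main__":
    print(streaming_pipeline(["Hello world", "Ray on Anyscale"]))
```

Both stages run in one process here, so the sketch shows the pipeline's structure, not its speedup. In a distributed system, the stages would run on separate CPU and GPU workers.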

APIs that abstract infra

Write AI applications with intuitive APIs that optimize throughput, latency, and scaling under the hood

Advanced observability

Use tree and DAG dashboard views to pinpoint bottlenecks and errors for faster debugging and optimization

Job-level checkpointing

Resume from the previous state after a pause or failure, without reprocessing data that has already been completed
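At its core, job-level checkpointing records which units of work have finished and skips them on restart. The sketch below shows that idea with a local JSON file. It is a hypothetical illustration, not Anyscale's checkpointing mechanism, and `run_with_checkpoint`, `load_done`, and `save_done` are names invented for this example.

```python
import json
import os


def load_done(path):
    # Return the batch IDs recorded as complete by an earlier run (empty on first run)
    if not os.path.exists(path):
        return set()
    with open(path) as f:
        return set(json.load(f))


def save_done(path, done):
    # Write to a temporary file, then atomically swap it in, so a crash
    # mid-write never leaves a corrupted checkpoint behind
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(sorted(done), f)
    os.replace(tmp, path)


def run_with_checkpoint(batches, process, path):
    # batches: iterable of (batch_id, batch) pairs; use int or str IDs
    # so they round-trip through JSON unchanged
    done = load_done(path)
    processed_now = []
    for batch_id, batch in batches:
        if batch_id in done:
            continue  # finished before the pause or failure: skip, don't reprocess
        process(batch)
        done.add(batch_id)
        save_done(path, done)  # checkpoint after every completed batch
        processed_now.append(batch_id)
    return processed_now
```

Checkpointing after every batch costs a small write each time but bounds the rework. After a failure, only the batch that was in progress gets processed again.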

Production readiness

Deploy latency-optimized pipelines with built-in alerting, monitoring, autoscaling, and fault tolerance

Build. Run. Scale. Repeat.

Deploy advanced AI applications with Ray on Anyscale without adding operational complexity.

Explore more on Anyscale

Multimodal data pipelines

Transform complex data modalities such as video, images, voice, text, and more into AI-ready datasets

Distributed training and fine-tuning

Scale existing training code from one machine to thousands of GPUs with intuitive scaling configs

Composite AI serving

Serve one or many models and Python applications working together as a single API endpoint