Model Training

Distributed training & fine-tuning

Scale training from one to thousands of GPUs using your ML framework of choice with Ray on Anyscale.

Iterate faster on large-scale datasets and models

Scale existing training code from one machine to tens or thousands of GPUs with minimal configuration.
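To make the idea concrete, here is a toy, dependency-free simulation of synchronous data-parallel training, the usual pattern behind scaling one training loop across many GPUs. It is not Ray Train's API and does not describe how Anyscale implements scaling. The helper names (`local_gradient`, `all_reduce_mean`, `train_data_parallel`) are hypothetical. Each simulated worker computes a gradient on its own shard, the gradients are averaged, and every worker applies the same update.

```python
# Toy simulation of synchronous data-parallel training (stdlib only).
# Hypothetical helper names; this is not Ray Train's API.

def local_gradient(w, b, shard):
    """MSE gradient for the model y = w * x + b on one worker's shard."""
    n = len(shard)
    gw = sum(2 * (w * x + b - y) * x for x, y in shard) / n
    gb = sum(2 * (w * x + b - y) for x, y in shard) / n
    return gw, gb


def all_reduce_mean(grads):
    """Average per-worker gradients (a stand-in for an all-reduce)."""
    k = len(grads)
    return sum(g[0] for g in grads) / k, sum(g[1] for g in grads) / k


def train_data_parallel(data, num_workers, steps=3000, lr=0.02):
    # Round-robin sharding; equal shard sizes make the averaged
    # gradient identical to the full-batch gradient.
    shards = [data[i::num_workers] for i in range(num_workers)]
    w, b = 0.0, 0.0
    for _ in range(steps):
        # In a real cluster each shard's gradient is computed on its own GPU.
        grads = [local_gradient(w, b, shard) for shard in shards]
        gw, gb = all_reduce_mean(grads)
        w, b = w - lr * gw, b - lr * gb  # every worker applies the same update
    return w, b


if __name__ == "__main__":
    data = [(float(x), 3.0 * x + 1.0) for x in range(8)]
    print(train_data_parallel(data, num_workers=1))
    print(train_data_parallel(data, num_workers=4))
```

With equal-sized shards, the 1-worker and 4-worker runs should converge to the same parameters (close to `w = 3`, `b = 1` for this data). In Ray Train, the analogous setting is the worker count passed through `ScalingConfig`; check the current Ray documentation for exact usage.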

Interactive development at scale

Leverage Anyscale Workspaces to develop interactively and debug distributed training runs.

Unlock your data

Unify data preprocessing at scale with model training to iterate quickly and keep GPUs busy.
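A common way to keep accelerators busy is to overlap preprocessing with training so the training loop rarely waits on data. The sketch below shows that producer/consumer pattern with a background thread and a bounded queue from the Python standard library. It is a generic illustration, not how Ray or Anyscale streams data; `preprocess` and `train` are hypothetical stand-ins for a real transform and a real GPU step. Python threads only truly overlap when the work releases the GIL (I/O, GPU calls, many native libraries); for CPU-heavy transforms a process pool or a distributed data system is the usual choice.

```python
import queue
import threading

_DONE = object()  # sentinel marking the end of the stream


def preprocess(raw):
    """Hypothetical CPU transform, e.g. scaling 8-bit pixel values to [0, 1]."""
    return [v / 255.0 for v in raw]


def producer(raw_batches, q):
    # Runs on a background thread so preprocessing can overlap with training.
    for raw in raw_batches:
        q.put(preprocess(raw))  # blocks while the queue is full (backpressure)
    q.put(_DONE)


def train(raw_batches, prefetch=4):
    q = queue.Queue(maxsize=prefetch)
    worker = threading.Thread(target=producer, args=(raw_batches, q), daemon=True)
    worker.start()
    results = []
    while True:
        batch = q.get()  # often already prefetched, so the training step waits less
        if batch is _DONE:
            break
        results.append(sum(batch))  # stand-in for a GPU training step
    worker.join()
    return results


if __name__ == "__main__":
    print(train([[255, 0], [510, 255], [0, 0]]))  # [1.0, 3.0, 0.0]
```

The bounded queue (`prefetch`) keeps the producer at most a few batches ahead, which caps memory use while still hiding preprocessing latency.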

Accelerate debugging

Pinpoint performance bottlenecks with one-click CPU and GPU profiling on live training jobs.

“Anyscale lets us scale both experimentation and the number of developers running experiments, all without being slowed down by infrastructure complexity.”

“With Anyscale, we have no ceiling on scale, and an incredible opportunity to bring AI features and value to our 170 million users.”

“Ray and Anyscale aligned with our vision: to iterate faster, scale smarter, and operate more efficiently.”

10x larger datasets used for VLA model training

Unified compute for data preparation, training, and post-training at scale

Ray on Anyscale abstracts away the infrastructure complexity of model training so you can focus on development

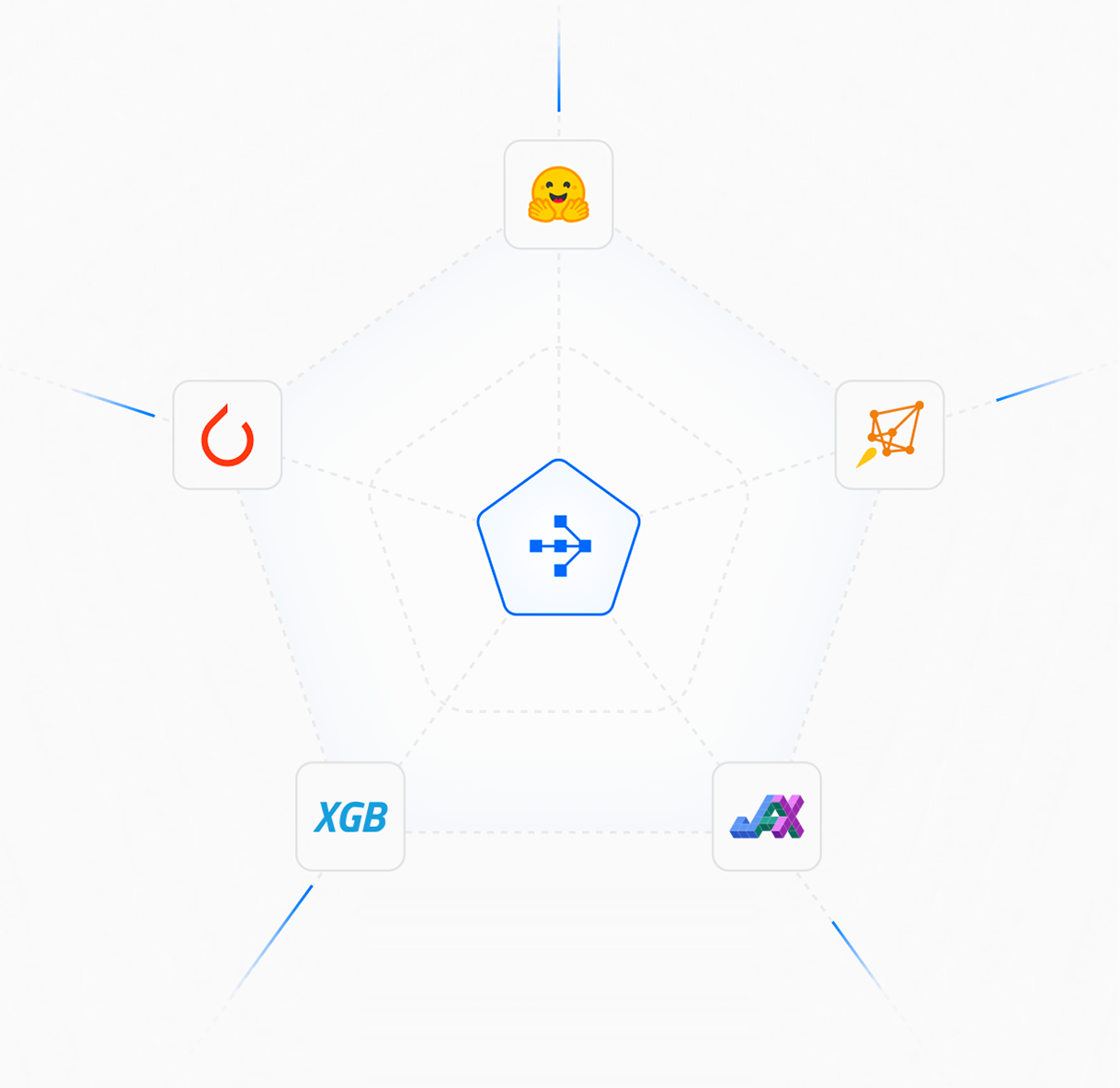

Multi-framework support

Scale PyTorch, XGBoost, Hugging Face, JAX, or TensorFlow model training across nodes

Training infra observability

Profile CPU and GPU performance in distributed runs with persistent logs and integrated dashboards
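For the CPU side, Python's built-in `cProfile` and `pstats` modules give the kind of per-function breakdown a profiler produces. The sketch below profiles a toy training loop in a single process; `load_batch`, `train_step`, and `run` are hypothetical stand-ins, GPU kernels are not covered, and this is not the profiler Anyscale provides.

```python
import cProfile
import io
import pstats


def load_batch(n):
    """Hypothetical data-loading step (pure CPU work here)."""
    return [i * 0.5 for i in range(n)]


def train_step(batch):
    """Hypothetical compute step standing in for a forward/backward pass."""
    return sum(x * x for x in batch)


def run(steps=20, batch_size=5_000):
    total = 0.0
    for _ in range(steps):
        total += train_step(load_batch(batch_size))
    return total


profiler = cProfile.Profile()
profiler.enable()
run()
profiler.disable()

# Sort by cumulative time to see which stage dominates each step.
buffer = io.StringIO()
pstats.Stats(profiler, stream=buffer).sort_stats("cumulative").print_stats(10)
report = buffer.getvalue()
print(report)
```

Comparing the `cumtime` column for `train_step` and `load_batch` shows whether compute or data loading dominates; in a distributed run, a similar report would be collected per worker.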

Mid-epoch resumption

Resume training from intermediate progress after node failure or other interruption
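Mid-epoch resumption generally means checkpointing not only model state but also the position within the epoch. The sketch below assumes a JSON file as the checkpoint store and a running sum as a stand-in for model weights; it records `(epoch, batch)` alongside the state and restarts from there after a simulated failure. `SimulatedFailure`, `save_checkpoint`, `load_checkpoint`, and `train` are hypothetical names, and the page does not describe how Anyscale implements this feature.

```python
import json
import os
import tempfile


class SimulatedFailure(Exception):
    """Stands in for a node loss or preemption."""


def save_checkpoint(path, state):
    # Write to a temp file and rename it, so a crash never leaves a partial checkpoint.
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)


def load_checkpoint(path):
    if not os.path.exists(path):
        return {"epoch": 0, "batch": 0, "total": 0}
    with open(path) as f:
        return json.load(f)


def train(batches, epochs, ckpt_path, fail_at=None, ckpt_every=2):
    state = load_checkpoint(ckpt_path)  # resumes mid-epoch if a checkpoint exists
    while state["epoch"] < epochs:
        for i in range(state["batch"], len(batches)):
            if fail_at == (state["epoch"], i):
                raise SimulatedFailure(f"lost node at epoch {state['epoch']}, batch {i}")
            state["total"] += batches[i]  # stand-in for an optimizer step
            state["batch"] = i + 1        # position after this batch
            if state["batch"] % ckpt_every == 0:
                save_checkpoint(ckpt_path, state)
        state["epoch"], state["batch"] = state["epoch"] + 1, 0
        save_checkpoint(ckpt_path, state)
    return state["total"]


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "ckpt.json")
    batches = [1, 2, 3, 4, 5]
    try:
        train(batches, epochs=2, ckpt_path=path, fail_at=(1, 3))
    except SimulatedFailure as err:
        print("interrupted:", err, "| checkpoint:", load_checkpoint(path))
    print("resumed total:", train(batches, epochs=2, ckpt_path=path))
```

Only the batches after the last checkpoint are replayed, and because state and position are saved together in one atomic rename (`os.replace`), the resumed run ends with the same result as an uninterrupted one.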

Automatic lineage tracking

Track dataset and model relationships with built-in lineage mapping and MLflow integration
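At its core, lineage tracking is a directed graph from each artifact to the artifacts it was derived from. The sketch below uses a hypothetical in-memory `LineageGraph` class to show the idea: record edges when a dataset or model is produced, then walk the graph to answer "what did this model come from?". It does not use MLflow or any Anyscale API, and the artifact names are made up.

```python
from collections import defaultdict


class LineageGraph:
    """Hypothetical in-memory lineage store: artifact -> direct parents."""

    def __init__(self):
        self._parents = defaultdict(set)

    def record(self, output, *inputs):
        # `output` (e.g. a trained model) was produced from `inputs` (datasets, base models).
        self._parents[output].update(inputs)

    def upstream(self, artifact):
        """Every transitive ancestor of `artifact`, found by a depth-first walk."""
        seen, stack = set(), [artifact]
        while stack:
            for parent in self._parents.get(stack.pop(), ()):
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen


if __name__ == "__main__":
    g = LineageGraph()
    g.record("dataset:clean-v2", "dataset:raw-v1")
    g.record("model:ft-v3", "dataset:clean-v2", "model:base")
    print(sorted(g.upstream("model:ft-v3")))
    # ['dataset:clean-v2', 'dataset:raw-v1', 'model:base']
```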

Unified runtime

Run data processing, parallel data loading, and distributed training and fine-tuning on a single managed runtime

Advanced orchestration

Manage multiple teams and projects with multi-cloud, priority-aware scheduling and built-in budgets
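Priority-aware scheduling with budgets can be pictured as a priority queue plus a per-team spending check. The sketch below uses `heapq` to admit jobs highest priority first (first in, first out among ties) and rejects any job whose team lacks remaining budget. `Job`, `schedule`, and the GPU-hour costs are hypothetical; the sketch ignores multi-cloud placement and preemption and is not a description of Anyscale's scheduler.

```python
import heapq
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    team: str
    priority: int     # higher value runs first
    gpu_hours: float  # estimated cost charged against the team's budget


def schedule(jobs, budgets):
    """Admit jobs by priority (FIFO among ties) while each team has budget left."""
    # The submission index breaks ties, so Job objects are never compared.
    heap = [(-job.priority, seq, job) for seq, job in enumerate(jobs)]
    heapq.heapify(heap)
    remaining = dict(budgets)
    admitted, rejected = [], []
    while heap:
        _, _, job = heapq.heappop(heap)
        if remaining.get(job.team, 0.0) >= job.gpu_hours:
            remaining[job.team] -= job.gpu_hours
            admitted.append(job.name)
        else:
            rejected.append(job.name)  # over budget: left unscheduled
    return admitted, rejected


if __name__ == "__main__":
    jobs = [
        Job("eval", "research", priority=1, gpu_hours=2.0),
        Job("pretrain", "research", priority=5, gpu_hours=8.0),
        Job("finetune", "prod", priority=3, gpu_hours=4.0),
        Job("sweep", "research", priority=3, gpu_hours=4.0),
    ]
    print(schedule(jobs, {"research": 10.0, "prod": 4.0}))
    # (['pretrain', 'finetune', 'eval'], ['sweep'])
```

Here `sweep` loses its slot because the higher-priority `pretrain` job already consumed most of the research budget, while the cheaper, lower-priority `eval` still fits.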

Build. Run. Scale. Repeat.

Scale data and training steps with Ray on Anyscale without adding operational complexity.

Distributed deep learning with PyTorch on Ray

Scale end-to-end experimentation, from data preparation to training

Distributed Vision-Language-Action (VLA) models

Build advanced physical AI systems with multimodal datasets

Distributed LLM fine-tuning with DeepSpeed

Combine the power of Ray Train and DeepSpeed for LLM customization

Explore more on Anyscale

Multimodal data pipelines

Transform complex data modalities such as video, images, voice, text, and more into AI-ready datasets

Composite AI serving

Serve one or many models and Python applications working together as a single API endpoint

Embedding generation

Process large-scale multimodal datasets for AI applications with your model of choice