Model Serving

Composite AI serving

Streamline operations for complex inference services that mix models and Python code with Ray on Anyscale

Scalable processing for multi-step, online inference

Deploy multi-model, heterogeneous (CPU+GPU) inference pipelines as a single service

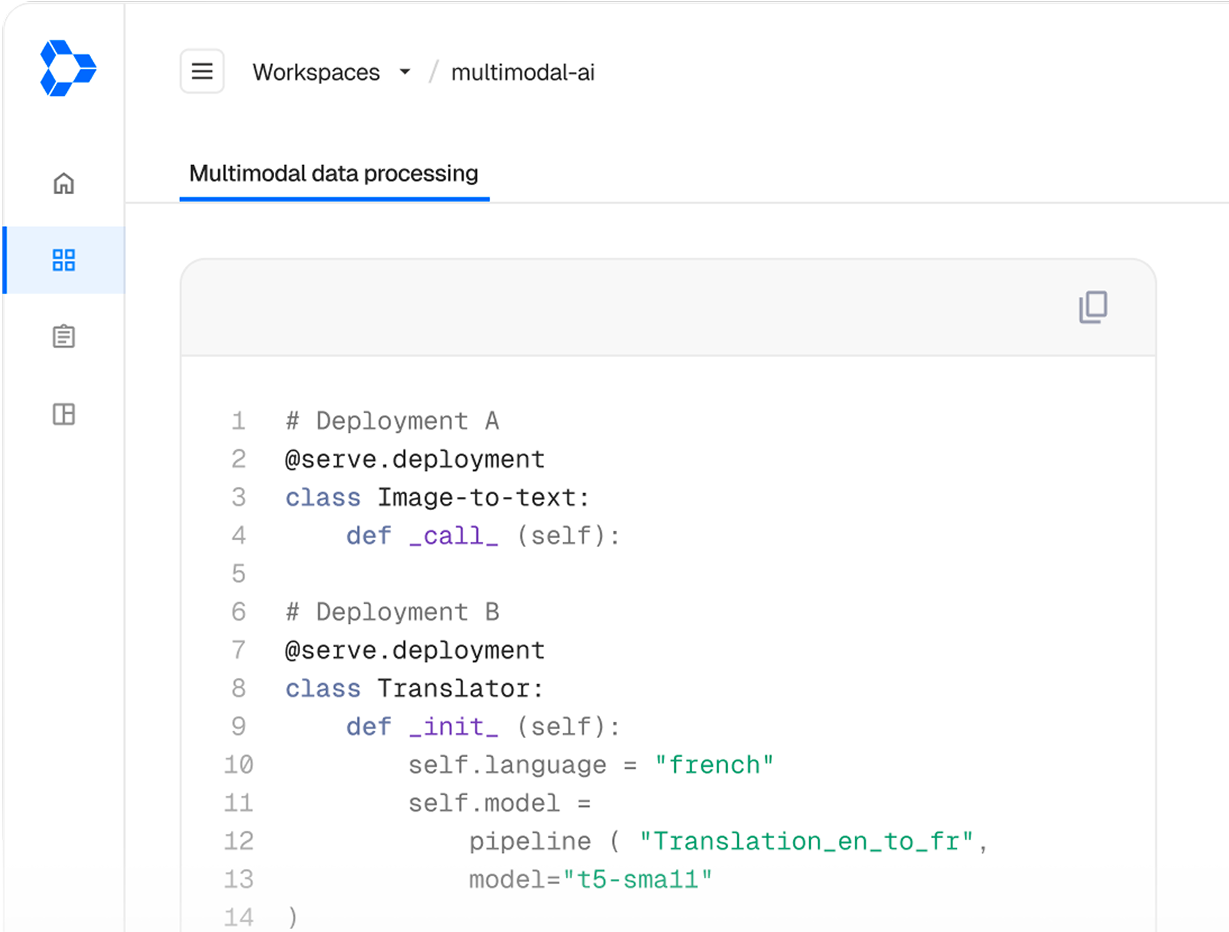

Deploy services with Python

Define multi-model, multi-step AI services in familiar Python, with no infrastructure orchestration required

Scale models of any size

Distribute anything from lightweight models to large foundation models using any inference framework

Streamline operations

Cluster autoscaling, service upgrades, blue/green rollouts, and A/B testing are handled automatically

“We needed a solution that could scale horizontally with our growth while maintaining strict low-latency performance requirements for our users. Anyscale was the answer.”

“One of our applied AI engineers said, ‘We should use this model,’ and the next day it was running in production. Before Anyscale, that would’ve taken a week or more.”

“Ray and Anyscale aligned with our vision: to iterate faster, scale smarter, and operate more efficiently.”

3x

Faster model deployment for their multimodal search service

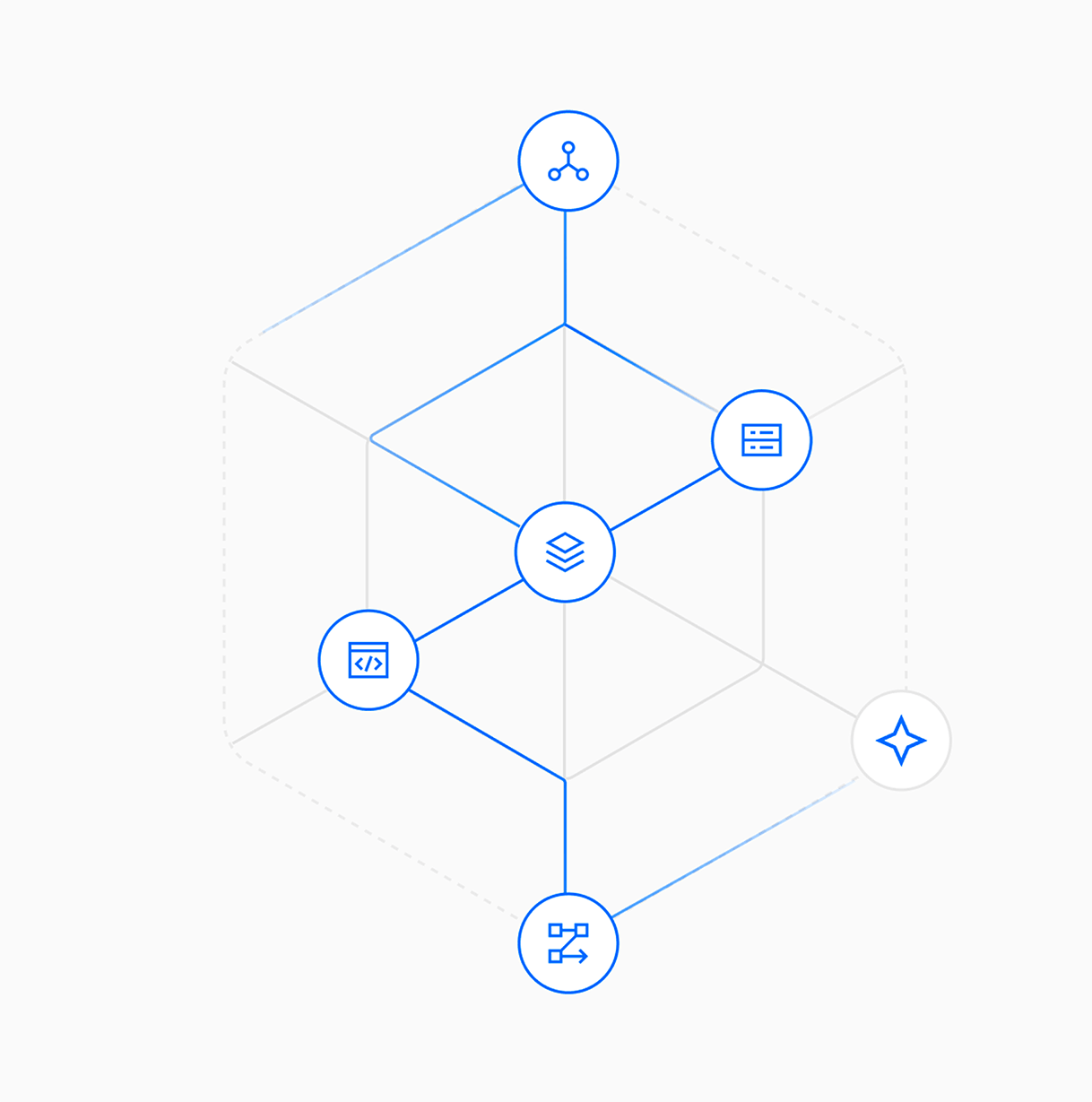

Unified execution for multi-model inference workflows

Ray on Anyscale abstracts the infrastructure away from complex inference services so you can focus on your application

Multi-framework support

Distributed high-performance inference with PyTorch, vLLM, SGLang, TensorRT, and more

Distributed LLM Inference

Serve massive models that span multiple nodes with automatic placement and coordination

Model multiplexing

Load multiple models into the same GPU memory and intelligently switch between them on the fly
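
To make the idea concrete, here is a minimal plain-Python sketch of multiplexing, assuming a least-recently-used eviction policy. It is an illustration of the concept only, not Ray Serve's API: `ModelMultiplexer` and `fake_loader` are hypothetical names, and the "models" are simple functions standing in for weights loaded onto a GPU.

```python
from collections import OrderedDict
from typing import Callable

Model = Callable[[str], str]


class ModelMultiplexer:
    """Keep at most `max_models` loaded and evict the least recently used one."""

    def __init__(self, loader: Callable[[str], Model], max_models: int = 2):
        self._loader = loader
        self._max_models = max_models
        self._loaded: "OrderedDict[str, Model]" = OrderedDict()
        self.load_count = 0  # how many times a model had to be (re)loaded

    def get(self, model_id: str) -> Model:
        if model_id in self._loaded:
            # Cache hit: mark the model as most recently used.
            self._loaded.move_to_end(model_id)
            return self._loaded[model_id]
        if len(self._loaded) >= self._max_models:
            # Cache full: drop the least recently used model to free memory.
            self._loaded.popitem(last=False)
        model = self._loader(model_id)
        self.load_count += 1
        self._loaded[model_id] = model
        return model

    def loaded_ids(self) -> list:
        return list(self._loaded)


def fake_loader(model_id: str) -> Model:
    # Hypothetical stand-in for loading model weights into GPU memory.
    return lambda prompt: f"{model_id}:{prompt}"
```

Requests for a model that is already resident are served immediately; a request for a new model triggers a load and, if the slot budget is exhausted, evicts the model that has gone unused the longest.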

Model composition

Chain multiple models to deliver richer, end-to-end AI applications from a unified endpoint
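
As a rough illustration of chaining, the sketch below composes three steps behind a single callable. It is plain Python under stated assumptions, not the Ray Serve API: `compose`, `preprocess`, `embed`, and `classify` are hypothetical names, and the "models" are toy functions.

```python
from typing import Any, Callable, List

Step = Callable[[Any], Any]


def compose(steps: List[Step]) -> Step:
    """Return one callable that runs each step on the previous step's output."""

    def pipeline(request: Any) -> Any:
        value = request
        for step in steps:
            value = step(value)
        return value

    return pipeline


def preprocess(text: str) -> List[str]:
    # Plain Python business logic: normalize and tokenize.
    return text.lower().split()


def embed(tokens: List[str]) -> List[int]:
    # Toy stand-in for an embedding model: one number per token.
    return [len(token) for token in tokens]


def classify(vector: List[int]) -> str:
    # Toy stand-in for a classifier model.
    return "long" if sum(vector) > 10 else "short"


# A single entry point that hides the three underlying steps.
endpoint = compose([preprocess, embed, classify])
```

Callers see only `endpoint`; the individual steps can be swapped or reordered without changing how the service is invoked.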

Independent scaling

Independently scale each component of a Ray Serve application – keeping performance high and costs low

Fractional GPU allocation

Maximize GPU utilization and reduce waste with fractional hardware allocations
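
The arithmetic behind fractional allocation can be sketched as follows. This is a simplified model, assuming every replica requests the same fraction of a GPU and that replicas pack onto whole GPUs by simple division; it ignores memory limits and is not a description of any particular scheduler. The function names are hypothetical.

```python
import math
from fractions import Fraction


def replicas_per_gpu(gpu_fraction: float) -> int:
    """How many replicas fit on one GPU if each requests `gpu_fraction` of it."""
    if not 0 < gpu_fraction <= 1:
        raise ValueError("gpu_fraction must be in (0, 1]")
    # Use exact rational arithmetic to avoid floating-point rounding surprises.
    share = Fraction(gpu_fraction).limit_denominator(1000)
    return math.floor(1 / share)


def gpus_needed(num_replicas: int, gpu_fraction: float) -> int:
    """Whole GPUs required to host `num_replicas` replicas."""
    return math.ceil(num_replicas / replicas_per_gpu(gpu_fraction))
```

Under these assumptions, ten replicas that each request a quarter of a GPU fit on three GPUs, compared with ten GPUs when each replica reserves a whole device.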

Build. Run. Scale. Repeat.

Deploy advanced AI applications without growing operational complexity with Ray on Anyscale.

Explore more on Anyscale

Multimodal data pipelines

Transform complex data modalities such as video, images, voice, text, and more into AI-ready datasets

LLM Reinforcement Learning

Scale RL post-training from a single node to thousands of GPUs with veRL, SkyRL, and more

Embedding generation

Process large-scale multimodal datasets for AI applications with your model of choice