Post-training

Reinforcement Learning for LLMs

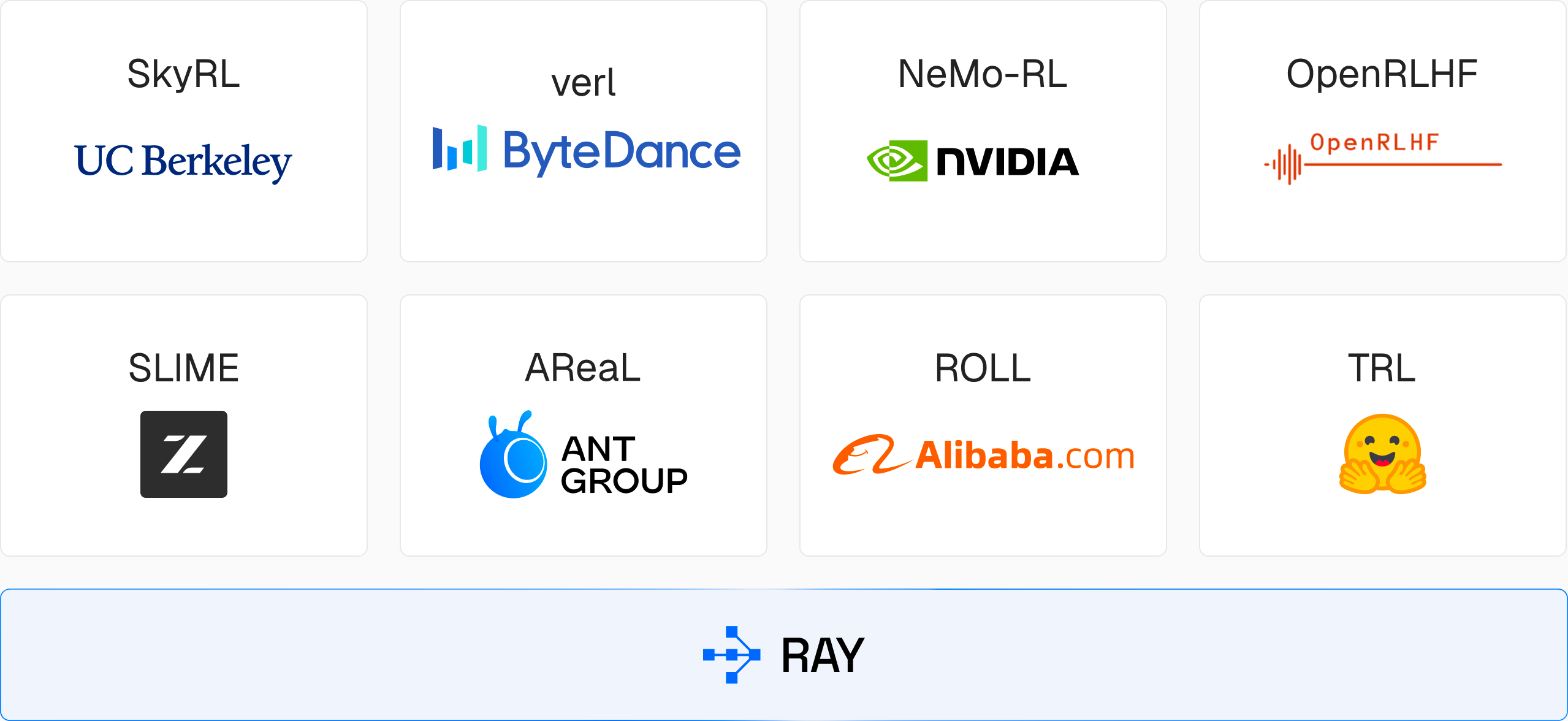

Scale RL post-training from a single node to thousands of GPUs with Ray, the engine for veRL, SkyRL, and more.

Scale RL with a unified engine for data, train and serve

Run the full post-training lifecycle on Ray, the world’s most widely adopted AI compute engine

End-to-end orchestration

Coordinate multiple frameworks running across CPU and GPU hardware with simple Python APIs.

Works with your RL library

veRL, SkyRL, OpenRLHF, and other leading RL libraries are built on Ray, so no rewiring is required.

Native AI framework integration

Ray works seamlessly with vLLM, SGLang, and Megatron to keep rollout generation fast and GPUs utilized.

“We built custom training infrastructure leveraging PyTorch and Ray to power asynchronous reinforcement learning at scale.”

4x

Token generation efficiency of the trained model compared to frontier models

Unified compute for reinforcement learning at scale

Ray on Anyscale abstracts RL infrastructure complexity so you can focus on development

Multi-framework support

Run veRL, SkyRL, OpenRLHF, NeMo-RL, and other leading RL libraries across any cluster size.

Inference engine integrations

Native support for vLLM and SGLang, the inference engines that power modern RL rollout generation.

Rack-aware scheduling

Optimize placement of training and inference workers across complex hardware topologies (in preview).

Agentic & multi-turn RL

Coordinate multi-step environments, tool use, and reward computation across complex agent trajectories.

One runtime for all stages

Eliminate fragmented tooling with data prep, fine-tuning, RL, and online inference on a single runtime.

Advanced observability

Profile CPU/GPU performance in distributed data, train or serve runs with persistent logs and dashboards.

Build. Run. Scale. Repeat.

With Ray on Anyscale, deploy advanced AI applications without adding operational complexity.

Explore more on Anyscale

Multimodal data pipelines

Transform complex data modalities such as video, images, voice, and text into AI-ready datasets.

Distributed training, fine-tuning

Scale existing training code from one machine to thousands of GPUs with intuitive scaling configs.

Composite AI serving

Serve one or many models and Python applications that work together behind a single API endpoint.