1. The problem: feeding GPUs at scale with multimodal data

GPU utilization in production AI pipelines regularly falls below 50% - often not because the model is slow, but because the upstream data pipeline cannot produce data fast enough. Multimodal AI has made this worse: models now consume video, point clouds, audio, and text, each requiring resource-intensive preprocessing before a GPU can touch it. How do you process hundreds of terabytes of heterogeneous data without starving your GPUs?

GPUs are the critical resource: model weights must persist in GPU memory across batches, requiring long-lived stateful workers rather than one-shot invocations. Keeping those workers busy requires a continuous stream of preprocessed inputs.

The bottleneck is typically upstream: decoding H.264 video, voxelizing lidar point clouds, and transforming these inputs. These CPU-bound operations account for up to 65% of total epoch time on multimodal workloads, and GPU instances carry a limited number of CPUs, too few to sustain them during training or inference.

The solution is disaggregated streaming: supplementing the main GPU fleet with a dedicated preprocessing fleet that streams data directly to the main GPU workers without needing to write intermediates to storage. This post works through why simpler approaches fail and how Ray Data implements this model.

2. Two naive architectures and why they break

The cost of staged batch execution

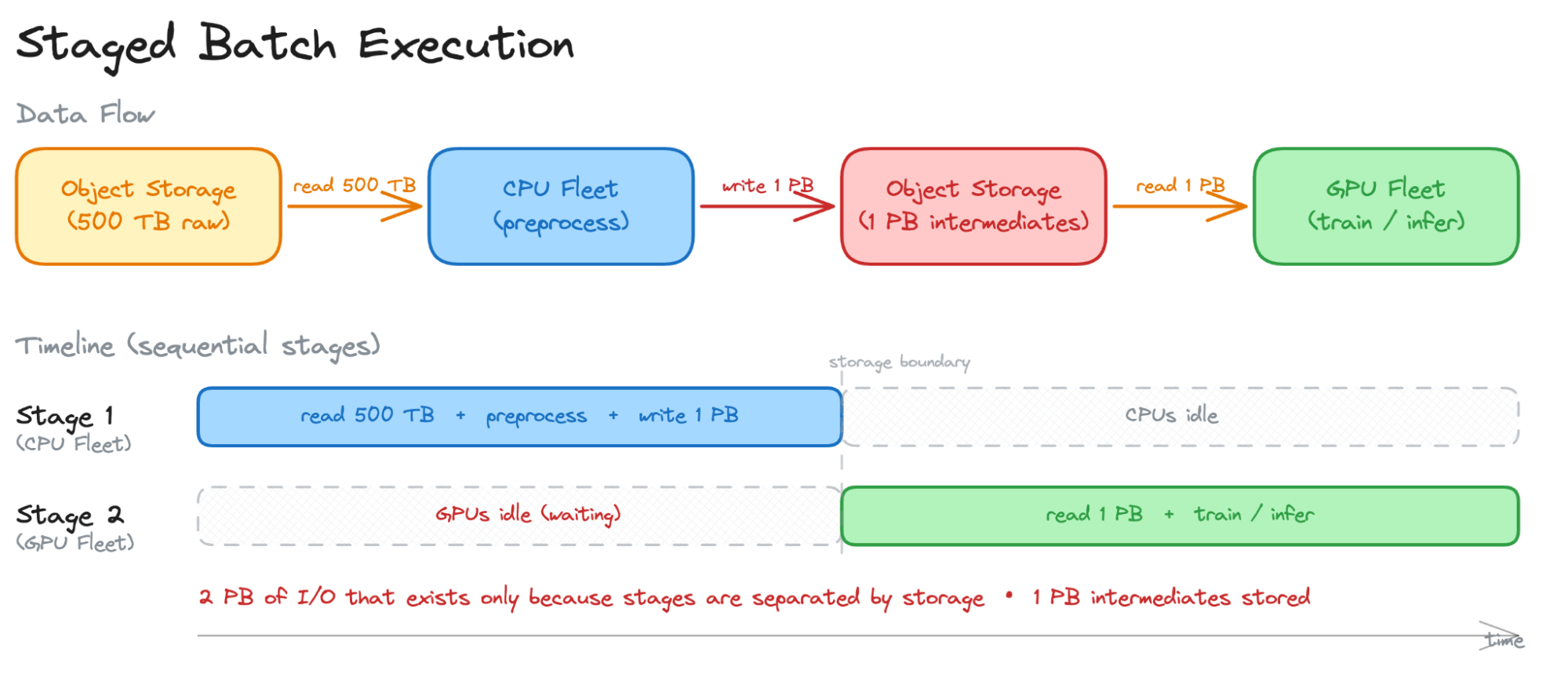

Staged batch execution is a traditional approach - familiar from Spark, MapReduce, and similar batch frameworks - which runs preprocessing and training as separate jobs, connected by storage.

The CPU fleet reads raw data, preprocesses it, writes the result back to storage, and then the GPU fleet reads it. Two storage round-trips exist purely because the execution model requires stages to be separated by a storage boundary. In practice, each stage is typically a separate containerized job connected by object storage.

Preprocessing often expands the data size - decoding compressed video into raw frames, tokenizing text, augmenting images - so intermediates can easily be 2–10x the raw size. A 500 TB raw dataset might produce 1–5 PB of intermediates that must be written and then read back.

Take the 500 TB example with 2x expansion (1 PB of intermediates), a 32-node CPU fleet, and an 8-node GPU fleet:

Read 500 TB raw from object storage: ~1.5 hours

Write 1 PB intermediates back to storage: ~3 hours

Read 1 PB intermediates on the GPU fleet: ~12 hours

Total: ~16.5 hours of I/O, during which either CPUs or GPUs are fully idle

Plus: storage costs for 1 PB of intermediates, request fees, cleanup
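The times listed above are consistent with roughly 2.9 GB/s of sustained object-storage bandwidth per node. That figure is not stated in this post; it is back-derived from the listed durations and used here only as an assumption. A minimal sketch of the arithmetic:

```python
# Back-of-the-envelope I/O time for the staged batch example.
# PER_NODE_BW is an assumption back-derived from the durations listed above.
TB = 1e12
PB = 1e15
PER_NODE_BW = 2.9e9  # bytes/second per node (assumed)


def transfer_hours(num_bytes: float, nodes: int, per_node_bw: float = PER_NODE_BW) -> float:
    """Hours to move num_bytes when `nodes` nodes transfer in parallel."""
    return num_bytes / (nodes * per_node_bw) / 3600


read_raw = transfer_hours(500 * TB, nodes=32)           # CPU fleet reads raw data
write_intermediate = transfer_hours(1 * PB, nodes=32)   # CPU fleet writes intermediates
read_intermediate = transfer_hours(1 * PB, nodes=8)     # GPU fleet reads intermediates
total = read_raw + write_intermediate + read_intermediate

print(f"read raw:            {read_raw:5.1f} h")
print(f"write intermediates: {write_intermediate:5.1f} h")
print(f"read intermediates:  {read_intermediate:5.1f} h")
print(f"total I/O:           {total:5.1f} h")
```

The GPU-side read dominates because only 8 nodes share the 1 PB of intermediates, which is why the smaller, more expensive fleet ends up waiting the longest.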

Intermediates are inspectable and replayable, which has real value for debugging. But the GPU fleet cannot begin until the CPU fleet has finished writing - a cold start that scales with dataset size. For workloads where augmentation transforms change each epoch, intermediates cannot be reused anyway: the full I/O cost is paid for data consumed exactly once.

Why single-node execution is suboptimal

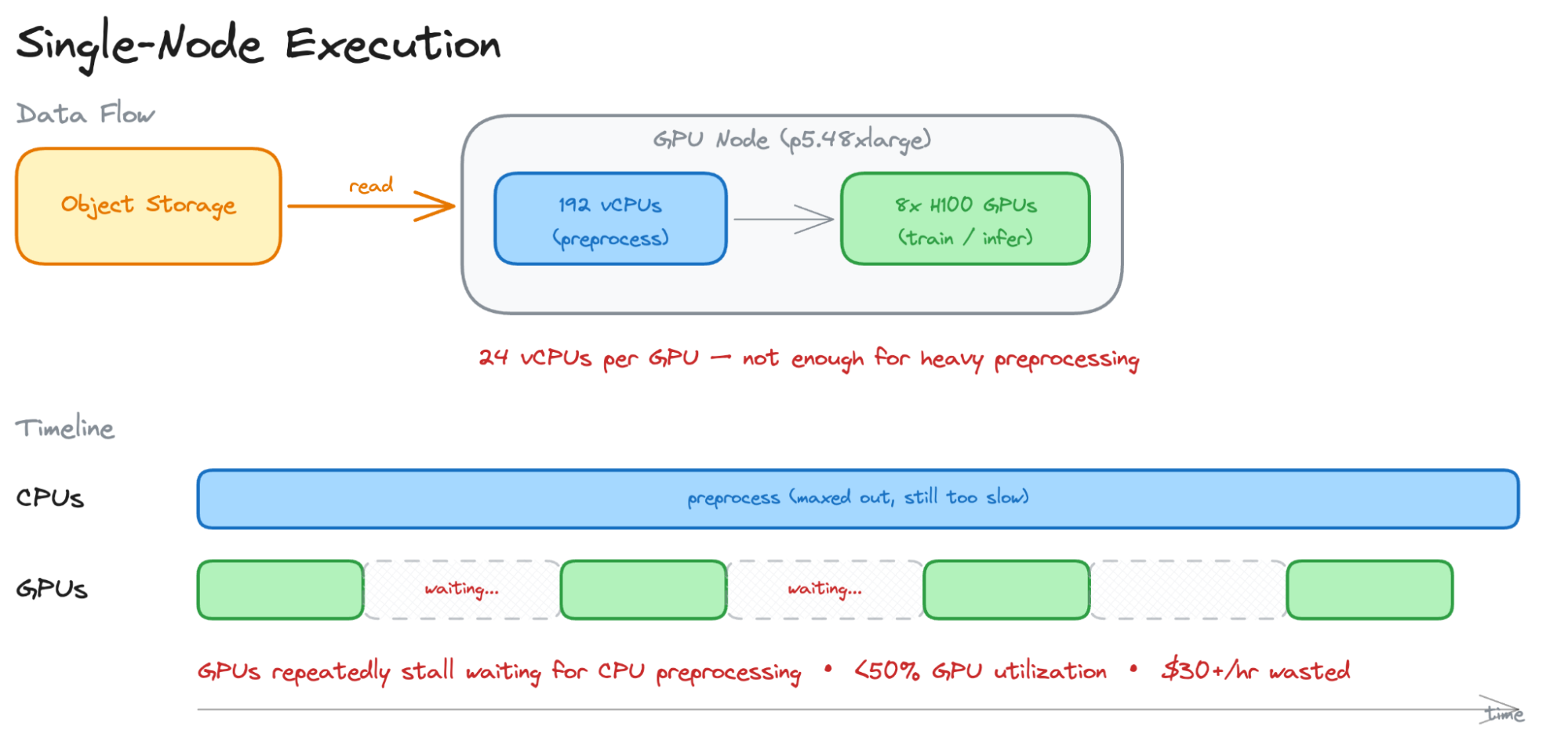

The natural instinct is to run the entire pipeline - read, preprocess, train - on the GPU node itself. No intermediate storage, no coordination overhead. But the CPU/GPU ratio on GPU instances makes this impractical for batch inference or training workloads with resource-intensive preprocessing.

| Instance | vCPUs | GPUs | vCPUs per GPU |
| --- | --- | --- | --- |
| p4d.24xlarge | 96 | 8× A100 | 12 |
| p5.48xlarge | 192 | 8× H100 | 24 |

Twelve to twenty-four vCPUs per GPU. For workloads where preprocessing is light (reading pre-tokenized text, loading already-decoded images), this can be enough. But for multimodal preprocessing - decoding H.264 video frame by frame, projecting lidar point clouds, running OCR - a single vCPU might handle one stream at real-time speed or slower. Twelve vCPUs cannot keep one A100 fed, let alone justify the cost of an 8-GPU node.
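To make the mismatch concrete, the sketch below estimates how many vCPUs one GPU needs in order to stay fed. The per-GPU consumption rate and per-vCPU preprocessing rate are hypothetical placeholders, not measurements from this post; substitute profiled numbers from your own workload.

```python
import math


def vcpus_needed(gpu_items_per_s: float, vcpu_items_per_s: float) -> int:
    """vCPUs required so preprocessing keeps pace with one GPU."""
    return math.ceil(gpu_items_per_s / vcpu_items_per_s)


# Hypothetical rates: one GPU consumes 2,000 frames/s,
# one vCPU decodes and transforms 30 frames/s.
required = vcpus_needed(gpu_items_per_s=2000, vcpu_items_per_s=30)

# vCPUs-per-GPU values are taken from the table above.
for instance, vcpus_per_gpu in {"p4d.24xlarge": 12, "p5.48xlarge": 24}.items():
    shortfall = max(0, required - vcpus_per_gpu)
    print(f"{instance}: needs {required} vCPUs/GPU, has {vcpus_per_gpu}, short by {shortfall}")
```

Under these assumed rates neither instance type comes close, and the gap can only be closed by adding CPUs that live somewhere other than the GPU node.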

For example, on a Vision Transformer inference workload processing roughly 4 TiB of data, supplementing 40 GPU nodes with 64 dedicated CPU nodes yields 7x the throughput of Daft, which is bound to the CPU capacity that the GPU instances provide.

This is the fundamental mismatch: GPU instances are designed to maximize GPU density, not CPU capacity. The CPUs on a GPU instance are there to orchestrate training or inference, not to run a preprocessing pipeline. Trying to do both means you either starve the GPUs (wasting $30+/hr of compute) or under-provision preprocessing (slowing the entire pipeline to CPU speed).

3. Disaggregated streaming

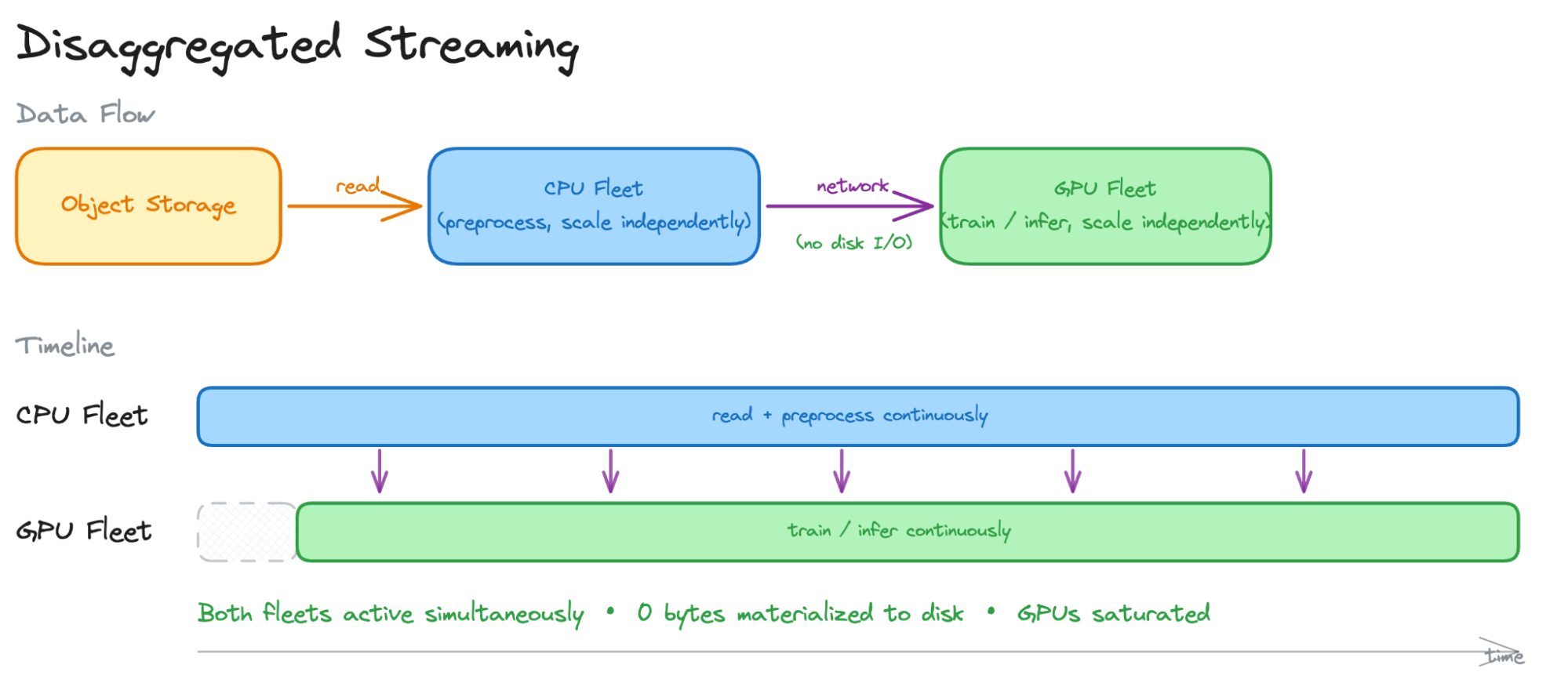

If batch execution wastes I/O and single-node execution wastes GPUs, the remaining option is to disaggregate: run preprocessing on a separate CPU fleet and stream the results directly to GPUs over the network.

One read from storage. Preprocessed data flows to GPUs directly as it is produced.

This model gives you three things at once:

No intermediate materialization. The ~15 hours spent writing and re-reading intermediates in the batch execution model disappear; only the single read of raw data remains. Intermediates exist only in memory buffers.

Independent CPU/GPU scaling. Need more preprocessing throughput? Add CPU nodes. Need more training or inference capacity? Add GPU nodes. The ratio is set by the workload, not by the instance spec sheet.

Overlapped execution. Preprocessing and training run concurrently. While GPU batch N is training, CPU nodes are already preparing batch N+1. No stage waits for the previous one to finish.
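The benefit of overlapped execution can be estimated with a simple two-stage timing model. This is an idealized sketch, assuming uniform per-batch times, no transfer cost, and hypothetical numbers; it is not a measurement.

```python
def staged_time(n_batches: int, cpu_s: float, gpu_s: float) -> float:
    """Stages run back to back: all preprocessing first, then all GPU work."""
    return n_batches * cpu_s + n_batches * gpu_s


def overlapped_time(n_batches: int, cpu_s: float, gpu_s: float) -> float:
    """Two-stage pipeline: after the first batch is prepared, the slower
    stage sets the pace, and the last batch still needs one GPU step."""
    return cpu_s + (n_batches - 1) * max(cpu_s, gpu_s) + gpu_s


# Hypothetical per-batch times (seconds) for the whole CPU fleet and GPU fleet.
n, cpu_s, gpu_s = 1000, 0.4, 0.5
staged = staged_time(n, cpu_s, gpu_s)
overlapped = overlapped_time(n, cpu_s, gpu_s)
print(f"staged: {staged:.1f} s, overlapped: {overlapped:.1f} s, "
      f"speedup: {staged / overlapped:.2f}x")
```

In this model the overlapped pipeline approaches the time of the slowest stage alone, which is why adding CPU nodes until preprocessing is no longer the slower stage is the natural tuning knob.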

(In practice the separation is not absolute: most pipelines also leverage the CPUs on GPU nodes, or add a mid-tier GPU fleet of cheaper T4/L4 instances for acceleration stages that would compete for memory on training hardware. See this post on cutting down the cost of Stable Diffusion training for an example.)

This reframes the design question: not "how do we store and retrieve intermediates faster," or "how do we fit more CPUs on a GPU node," but "how do we connect independent CPU and GPU fleets into a single pipeline without materializing intermediates?"

What primitives does disaggregated streaming require?

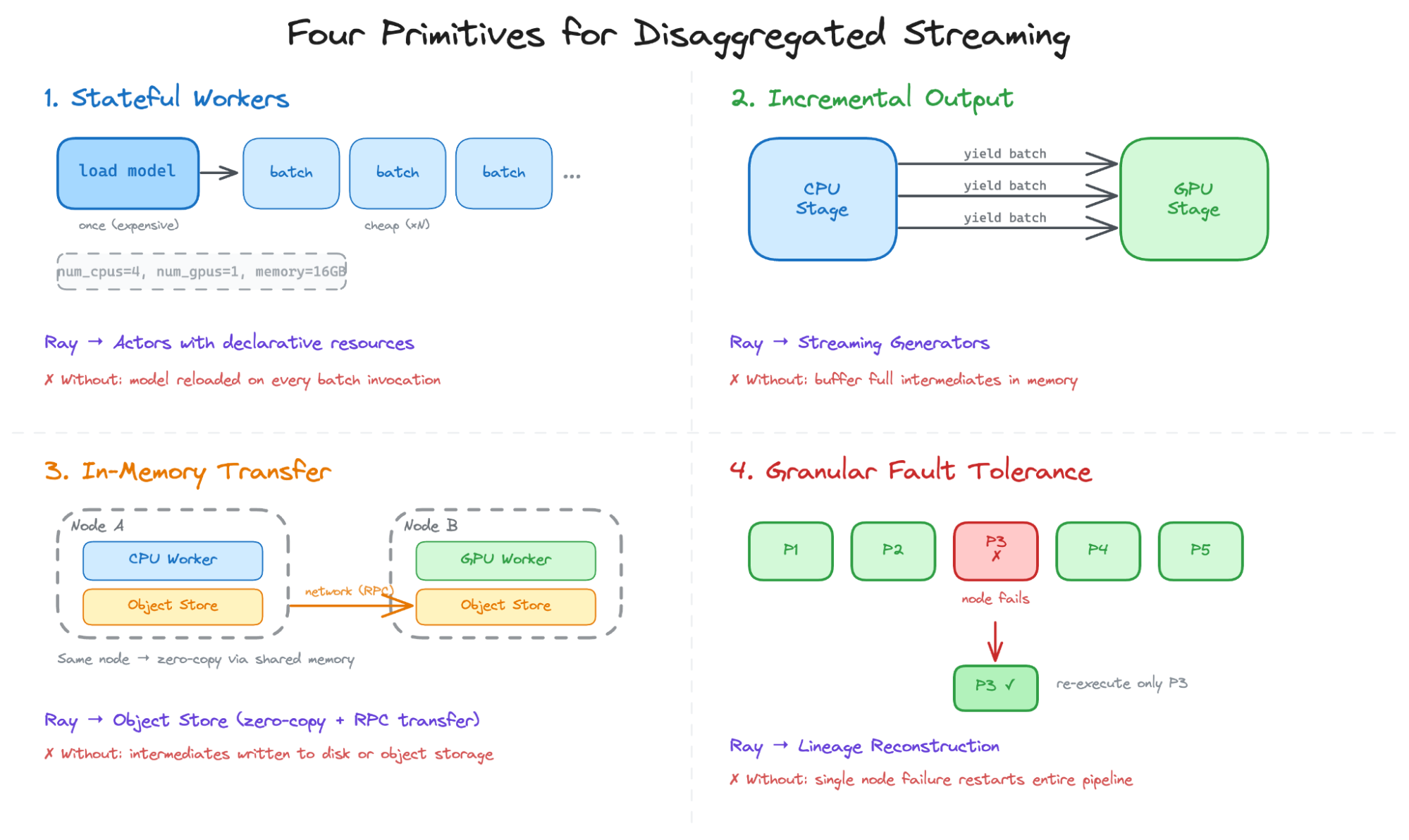

Any system that connects independent CPU and GPU fleets into a single pipeline without materializing intermediates needs four primitives.

Stateful workers with heterogeneous resource requests. Preprocessing stages load models - video decoders, OCR engines, tokenizers - that are expensive to initialize but cheap to apply per record. The system needs long-lived workers that load state once and process many batches, declaring resource requirements (CPU cores, GPUs, memory) so the scheduler can place them on appropriate hardware without manual machine mapping.

Incremental output with flow control. Workers must emit output batch-by-batch so downstream stages can consume immediately. Because stages run at different speeds, the system needs flow control to slow fast producers when slow consumers fall behind, preventing unbounded memory growth.

In-memory data transfer without disk materialization. When a CPU worker on node A produces a batch that a GPU worker on node B needs, the system must transfer it over the network without writing to disk or object storage.

Granular fault tolerance. Failures are expected in distributed systems. When a node goes down, only the lost partitions should be re-executed - not the entire pipeline.

These primitives are hard requirements. Remove stateful workers and you pay initialization on every batch. Remove incremental output and you buffer full intermediates. Remove in-memory transfer and you write them to disk. Remove granular fault tolerance and a single node failure restarts the entire pipeline. Serverless GPU platforms typically lack at least one of the four: each invocation reloads the model, returns output in full, has no shared memory or backpressure between functions, and offers no cross-invocation fault recovery.
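The first two primitives can be illustrated in plain Python. The sketch below is a single-process toy, not Ray code: `StatefulWorker` and its tiny vocabulary are hypothetical stand-ins for an actor holding an expensive model, and the generator stands in for incremental output.

```python
from typing import Iterable, Iterator, List


class StatefulWorker:
    """Toy long-lived worker: expensive state is loaded once in __init__."""

    loads = 0  # counts how many times state was initialized

    def __init__(self) -> None:
        StatefulWorker.loads += 1
        self.vocab = {"cat": 0, "dog": 1}  # stand-in for a model or decoder

    def process(self, batches: Iterable[List[str]]) -> Iterator[List[int]]:
        """Yield each output batch as soon as it is ready (incremental output)."""
        for batch in batches:
            yield [self.vocab.get(token, -1) for token in batch]


worker = StatefulWorker()
outputs = list(worker.process([["cat", "dog"], ["dog", "bird"], ["cat"]]))
print(outputs, "state loaded", StatefulWorker.loads, "time(s)")
```

A one-shot invocation model would construct the worker inside the loop and pay the load cost once per batch instead of once per worker.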

Ray is one of the few systems that provides all four primitives together in a general-purpose way with a Pythonic interface:

Actors → stateful workers. Long-lived processes with declarative resource requirements.

Streaming generators → incremental output. Tasks `yield` results batch-by-batch with built-in backpressure.

Object store → in-memory transfer. Shared-memory access on the same node (zero-copy for PyArrow/NumPy via pickle 5), RPC-based transfer across nodes.

Lineage reconstruction → fault tolerance. Every object's lineage is tracked; a node failure re-executes only the lost partitions.
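The idea behind lineage-based recovery can be shown with a toy in-process store. This is a conceptual sketch only: `LineageStore` is a hypothetical class, not Ray's object store, and it ignores distribution, dependencies between objects, and eviction.

```python
from typing import Callable, Dict, List


class LineageStore:
    """Toy object store that remembers how each partition was produced."""

    def __init__(self) -> None:
        self._recipes: Dict[int, Callable[[], List[int]]] = {}
        self._objects: Dict[int, List[int]] = {}
        self.executions = 0  # total number of times any recipe ran

    def _run(self, recipe: Callable[[], List[int]]) -> List[int]:
        self.executions += 1
        return recipe()

    def submit(self, part_id: int, recipe: Callable[[], List[int]]) -> None:
        """Record the recipe (lineage) and compute the partition once."""
        self._recipes[part_id] = recipe
        self._objects[part_id] = self._run(recipe)

    def lose(self, part_id: int) -> None:
        """Simulate a node failure that drops one partition from memory."""
        del self._objects[part_id]

    def get(self, part_id: int) -> List[int]:
        if part_id not in self._objects:  # lost: re-execute only this recipe
            self._objects[part_id] = self._run(self._recipes[part_id])
        return self._objects[part_id]


store = LineageStore()
for i in range(4):
    # Default argument binds the current i to each recipe.
    store.submit(i, lambda i=i: [x * x for x in range(i * 3, i * 3 + 3)])

store.lose(2)
recovered = store.get(2)
print(recovered, "executions:", store.executions)
```

Four partitions are computed up front and exactly one more execution is needed after the simulated failure; the three surviving partitions are never recomputed.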

Ray provides the primitives. Ray Data composes them into a pipeline execution model.

4. Ray Data's streaming batch execution

The streaming batch model

Distributed data processing has traditionally split into two camps. Batch systems (Spark, MapReduce) can fuse operators within a stage but need to materialize intermediates at stage boundaries - good for fault recovery and elastic scaling, but not for pipelining across heterogeneous CPU and GPU stages. Stream processors (Flink, Naiad) pipeline data with backpressure - but statically bind operators to fixed resources, making rebalancing and fault recovery expensive.

Ray Data implements the disaggregated streaming architecture using a third execution model - streaming batch - that combines the strengths of both. The key idea: use partitions or blocks as the unit of execution (enabling lineage recovery and dynamic resource assignment, as in batch systems), but allow partitions to be dynamically created and streamed between operators (enabling memory-efficient pipelining, as in streaming systems).

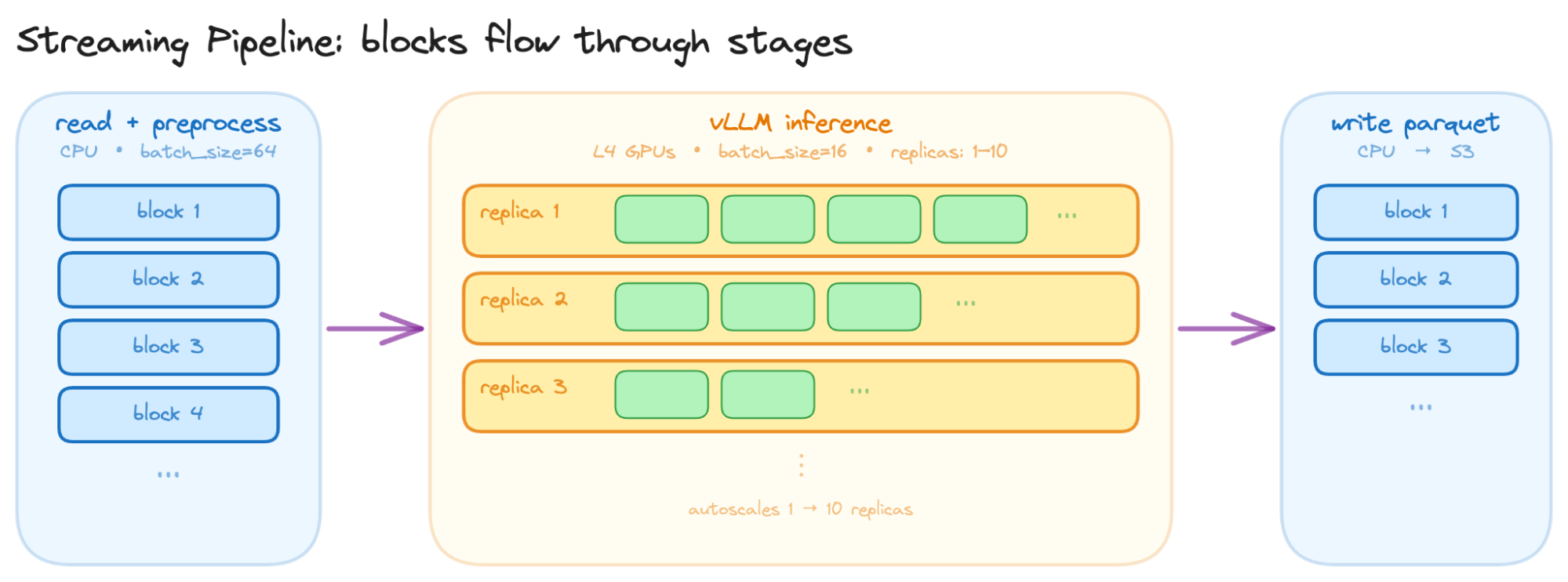

A Ray Data pipeline is a DAG of operators. Data enters as blocks (partitions of rows); each operator transforms blocks and passes them to the next. Execution is streaming: an operator begins emitting output blocks as soon as it has processed input, without waiting for the entire upstream to finish.
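The block-at-a-time flow can be mimicked with chained Python generators. This is a single-threaded, pull-based analogy with hypothetical operator names, not how Ray Data schedules work (Ray Data runs operators concurrently across a cluster), but it shows that the first block reaches the sink before the source has produced its second block.

```python
from typing import Iterator, List

events: List[str] = []  # order in which operators touch blocks


def read_blocks(n: int) -> Iterator[List[int]]:
    """Source operator: emits n small blocks of rows."""
    for i in range(n):
        events.append(f"read {i}")
        yield [i * 2, i * 2 + 1]


def double(blocks: Iterator[List[int]]) -> Iterator[List[int]]:
    """Map operator: transforms one block at a time."""
    for idx, block in enumerate(blocks):
        events.append(f"map {idx}")
        yield [x * 2 for x in block]


def write(blocks: Iterator[List[int]]) -> int:
    """Sink operator: consumes blocks as they arrive."""
    total = 0
    for idx, block in enumerate(blocks):
        events.append(f"write {idx}")
        total += sum(block)
    return total


total = write(double(read_blocks(3)))
print(events)
print("sum of written rows:", total)
```

The event log interleaves read, map, and write per block rather than finishing all reads first, which is the property that keeps only a few blocks in memory at any time.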

A concrete pipeline

Captioning a million images with a vision-language model illustrates the structure:

    import ray
    from ray.data import ActorPoolStrategy
    from ray.data.llm import build_llm_processor, vLLMEngineProcessorConfig

    # preprocess and postprocess are user-defined callables (not shown here)

    # Read raw images from object storage
    ds = ray.data.read_images("s3://bucket/raw-images/", include_paths=True)

    # Stage 1 (CPU): decode, resize, build model input schema
    # Input:  {"image": bytes, "path": str}
    # Output: {"messages": [...], "sampling_params": {...}}
    ds = ds.map_batches(
        preprocess,
        batch_format="pyarrow",
        batch_size=64,
    )

    # Stage 2 (GPU): run VLM inference on L4 GPUs
    # Up to 10 actor replicas, each holding a loaded model
    # ActorPoolStrategy keeps models in memory across batches
    processor_config = vLLMEngineProcessorConfig(
        model_source="Qwen/Qwen2.5-VL-3B-Instruct",
        # vLLM engine configuration
        engine_kwargs=dict(max_model_len=8192),
        # rows per inference batch
        batch_size=16,
        # GPU resource request
        accelerator_type="L4",
        # Autoscaling actor pool of replicas
        compute=ActorPoolStrategy(min_size=1, max_size=10, initial_size=5),
    )
    processor = build_llm_processor(processor_config, preprocess=None, postprocess=postprocess)
    ds = processor(ds)

    # Stage 3 (CPU): write results
    ds.write_parquet("s3://bucket/captions/")

Each stage declares its own resource requirements and batch size. The scheduler uses these annotations to place tasks and actors on the right nodes - the user doesn't map workers to machines. Note that Ray’s cluster autoscaler handles the heterogeneous node provisioning automatically, requesting the right resources from the underlying compute provider.

A few things worth noting about what this pipeline does not require:

No DSL or DAG definition language. Stages are composed in plain Python - standard control flow, no configuration files or graph builders.

No code rewriting. User-defined functions like `preprocess` are ordinary callables that operate on NumPy arrays, Pandas DataFrames, or PyArrow tables - the same code you would run in a script or notebook.

No per-stage containers or orchestrator. There are no separate container images to build for each stage and no workflow orchestrator to glue them together.

No manual stitching with vLLM. Ray Data provides an integration with vLLM that handles continuous batching, KV-cache management, and tensor-parallel sharding out of the box, configurable via the `engine_kwargs` field.

This makes the migration from a small-scale script or prototype to production a matter of resource annotation, not a major code or infrastructure overhaul.
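The captioning pipeline references a `postprocess` callable without showing it. One plausible shape is sketched below as a plain row-level function. It assumes the processor's output rows expose the model output under a `generated_text` key and that the input `path` column is carried through; both are assumptions to verify against the Ray version you run, and the example file name is hypothetical.

```python
from typing import Any, Dict


def postprocess(row: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only the fields that should be written out.

    Assumes the model output is in row["generated_text"] and that the
    original "path" column survives the inference stage (both assumed).
    """
    return {
        "path": row.get("path"),
        "caption": (row.get("generated_text") or "").strip(),
    }


# Hypothetical row, shaped like the assumed processor output.
example = {
    "path": "s3://bucket/raw-images/0001.jpg",
    "generated_text": " A dog running on a beach. ",
}
print(postprocess(example))
```

Because the function is an ordinary callable on a dictionary, it can be unit-tested locally before being handed to the pipeline.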

Dynamic resource scaling

How this differs from pure streaming systems. Stream processors like Flink also pipeline data between stages without intermediate materialization. But they typically allocate a fixed set of resources to each operator at deployment time. If the preprocessing stage needs more CPU, you reconfigure and redeploy.

Ray Data's approach is different: resource allocation is dynamic. The streaming executor continuously monitors operator throughput and adjusts resource budgets across operators to maximize end-to-end throughput. If preprocessing falls behind and GPUs are starving for data, more CPU budget shifts to preprocessing; if postprocessing or writing becomes the bottleneck, CPU budget rebalances there. The goal is to keep throughput maximized at all times. This is not manual rebalancing - it is a runtime scheduling loop.
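The intuition behind shifting budget toward the bottleneck can be sketched with a greedy allocator. This is an illustrative toy, not Ray Data's scheduling algorithm: the stage names and per-core rates are hypothetical, and a real executor works from live measurements rather than fixed rates.

```python
from typing import Dict


def allocate_cores(per_core_rate: Dict[str, float], total_cores: int) -> Dict[str, int]:
    """Greedy: give each spare core to whichever stage is currently slowest."""
    if total_cores < len(per_core_rate):
        raise ValueError("need at least one core per stage")
    cores = {stage: 1 for stage in per_core_rate}
    for _ in range(total_cores - len(cores)):
        # Stage throughput is modeled as cores * rows/s per core.
        bottleneck = min(cores, key=lambda s: cores[s] * per_core_rate[s])
        cores[bottleneck] += 1
    return cores


# Hypothetical rows/s per core for each CPU stage.
rates = {"decode": 10.0, "transform": 40.0, "write": 80.0}
plan = allocate_cores(rates, total_cores=16)
throughput = min(plan[s] * rates[s] for s in rates)
print(plan, "pipeline throughput:", throughput, "rows/s")
```

Most of the cores end up on the slowest stage per core, and rerunning the allocation with updated rates is the toy equivalent of the rebalancing loop described above.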

Dynamic partitioning

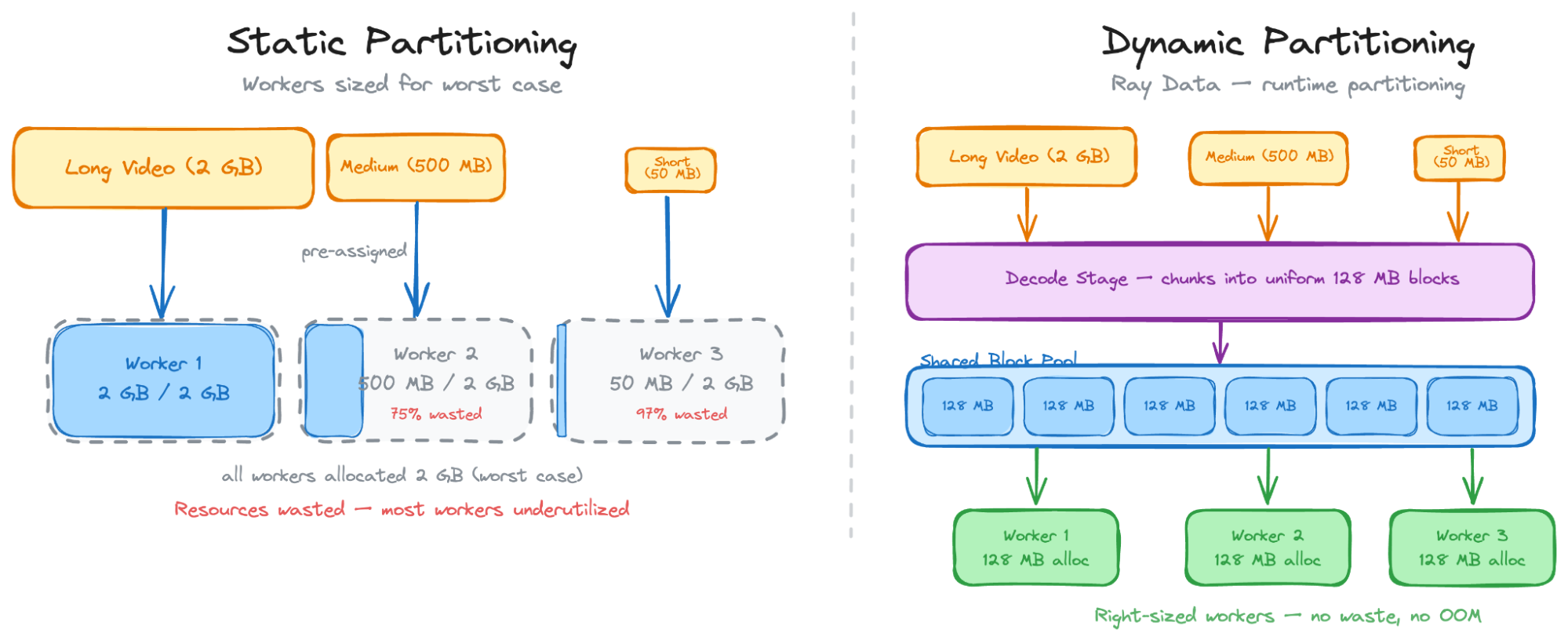

Batch systems fix the partition plan before execution, and some might naively partition based on the number of files on disk. A video that decodes into 3x more frames than expected blows memory; a partition of short clips leaves its worker idle.

Ray Data defers partitioning to runtime: each stage flushes a new partition when its buffer hits a target size (128 MB by default), based on actual in-memory size. Workers pull from a shared pool rather than processing pre-assigned shards - fast workers pull more, slow workers don't hold back the pipeline.
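Size-based flushing can be sketched in a few lines. This is a minimal illustration, not Ray Data's implementation: `dynamic_blocks` is a hypothetical helper, rows are plain `bytes`, and the target is 32 bytes instead of 128 MB so the behavior is visible on tiny inputs.

```python
from typing import Iterable, Iterator, List


def dynamic_blocks(rows: Iterable[bytes], target_bytes: int) -> Iterator[List[bytes]]:
    """Group rows into blocks, flushing when the buffered size reaches the target."""
    buffer: List[bytes] = []
    buffered = 0
    for row in rows:
        buffer.append(row)
        buffered += len(row)  # measured in-memory size, not a file count
        if buffered >= target_bytes:
            yield buffer
            buffer, buffered = [], 0
    if buffer:  # flush whatever remains at end of input
        yield buffer


# Skewed input: one row is much larger than its neighbors.
row_sizes = [10, 10, 50, 5, 5, 5, 40, 3]
rows = [b"x" * size for size in row_sizes]
blocks = list(dynamic_blocks(rows, target_bytes=32))
block_bytes = [sum(len(r) for r in block) for block in blocks]
print("rows per block:", [len(b) for b in blocks], "bytes per block:", block_bytes)
```

The number of rows per block varies with the data, while block size stays near the target; a plan fixed before execution cannot adapt this way because it does not know the decoded sizes.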

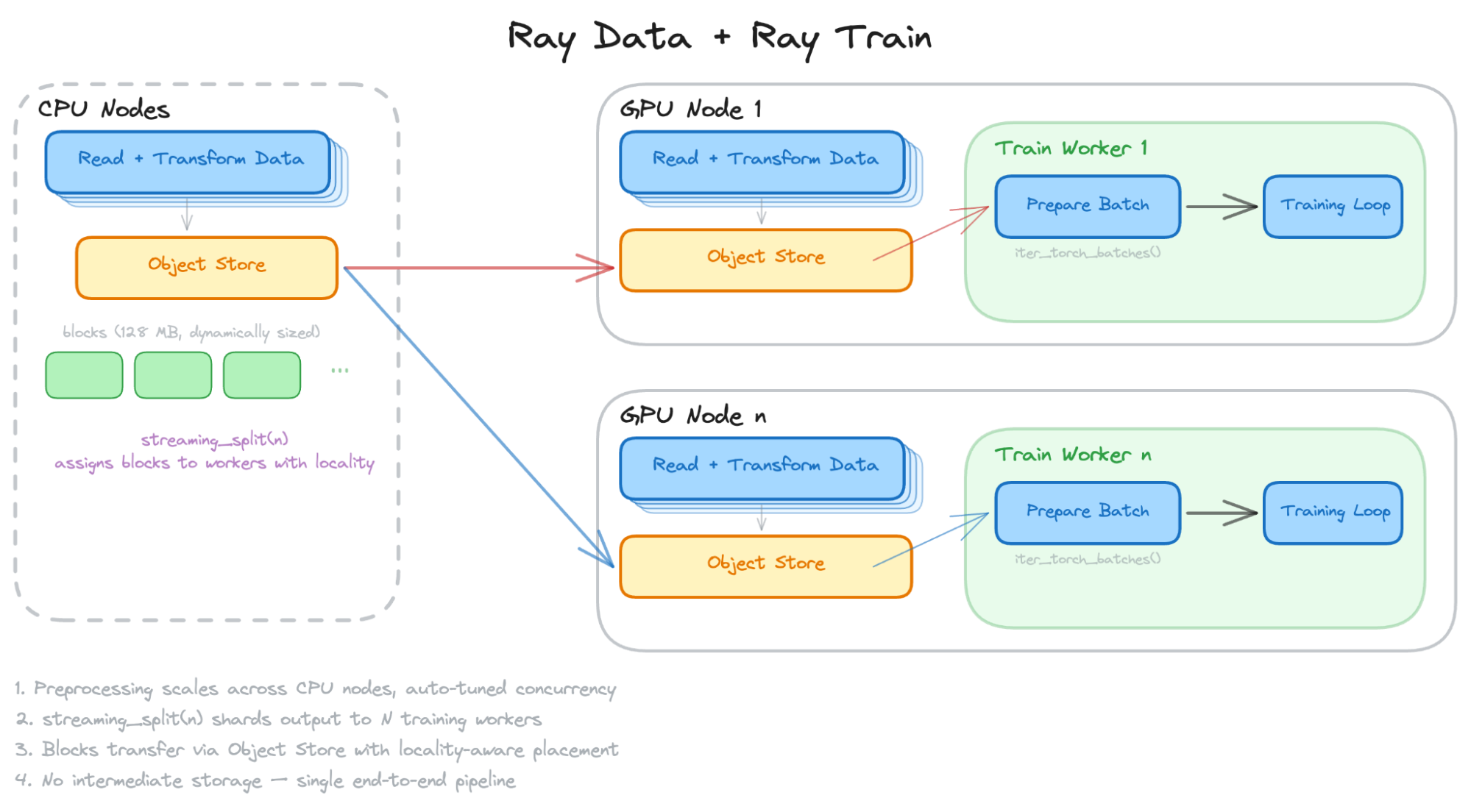

Streaming into training

For training workloads, Ray Data offers a streaming split to automatically shard the pipeline output into N live iterators - one per training worker (DDP, FSDP, DeepSpeed) - without materializing the dataset. A coordinator assigns partitions to workers with locality awareness, and each worker receives formatted tensors. The full path - read, preprocess, split, train - is a single pipeline with no intermediate storage.
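The pull-based split can be imitated with threads sharing one lazy iterator. This is a toy under stated assumptions: `streaming_split` here is a hypothetical local function, threads stand in for training workers, and there is no locality awareness or equalization of shard sizes as a real coordinator might provide.

```python
import threading
from typing import Dict, Iterator, List

_DONE = object()  # sentinel marking an exhausted source


def streaming_split(source: Iterator[int], n_workers: int) -> Dict[int, List[int]]:
    """Each worker pulls the next block from a shared lazy source on demand."""
    lock = threading.Lock()
    received: Dict[int, List[int]] = {rank: [] for rank in range(n_workers)}

    def worker(rank: int) -> None:
        while True:
            with lock:  # one block is handed out at a time
                block = next(source, _DONE)
            if block is _DONE:
                return
            received[rank].append(block)

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received


shards = streaming_split(iter(range(20)), n_workers=4)
all_blocks = sorted(block for shard in shards.values() for block in shard)
print({rank: len(blocks) for rank, blocks in shards.items()})
```

Every block is delivered to exactly one worker and the source is never materialized as a whole; how many blocks each worker receives depends on how fast it pulls.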

Backpressure and memory management

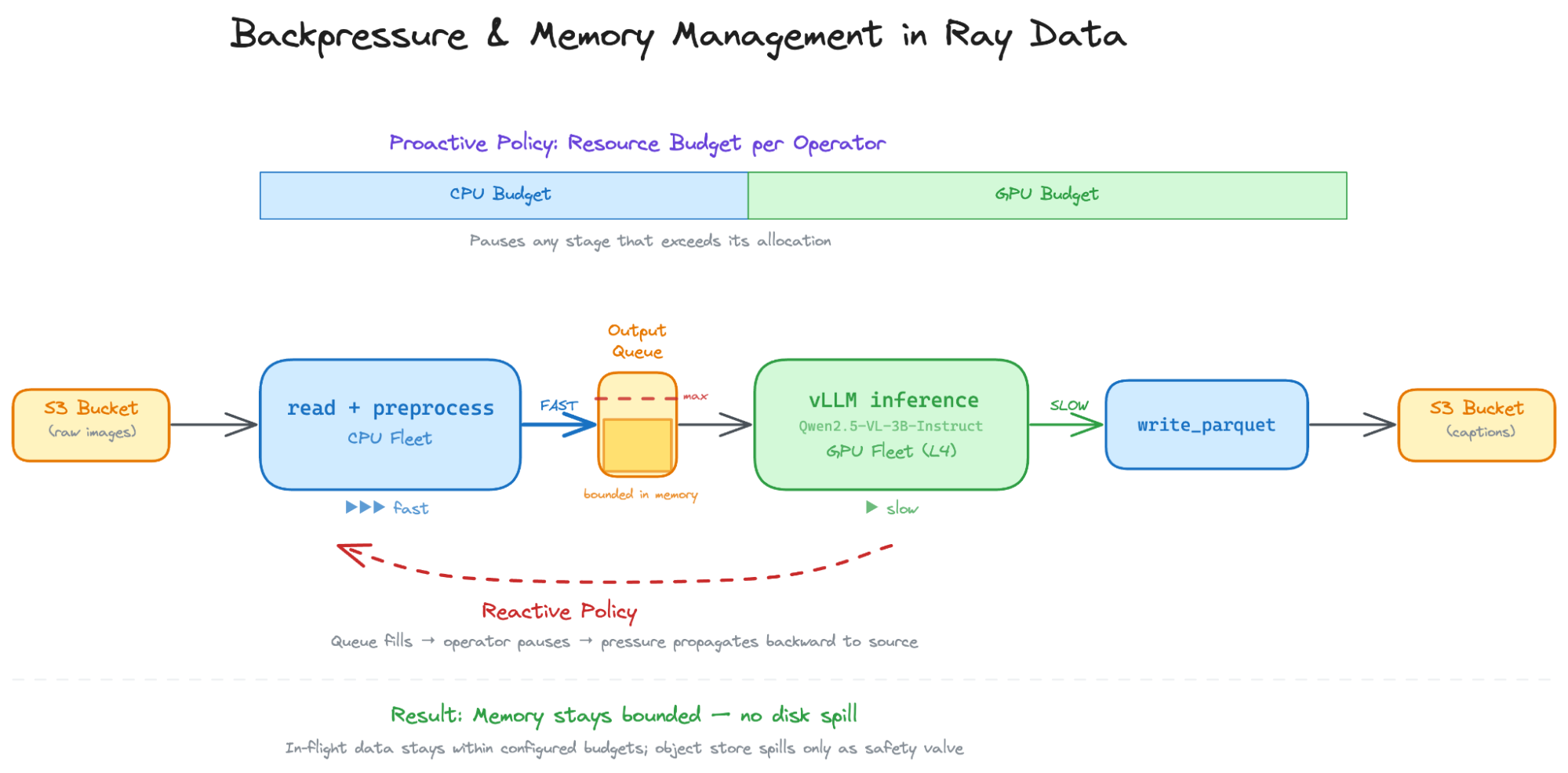

The disaggregated streaming model has an operational problem that is easy to overlook: what happens when the stages run at different speeds?

The problem. CPU preprocessing is often faster per-byte than GPU inference - especially when the GPU stage involves a large model. Without coordination, the CPU fleet decodes and streams data faster than the GPU fleet can consume it. Processed batches pile up in memory and eventually spill to disk, slowing down the pipeline significantly.

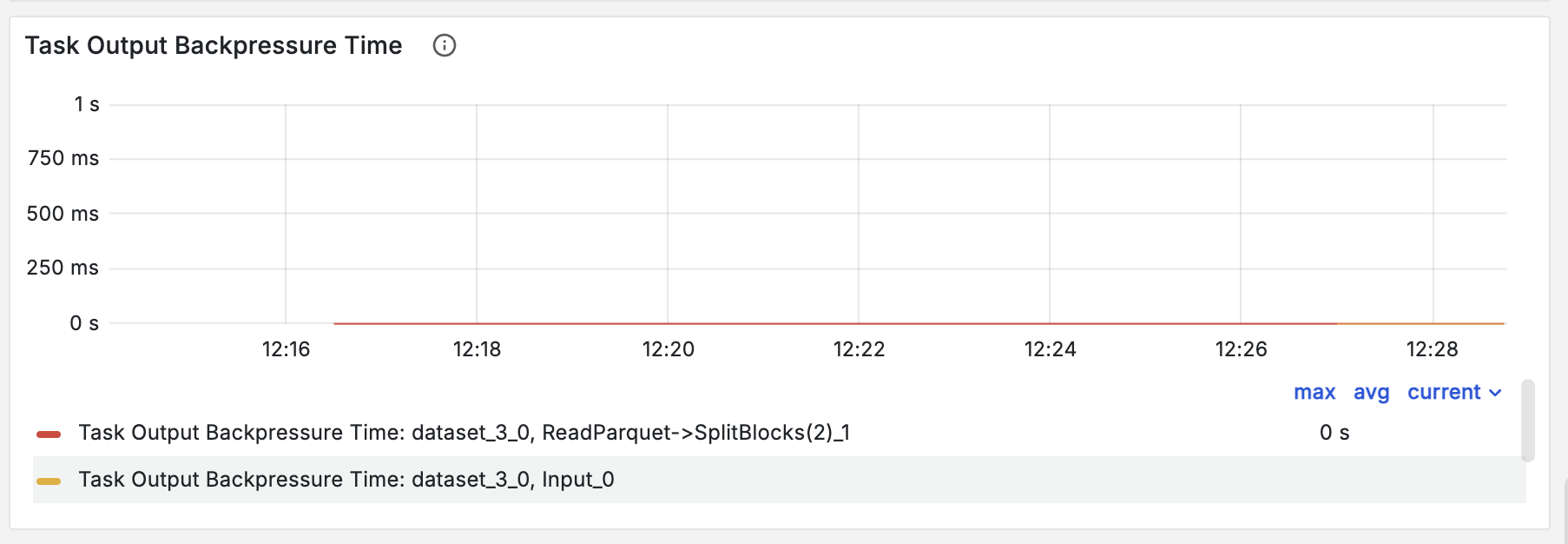

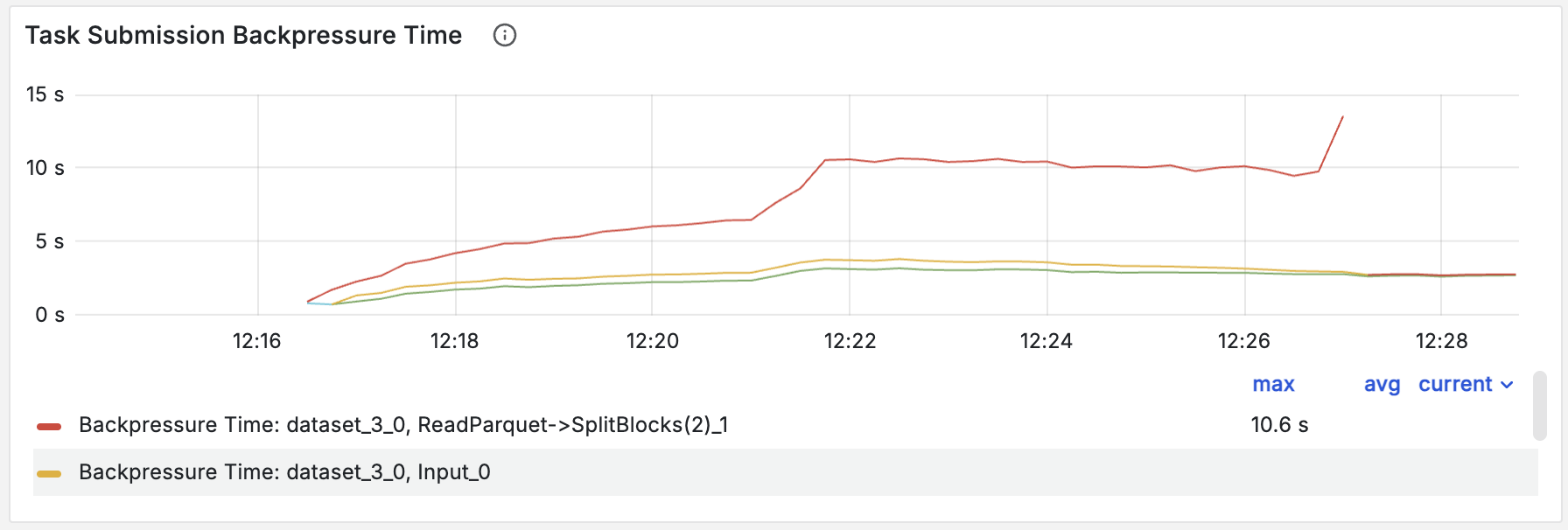

How Ray Data handles it. Ray Data applies two complementary backpressure policies. A proactive policy divides the cluster's resource budget across stages and pauses any stage that exceeds its allocation. A reactive policy monitors each operator's output queue relative to downstream capacity - when output accumulates faster than it can be consumed, the operator pauses and pressure propagates backward through the DAG, all the way to the data source.

Together, these policies keep memory bounded without manual tuning. Ray's object store will spill to disk under memory pressure as a safety valve, but in a well-functioning pipeline this should not happen - in-flight data stays within the configured budgets and intermediates never touch disk.
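The reactive half of this behavior resembles a bounded buffer between a fast producer and a slow consumer. The sketch below uses Python's standard `queue.Queue` as an analogy; it is not Ray Data's implementation, and the buffer limit and sleep time are hypothetical.

```python
import queue
import threading
import time

MAX_IN_FLIGHT = 4   # buffer budget in batches (hypothetical)
N_BATCHES = 50

buffer: "queue.Queue" = queue.Queue(maxsize=MAX_IN_FLIGHT)
peak_depth = 0
consumed = []


def producer() -> None:
    """Fast stage: put() blocks while the buffer is full, pausing production."""
    global peak_depth
    for i in range(N_BATCHES):
        buffer.put(i)
        peak_depth = max(peak_depth, buffer.qsize())
    buffer.put(None)  # sentinel: no more batches


def consumer() -> None:
    """Slow stage: simulates GPU work with a short sleep per batch."""
    while True:
        batch = buffer.get()
        if batch is None:
            return
        time.sleep(0.001)
        consumed.append(batch)


producer_thread = threading.Thread(target=producer)
consumer_thread = threading.Thread(target=consumer)
producer_thread.start()
consumer_thread.start()
producer_thread.join()
consumer_thread.join()
print(f"consumed={len(consumed)} peak_depth={peak_depth} (limit {MAX_IN_FLIGHT})")
```

The producer never gets more than the configured number of batches ahead, so memory stays bounded no matter how much faster it is than the consumer.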

Observability

A streaming pipeline over hundreds of TB will run for hours or days. Knowing where the bottleneck is - and whether backpressure is working - is not optional.

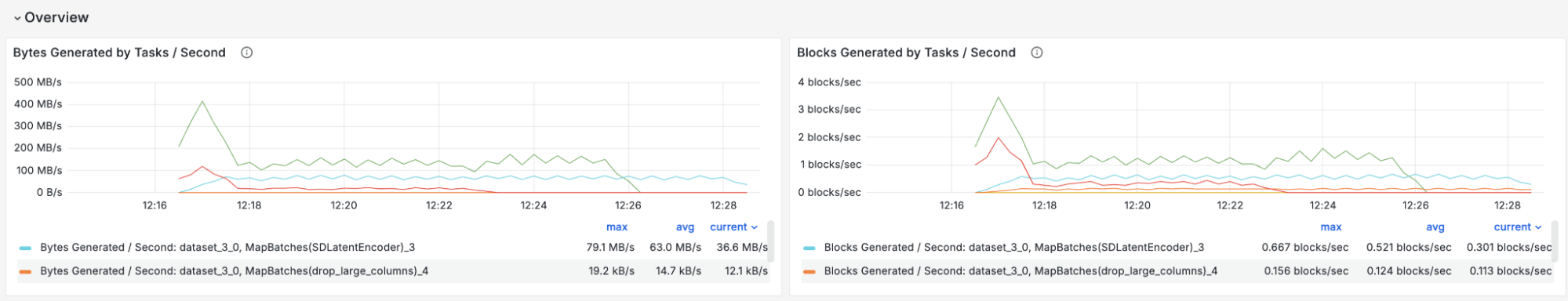

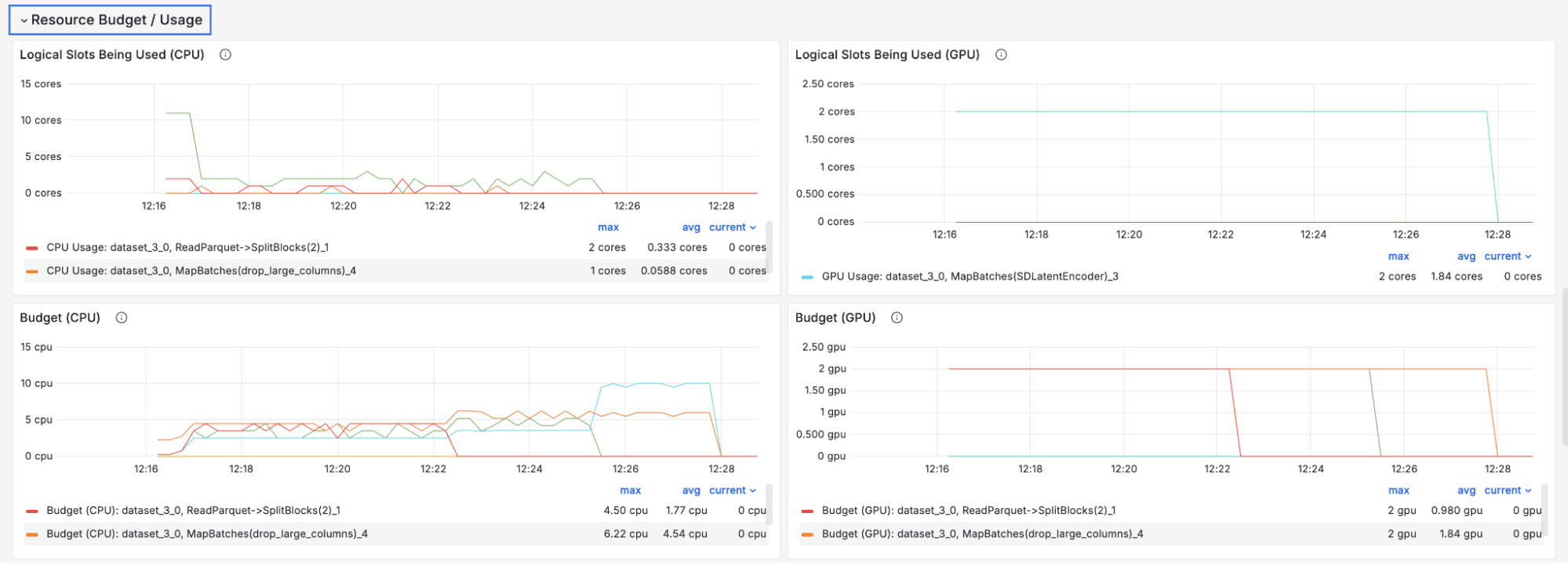

Ray Data exposes per-operator metrics in real time such as:

Throughput (in rows/bytes/blocks per second)

Resource budget and usage (memory, CPU/GPU cores used vs allocated)

Backpressure information (time spent with task outputs or new task submissions paused by backpressure)

These are available as Prometheus metrics and in a dashboard that shows execution progress per operator, making it straightforward to identify which stage is the bottleneck, whether memory pressure is causing spill, and whether the resource budget allocation is effective.

5. Closing: where the math lands

The gap between what GPUs can consume and what an upstream pipeline can produce is real and large. The execution model is how you close it. The properties that make disaggregated streaming work - streaming execution, dynamic partitioning, heterogeneous resource scaling, memory-aware backpressure - are not optimizations. They are necessary. Remove any one and the pipeline either wastes GPUs, crashes on memory, or bogs down on skewed data.

The results bear this out: across heterogeneous batch inference workloads, Ray Data improves throughput by 2.5–12x compared to staged batch (Spark) and stream processing (Flink) systems. A video classification workload (VideoMAE on Kinetics-700-2020: 64,535 videos, 137 GB from S3, 4 nodes each with 1 A10G GPU) shows both ends: Spark takes 116 minutes and produces no output for the first 53, forced to spill all intermediates between stages; Ray Data finishes the same job in roughly 10 minutes at 88% of the theoretical GPU maximum, a 2.5x throughput gain over Flink.

On a Stable Diffusion pre-training run over 2 billion images, a disaggregated cluster (40 A10G nodes for CLIP encoding and preprocessing, plus A100 nodes for UNet training with FSDP) raised throughput from 2,811 to 4,075 images/second and cut wall-clock time from 111 hours to 77 hours: a 31% reduction compared to a single-node PyTorch DataLoader. CLIP encoding moves off the expensive A100 nodes onto cheaper A10G nodes, preprocessing and training run concurrently rather than sequentially, and the A100s spend no cycles on CPU-bound decoding. See this blog post for a detailed breakdown of the results.

These are not theoretical gains. ByteDance runs multimodal batch inference over 200 TB of image and text data per job, embedding content into a shared vector space using a 10-billion-parameter vision-language model pipeline-sharded across GPUs. Pinterest processes images through dozens of embedding models daily to power visual search across billions of pins. Notion migrated its entire embeddings pipeline - text chunking on CPUs pipelined with GPU-based embedding generation - from Spark to Ray, anticipating a 90%+ reduction in infrastructure costs.

Other major AI infrastructure organizations have independently converged on the same architecture: NVIDIA's NeMo Curator ships a native Ray Data executor that drives its processing stage, alongside a separate backend for Cosmos Xenna, a Ray-based pipeline library that manages its own actor pools and backpressure. Alibaba's Data-Juicer, which has processed 70 billion samples on 6,400 CPU cores, uses Ray as its distributed backend.

As models get larger and data gets more heterogeneous, these properties become more important, not less. The design question is not "how do we make storage faster" but "how do we architect the pipeline so the most expensive resources in the cluster are never waiting."

Further reading

Streaming Batch: A New Execution Model for Heterogeneous Data Processing - Luan, Wang et al., 2025. The paper describing the streaming batch execution model and its benchmarks against Spark and Flink.

GPU Inefficiency in AI Workloads - Analysis of GPU utilization in production ML pipelines.
